Radeon HD 5770, Radeon HD 4890, And GeForce GTX 275 Overclocked

There's Always Some Headroom There...

Writing an article about overclocking is always a mixed blessing. On one hand, it gives us an excuse to push hardware to its limits and enjoy the feeling of garnering extra performance for free. On the other hand, we’re always very aware that our readers may not be able to achieve the same levels of success because there are so many factors involved.

Cooling is a good example. While the reference designs that grace the first cards to hit the market do their jobs well enough, after-market solutions tend to yield better results, as do models carrying a manufacturer’s own design. Heatpipes are good, and more heatpipes tend to be better. Along the same lines, a bigger heat sink is also beneficial, as the thermal load can be dissipated over a larger area. This is a very important point, since a reference cooler is designed to keep the GPU at a temperature of about 80 to 90 degrees Celsius at stock speeds while (hopefully) producing only moderate noise.

If you increase your GPU's core frequency, resulting in higher heat output, the fan will become progressively louder, spinning up until it reaches its maximum speed in an effort to keep the graphics chip from overheating. Once this point is reached, the card is running at the highest clock speed the cooler will allow. Usually, stock coolers only provide a little headroom. And while the fan will try to cope with the additional heat by blowing more air onto the cooler, it can only do so much with the surface area it has at its disposal.

Something similar happens when you have two brawny graphics cards working together in an SLI or CrossFire setup. Although the cards' fans spin up from 85 to 100 percent duty cycle, they end up fighting a losing battle against the heat output of two cards running at full blast. The problem here is airflow. Too little cool air enters the case, so what little air there is inside the enclosure gets circulated again and again, heating up in the process. At some point, the air gets so warm that the cooler effectively stops cooling the GPU, resulting in a temperature buildup in the GPU, as well as other components.

To recap: the higher the ambient temperature in the case, the harder it is to keep the graphics card cool.
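To put rough numbers on that relationship, here is a minimal sketch that treats the cooler as a single thermal resistance, so that GPU temperature equals case air temperature plus heat output times that resistance. This is a textbook simplification rather than a measurement of any card in this test, and every value in it (the 0.4 °C/W resistance, the 120 W load, the case temperatures) is a hypothetical placeholder.

```python
def gpu_temperature_c(ambient_c: float, power_w: float, r_th_c_per_w: float) -> float:
    """Steady-state GPU temperature under a lumped thermal-resistance model.

    ambient_c     -- air temperature inside the case (deg C)
    power_w       -- heat the GPU has to shed (W)
    r_th_c_per_w  -- cooler's thermal resistance (deg C per W), assumed constant
    """
    return ambient_c + power_w * r_th_c_per_w


def max_power_w(ambient_c: float, limit_c: float, r_th_c_per_w: float) -> float:
    """Largest heat load the cooler can shed before the GPU exceeds limit_c."""
    return max(0.0, (limit_c - ambient_c) / r_th_c_per_w)


if __name__ == "__main__":
    R_TH = 0.4      # hypothetical cooler, deg C per W
    LOAD_W = 120.0  # hypothetical heat output under load
    LIMIT_C = 100.0 # hypothetical throttle point

    for case_air_c in (25.0, 35.0, 45.0):
        temp = gpu_temperature_c(case_air_c, LOAD_W, R_TH)
        budget = max_power_w(case_air_c, LIMIT_C, R_TH)
        print(f"case air {case_air_c:.0f} C: GPU {temp:.1f} C, "
              f"heat budget {budget:.1f} W")
```

In this model, every degree of warmer case air raises the GPU temperature by a degree and shrinks the heat budget left for overclocking. A real cooler's thermal resistance also changes with fan speed, which this sketch ignores.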

Two more points to consider are the card’s BIOS and its graphics drivers. Ideally, both should support overclocking and clock scaling (lowering the clock speeds for the GPU and memory when the card is in 2D mode) where possible. In some cases, card makers don’t do their homework. They overclock the GPU by five percent and sell the card as an OC Edition. While this does boost 3D performance under load, locking the graphics chip into a higher frequency means the board doesn't scale down when idle. The only winning party here is your power company. The other side effect of the GPU being stuck at max speed is that it constantly produces the same amount of heat, whether you’re browsing the Web or playing a graphically demanding 3D game. The cooler’s fans are never idle, either.

As a rule of thumb, frequencies should drop to 300/600/100 MHz (GPU/shader/memory) in 2D mode on Nvidia cards. For cards built around an ATI chip, scaling will depend on the model. Although the graphics chip is usually down-clocked to 240 or 500 MHz, the GDDR5 memory on older cards tends to stay at its top speed. Thus, overclocking also has an immediate effect on the idle power consumption of either vendor's products, because they keep running at the higher clocks in 2D mode.


The final factor, GPU voltage, is also controlled by the card maker. In the worst case, the card’s voltage is locked in place, which leaves very little overclocking margin. Although there are some ways to alter GPU voltage using BIOS modifications or utilities like ATI Tool, even these aren’t guaranteed to work for all cards. Also, don’t forget that overclocking your card almost certainly voids your warranty, since you’re running your hardware outside of specifications the manufacturer considers safe. Almost all current graphics cards have a mechanism to prevent overheating. Once they reach 100 degrees Celsius or so, they automatically reduce their clock speed. But no matter what, raising the GPU’s voltage significantly increases the risk of damaging your hardware.
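To see why voltage matters so much for heat, consider the common first-order approximation that a chip's dynamic power scales with clock frequency times the square of voltage. The sketch below applies that rule of thumb. It ignores leakage current, which also rises with voltage and temperature, so real cards will typically fare worse than these figures suggest. The function name and the example ratios are ours, chosen purely for illustration.

```python
def relative_dynamic_power(clock_ratio: float, voltage_ratio: float = 1.0) -> float:
    """Dynamic power relative to stock, using the P ~ f * V^2 approximation.

    clock_ratio   -- overclocked frequency divided by stock frequency
    voltage_ratio -- raised voltage divided by stock voltage (1.0 = untouched)
    """
    if clock_ratio <= 0 or voltage_ratio <= 0:
        raise ValueError("ratios must be positive")
    return clock_ratio * voltage_ratio ** 2


if __name__ == "__main__":
    # Hypothetical overclocks: (clock ratio, voltage ratio)
    scenarios = {
        "+10% clock, stock voltage": (1.10, 1.00),
        "+10% clock, +5% voltage": (1.10, 1.05),
        "+15% clock, +10% voltage": (1.15, 1.10),
    }
    for label, (clock, volts) in scenarios.items():
        extra = (relative_dynamic_power(clock, volts) - 1.0) * 100.0
        print(f"{label}: about {extra:.0f}% more dynamic power")
```

Under this approximation, a 10 percent clock increase on its own costs roughly 10 percent more dynamic power, while pairing it with a 5 percent voltage bump pushes the figure to roughly 21 percent. All of that extra power has to leave through the same cooler.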

MSI ventured into voltage mod territory with its GeForce GTX 260 Lightning, a card that uses a special driver add-on allowing users to increase the GPU voltage at higher clock speeds to improve stability. MSI seems to have become more cautious with the card’s successor, the GeForce GTX 275 Lightning. Its software now displays a warning explaining that, while changes to certain parameters are possible, they can also result in damage to the hardware.

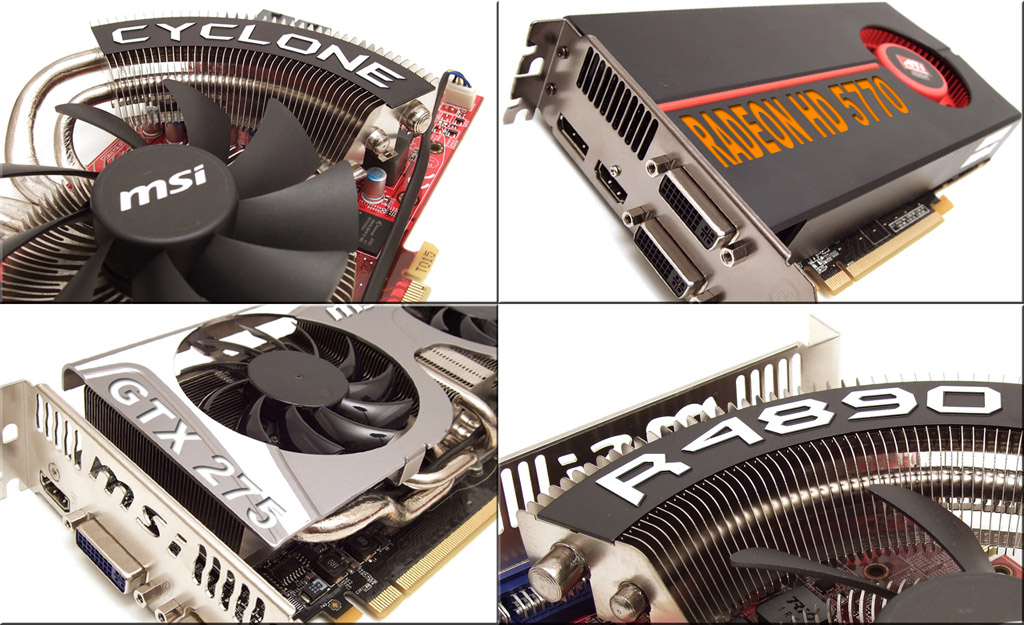

Our objective today is to attempt to reach the performance level of the next-highest class of graphics hardware through overclocking. For this test, we ordered two special models from MSI that come with beefier cooling solutions and are sold as OC editions, namely the GTX 275 Lightning and the HD 4890 Cyclone SOC. As it turns out, reaching our goal was a snap on the GTX 275 Lightning, which can take on a reference GeForce GTX 285 once it is overclocked. ATI’s Radeon HD 4890 is already the fastest single-GPU card in the 4800-series, so we’ll only be comparing the improved overclocked performance to that of the reference card. Our final candidate is ATI’s brand-new Radeon HD 5770. Without giving away too much, we can say that this card blew us away, amply demonstrating the scalability of the 40 nm production process.


amdgamer666 Nice article. Ever since the 5770 came out I've been wondering how far someone could push the memory to relieve that bottleneck. Being able to push it to 1430 allows it to be competitive with its older sibling and makes it enticing (with the 5700 series' extra features of course)

Onyx2291 Damn some of these cards run really well for 1920x1200 which I run at. Could pick up a lower one and run just about anything at a decent speed if I overclock well. Good ol charts :)

skora If you're trying to get to the next card's performance by OCing, shouldn't the 5850 be benched also? I know the 5770 isn't going to get there because of the memory bandwidth issue, but you missed the mark. One card is compared to its big brother, but the other two aren't.

I am glad to see the 5770 produce playable frame rates at 1920x1200. Nice game selection also.

quantumrand I'm really disappointed that there aren't any benchmarks from the 5870 or 5850 series included. Why even bother with the GTX 295 or 4870x2 and such without the higher 5-series Radeons?

I mean if I'm considering an ATI card, I'm going to want to compare the 5770 to the 5850 and 5870 just to see if that extra cost may be justified, not to mention the potential of a dual 5770 setup.

presidenteody I don't care what this article says, when the 5870 or 5970 become available I am going to buy a few.

kartu Well, at least in Germany 4870 costs quite a bit less (30-40 Euros) compared to 5770. It would take 2+ years of playing to compensate for it with lower power consumption.

kartu "Power Consumption, Noise, And Temperature" charts are hard to comprehend. Show bars instead of numbers, maybe?

arkadi Well that put things in perspective. I was really happy with 260gtx numbers, and I can push my evga card even higher easy. Too bad we didn't see the 5850 here, it looks like the optimal upgrade 4 gamers on the budget like myself. Great article overall.

B16CXHatch I got lucky with my card. Before, I had a SuperClocked 8800GT from EVGA. I ordered a while back, a new EVGA GeForce GTX 275 (896MB). I figured the extra cash wasn't worth getting an overclocked model particularly when I could do it myself. I get it, I try to register it. The S/N on mine was a duplicate. They sent me an unused S/N to register with. I then check the speeds under one utility and it's showing GTX 275 SuperClocked speeds, not regular speeds. I check 2 more utilities and they all report the same. I had paid for a regular model and received a mislabeled SuperClocked. Flippin sweet.

Now they also sell an SSC model which is overclocked even more. I used the EVGA precision tool to set those speeds and it gave me like 1 or 2 extra FPS is Crysis and F.E.A.R. 2 already played so well without overclocking. So overclocking on these bad boys doesn't really do much. Oh well.

One comment though, GTX 275's are HOT! Like, ridiculously hot. I open my window in 40 degree F weather and it'll still get warm in my room playing Team Fortress 2.

With the 5970 out there seems to be nothing else about graphic cards that interests me anymore :D It's supposed to be the fastest card yet and beats Crysis too!