Mocap For Less: Noitom Perception Neuron Suit, Hands On


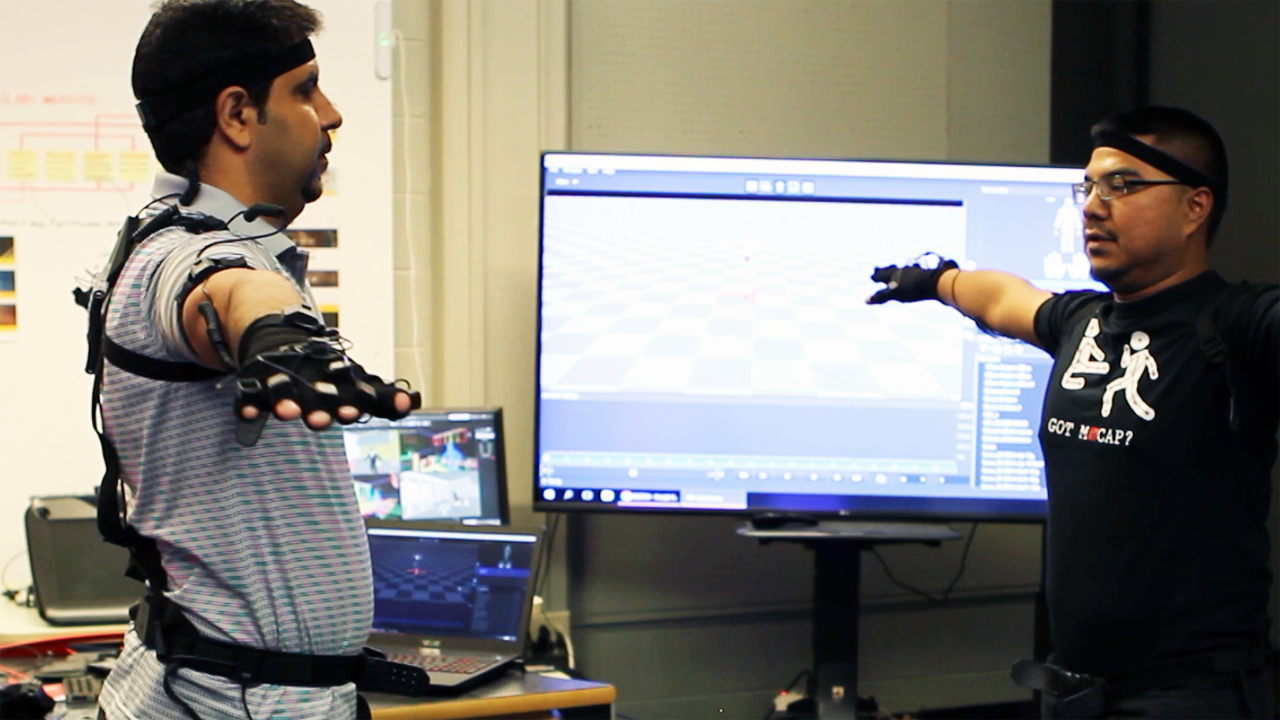

When we first heard about Noitom’s surprisingly inexpensive motion capture (mocap) suit, the Perception Neuron, Cloudhead Games was using it to record an actor’s performance for a VR game. We recently got to see the suit in person and watch the Noitom crew rig up a volunteer and configure the suit for motion capture. All things considered, the whole process is relatively quick and easy, and the suit's design is brilliant in its simplicity.

The Suit

Mocap suits are typically not cheap, but Noitom figured out a way to create one for $1,500. You can see from the results of Cloudhead Games’ The Gallery, Episode One: Call of the Starseed that the Perception Neuron punches well above its weight class. Its simplicity not only ensures a lower cost, it also makes the suit rather flexible.

All you really need is the suit, Unity software, a decent PC, and some creative ideas to start making things with the Perception Neuron.

“Suit” may actually be a bit of a misnomer; it’s not as if you don the Perception Neuron like a garment. Instead, it’s more like pulling on a pair of gloves and then wrapping a few straps around key areas of your body--your head, arms, lower back, legs, feet, and between your shoulders.

On each strap, you’ll find a node. Each node has a mount for a small sensor, which Noitom calls a “neuron.” Each neuron measures 12.5 x 13.1 x 4.3mm and is connected to all the other sensors (er, neurons) on your body through simple cabling, via Pogo pin connectors, from node to node.

The neurons each have an inertial measurement unit (IMU) that includes a gyroscope and accelerometer.

The whole kit connects to a belt-mounted hub, which is then connected to a PC via a wired (USB 2.0) or wireless (802.11b/g/n Wi-Fi, 2.4 GHz) connection. (In our demo, Noitom used a wired connection. Obviously, being so tethered is problematic, but there are definitely mocap scenarios where that’s not an issue, such as seated capture or hand-only capture.)

The glove system is slightly different. There’s a node mounted on the wrist, but instead of connecting to one other node, it splits out and connects to a series of neurons mounted on the back of the hand and the topmost knuckle on all five fingers. (The thumb and index finger get two neurons each.)

You can also opt to simplify the glove system with neurons on just the thumb, middle finger, and index finger. Noitom said that the full glove system is better for gaming, because it provides more fidelity.

There’s an optional battery pack you can attach to the suit if you’re going wire-free. Noitom said that the battery pack it currently has can keep the Perception Neuron humming for about three or four hours.

The nodes each produce a flickering red LED light, which makes the whole thing appear alive and slightly Terminator-like.

Keep It Simple, Keep It Clean

In addition to the overall simplicity of the Perception Neuron, the suit is flexible, too. For example, although a standard kit employs 32 neurons, there’s also an 18-neuron version you can snag. Even so, there are multiple “modes” of use that employ various numbers of neurons:

- Single arm: 3-11 neurons
- Upper body: 11-25 neurons
- Full body: 18-32 neurons
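If you're trying to work out which modes a given kit can cover, the ranges above are easy to check in a few lines. This is only an illustrative sketch: the `MODES` table and `modes_for` helper are our own names, not part of Noitom's SDK, and treating the ranges as inclusive at both ends is an assumption.

```python
# Neuron-count ranges per capture mode, as listed above.
# Assumption: both ends of each range are inclusive.
MODES = {
    "single arm": (3, 11),
    "upper body": (11, 25),
    "full body": (18, 32),
}


def modes_for(neuron_count: int) -> list[str]:
    """Return the capture modes whose range includes neuron_count."""
    return [
        name
        for name, (low, high) in MODES.items()
        if low <= neuron_count <= high
    ]


if __name__ == "__main__":
    for count in (3, 18, 32):
        print(count, "neurons:", ", ".join(modes_for(count)))
```

By this reading, the 18-neuron kit lands in both the upper body and full body ranges, which fits the note in the spec table that the higher counts are what add full finger motion.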

Although it seems like the neurons could be placed willy-nilly anywhere on the body, Noitom has determined that the ideal placement is on parts of the body that don’t move much. And by “move,” they really mean “flex.” That is, the upper leg sensor is mounted on the side of the thigh, instead of the top, where your quadriceps--a large muscle--would be constantly flexing. The lower leg sensor is mounted on the shin, not the meaty calf. And so on.

The idea is that you want the incoming sensor data to be as “clean” as possible, and it helps to keep the neurons wrapped as tightly to the body as you can. The Axis Neuron software does have tools to smooth out janky or jittery data, but it’s still ideal to pipe in the cleanest data possible.

Perception Neuron’s performance is not affected by the distance between neurons, which means you don’t have to worry about losing accuracy or performance if the sensors are especially far apart or close together. The placement of the sensors on the body, not the distance between them, is what matters.

Calibrating

Throughout the setup process we were a part of, there were some flubs and dropped connections here and there, but overall getting the suit hooked up (physically and digitally) looked to be a fairly easy, painless task. There are a few straps to wrap around the wearer, and the Axis Neuron software running on the PC recognized the suit right away.

Getting it calibrated was even simpler. Once the demonstrator had the suit connected to the PC and had Unity loaded up, configuring the suit was a quick, four-step process.

The wearer of the suit had to position his body in four poses:

- Steady Pose: You simply hold still.
- “A” Pose: Stand straight with your arms at your sides and your feet together.
- “T” Pose: Stand straight and extend your arms out, parallel with the ground.
- “S” Pose: From the “T” pose, bring your arms forward until your thumbs touch, then squat down.

You should always do the Steady pose first. Noitom also noted that you should recalibrate every two to four hours.

He had to hold each pose for a few seconds, and the software would beep at him to let him know the appropriate data had been received. And that was it. Immediately upon completion of the poses, you could see the subject’s physical movements represented on the screen by a faceless, animated humanoid dummy that mimicked his every movement.

As you can see in the video, latency does not seem to be much of an issue. It’s visible, but unless your mocap performance requires especially quick reactions, it shouldn’t significantly hamper any experience. Noitom said that the wired connection introduces 8ms latency from the sensor to the PC, and the wireless connection has 23ms latency.

(Depending on the PC you’re using, you may see additional latency. The Noitom crew showed us the setup and demo on a well-appointed but two-year-old gaming laptop, so you can infer from that what sort of specs you might need.)
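To put those latency figures in perspective, it helps to compare them against the time a single captured frame takes. The sketch below is back-of-the-envelope arithmetic rather than anything from Noitom's software: it uses the 8ms and 23ms figures quoted above and the 60fps and 120fps output rates from the spec table, and the helper names are our own. It covers only sensor-to-PC latency, so whatever the PC adds comes on top.

```python
def frame_period_ms(fps: float) -> float:
    """Duration of one captured frame, in milliseconds."""
    return 1000.0 / fps


def latency_in_frames(latency_ms: float, fps: float) -> float:
    """Express a latency as a number of frames at the given output rate."""
    return latency_ms / frame_period_ms(fps)


if __name__ == "__main__":
    # Latency figures quoted by Noitom (sensor to PC).
    connections = {"wired": 8.0, "wireless": 23.0}
    # Output rates from the spec table: 60fps (32 neurons), 120fps (18 neurons).
    for label, latency_ms in connections.items():
        for fps in (60, 120):
            frames = latency_in_frames(latency_ms, fps)
            print(f"{label:8s} @ {fps:3d}fps: {frames:.2f} frames behind")
```

In other words, the wired link stays within a single frame at either output rate, while the wireless link trails by somewhere between one and three frames.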

Once you’re connected and calibrated, you can perform virtually any body movement you can imagine, from sitting at a desk twiddling your thumbs to doing cartwheels around the room. (That is, as long as you’re connected wirelessly. Don’t do cartwheels while tethered to your PC.)

Now What? Creation, Not Consumption

The Perception Neuron is most definitely a compelling innovation, but where some people, I think, seem to get tripped up is understanding what to make of it. When it comes to the world of immersive technology, people are accustomed to learning about a Thing that does Things that you can consume. For example, the HTC Vive, Oculus Rift, and OSVR let you enjoy VR experiences. You buy the HMD, connect to a PC, and go grab any one of hundreds of games and apps that developers have created.

That’s not the Perception Neuron; it’s not primarily for consumption, it’s for creation.

As we saw with Noitom’s Cloudhead Games collaboration, the significant value of a $1,500 mocap suit that’s geared to work with software such as Unity, Unreal, MotionBuilder, and more, is that it gives developers and researchers a powerful, inexpensive, and flexible tool. With Unity and Unreal, you can use the Perception Neuron to make experiences for the Oculus Rift and HTC Vive. With the Neuron SDK Reader, you can make your own plugins for data streaming, too.

A small gaming outfit using it to make performance capture a more engaging yet easier process is one thing, but there’s a multitude of other potential applications. In other settings, such as universities, it can be used for actual research.

The video above was shot at the University of Missouri's Immersive Visualization Lab (aka, iLab). Headed by Dr. Bimal Balakrishnan, the professors and students in the iLab have been using mocap technology to conduct research concerning, among other things, how professionals move around in their environments. They’re benchmarking how, for example, seasoned medical professionals move about the spaces in which they work and comparing it to how students perform the same tasks. Their work also looks at the effectiveness of virtual training tools for professional workplaces, evaluating ergonomics and efficiency.

Until they got their hands on a Perception Neuron, they’d been using an 18-camera setup and a semi-permanent room installation. This setup, though powerful, has some shortcomings that the Perception Neuron obviates. For example, the 18-camera setup does a better job of tracking an individual’s body in space, but the Perception Neuron’s glove is superior for capturing smaller, more precise hand movements.

James Hopfenblatt, a Graduate Research Assistant in Architectural Studies who works in the iLab, elaborated on some of the other advantages of the Perception Neuron mocap suit. “We’re impressed with how fast it is to set up the system; it’s basically plug and go,” he said. “It’s much more convenient than the 18-camera setup and the big tripods.” He also noted that although the Perception Neuron is not as precise, it’s eminently useful for a number of applications, such as interacting with virtual objects in VR and AR environments.

Being cameraless, he said, is also an advantage, because that means you can use the Perception Neuron with shiny objects, in poorly-lit rooms, and outdoors.

Wait, outdoors? Yes, outdoors. Without a PC. “You can actually just strap on the little [hub] and put in a [micro]SD card and go run outside, and it will store the data,” said Hopfenblatt. “Then you can put the data into the custom software and manipulate it as you [normally] would.”

Remember, the Perception Neuron has a battery pack. With that and a microSD card in the hub, the suit can entirely generate and record its own data. Hopfenblatt further pointed out that the Perception Neuron doesn’t produce a tremendous amount of data, so there aren’t many storage issues. For example, one file he had on hand was from 20-30 seconds of motion capture, and it was less than 1MB in size.
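For a rough sense of what that means for a microSD card, you can scale Hopfenblatt's example up to a full session. The numbers below are an upper-bound estimate under loud assumptions: that file size grows linearly with recording time, and that the clip was a full 1MB (it was actually smaller). The function name is ours, and the three-to-four-hour window is simply the battery life Noitom quoted.

```python
def max_mb_per_hour(file_mb: float, clip_seconds: float) -> float:
    """Upper bound on MB recorded per hour, assuming size scales
    linearly with duration (an assumption, not a measured figure)."""
    return file_mb * 3600 / clip_seconds


if __name__ == "__main__":
    # The example clip: under 1MB for 20-30 seconds of capture.
    worst_case = max_mb_per_hour(1.0, 20)  # if the clip was only 20s long
    best_case = max_mb_per_hour(1.0, 30)   # if the clip was a full 30s
    print(f"Per hour: under {best_case:.0f}-{worst_case:.0f} MB")

    # Battery life quoted by Noitom: about three or four hours.
    for hours in (3, 4):
        print(f"{hours}h session: under {worst_case * hours:.0f} MB")
```

Even on the pessimistic end, a full battery's worth of recording would come in under a gigabyte, which is consistent with Hopfenblatt's point that storage isn't much of a concern.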

The one downside to a free-roaming, outdoors Perception Neuron wearer is that the suit doesn’t offer much in the way of positional tracking. So if, say, you run a mile, the suit won’t track anything pertaining to the distance--just what your body was doing and what it looked like while you were running.

The Nitty Gritty

Noitom sells four versions of the Perception Neuron. Two are for anyone (devs, consumers, etc.), and two are solely for academics. The only differences between the consumer and academic “Aluminum” editions are the prices. The full, 32-neuron kits cost $1,500 (consumer) and $1,200 (academic), and the 18-neuron versions are $1,000 and $800, respectively.

You can only get them directly from Noitom’s online store, but that store also sells extra parts. For example, you can get a hand assembly for $100, a single neuron for $30, and so on. There are some bundles that include software, too.

|  | Suit Specifications |
|---|---|
| Number of Neurons | 32 or 18 |
| Straps | Two gloves with finger straps; arm straps; six (3 x 2) leg straps; upper body straps (incl. back and hip nodes) |
| Misc. | Hub; extra Pogo and prop cables; soft carrying case; pouches for component storage |
| Transmission | Wired (USB 2.0), 8ms latency; wireless (Wi-Fi 2.4GHz), 23ms latency |
| Power | External USB battery, 5V/2A |
| Working Modes | Single Arm: 3-11 Neurons; Upper Body: 11-25 Neurons; Full Body: 18-32 Neurons (higher numbers include full finger motion) |
| Output Data Format | .RAW (raw data for playing and editing); .BVH (Biovision Hierarchical Data); .FBX (Filmbox); export to .FBX for MotionBuilder, Maya, Reallusion, and more |
| Included Software | SDK with C/C++ API and integrations into Unity3D; AXIS Neuron |
| Available Plugins | Unreal plugins; Unity SDK; MotionBuilder plugin; Neuron SDK Reader (users can create their own plugins) |

|  | Hub Specifications |
|---|---|
| Size | 59 x 41 x 23mm |
| Max. Supported Neurons | 32 |
| Max. Output Rate | 60fps with 32 Neurons, 120fps with 18 Neurons |
| Onboard Recording | MicroSD |
| Connectivity | Dual-mode 802.11b/g/n Wi-Fi (only 2.4GHz is used) |
| Misc. | Open, WEP, WPA/WPA2-PS encryption; SoftAP or Station modes supported |

|  | Individual Neuron Specifications |
|---|---|
| Size | 12.5 x 13.1 x 4.3mm |
| Dynamic Range | 360 degrees |
| Accelerometer Range | ±16g/±8g adaptive |
| Gyroscope Range | ±2000 dps |
| Resolution | 0.02 degrees |
| Static Accuracy | Roll < 0.5 deg; pitch < 0.5 deg; yaw angle < 1.5 deg |

|  | Price |
|---|---|
| 32 Neuron Aluminum Edition | $1,499 |
| 18 Neuron Aluminum Edition | $999 |
| 32 Neuron Aluminum Academic | $1,199 |
| 18 Neuron Aluminum Academic | $799 |
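Of the export formats listed in the specifications, .BVH is the easiest to poke at, because it's plain text: a `HIERARCHY` section describing the skeleton, followed by a `MOTION` section that begins with `Frames:` and `Frame Time:` lines. As a minimal sketch, here is how you might read the frame count and capture rate from such a file. The sample below is hand-written for illustration and is not actual Axis Neuron output, and `read_motion_header` is our own helper, not part of Noitom's SDK.

```python
# Hand-written, minimal BVH text for illustration only;
# real exports contain a full skeleton and many more channels.
SAMPLE_BVH = """\
HIERARCHY
ROOT Hips
{
  OFFSET 0.0 0.0 0.0
  CHANNELS 6 Xposition Yposition Zposition Zrotation Xrotation Yrotation
  End Site
  {
    OFFSET 0.0 10.0 0.0
  }
}
MOTION
Frames: 2
Frame Time: 0.016667
0.0 90.0 0.0 0.0 0.0 0.0
0.0 90.5 0.0 1.0 0.0 0.0
"""


def read_motion_header(bvh_text: str) -> tuple[int, float]:
    """Return (frame_count, frame_time_seconds) from BVH text."""
    frames = None
    frame_time = None
    in_motion = False
    for line in bvh_text.splitlines():
        stripped = line.strip()
        if stripped == "MOTION":
            in_motion = True
        elif in_motion and stripped.startswith("Frames:"):
            frames = int(stripped.split(":", 1)[1])
        elif in_motion and stripped.startswith("Frame Time:"):
            frame_time = float(stripped.split(":", 1)[1])
        if frames is not None and frame_time is not None:
            return frames, frame_time
    raise ValueError("no complete MOTION header found")


if __name__ == "__main__":
    frames, frame_time = read_motion_header(SAMPLE_BVH)
    print(f"{frames} frames at roughly {round(1.0 / frame_time)}fps")
```

The same header is a quick way to sanity-check that a recording was captured at the output rate you expected before you pull it into an animation package.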

Seth Colaner previously served as News Director at Tom's Hardware. He covered technology news, focusing on keyboards, virtual reality, and wearables.

-

DrBackwater: Your 1 year and 4 months late.

Noitcom Kickstartered last october their nouron for $499 32 bundle world wide for backing their cause, sadly their 32 bundles a rip now and getting replacements are pain. They will not include a bundle under $999 or allow customisation rather infact they've designed their store front to minimise control on what you buy.

If you were to sell them on ebay they would send ebay cease and decist notice.

Theres better mocaps arriving from the sweds that launched also on kickstarter much much cheaper for under a $1000

Noitcoms greedy korean business waiting for competition.

-

scolaner (quoting comment 18769097): Theres better mocaps arriving from the sweds that launched also on kickstarter much much cheaper for under a $1000

Would love to check that out. Link?