Intel’s 24-Core, 14-Drive Modular Server Reviewed

Modular Server Control

Intel's Modular Server Control is a Web-based administration interface that runs on the MFSYS25's management module. It gives the administrator a well-rounded set of tools to manage, configure, and monitor the modular server's modules and services, including the compute modules, networking, storage, and power.

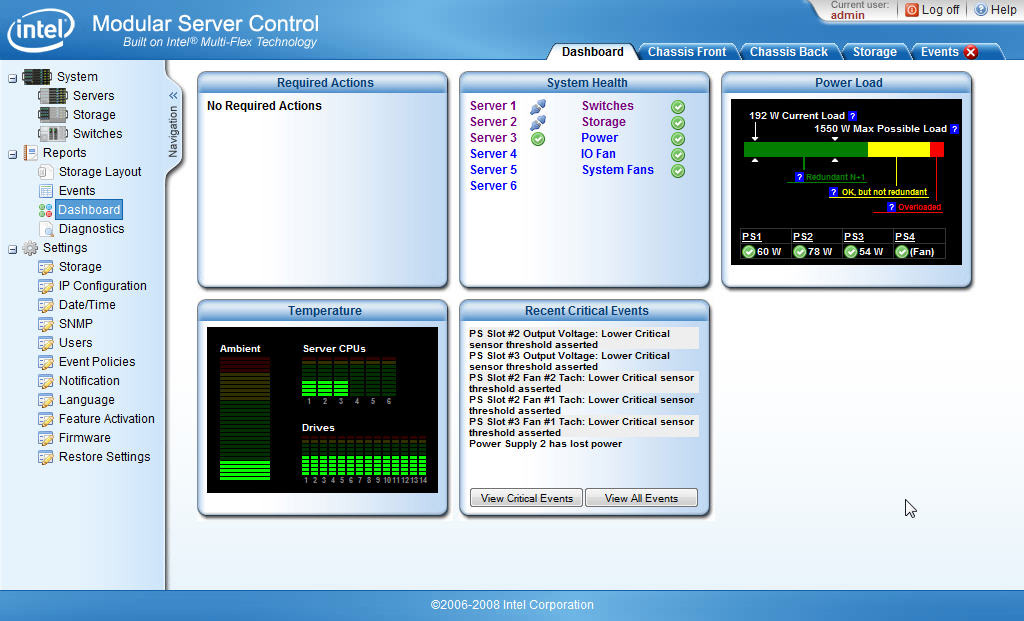

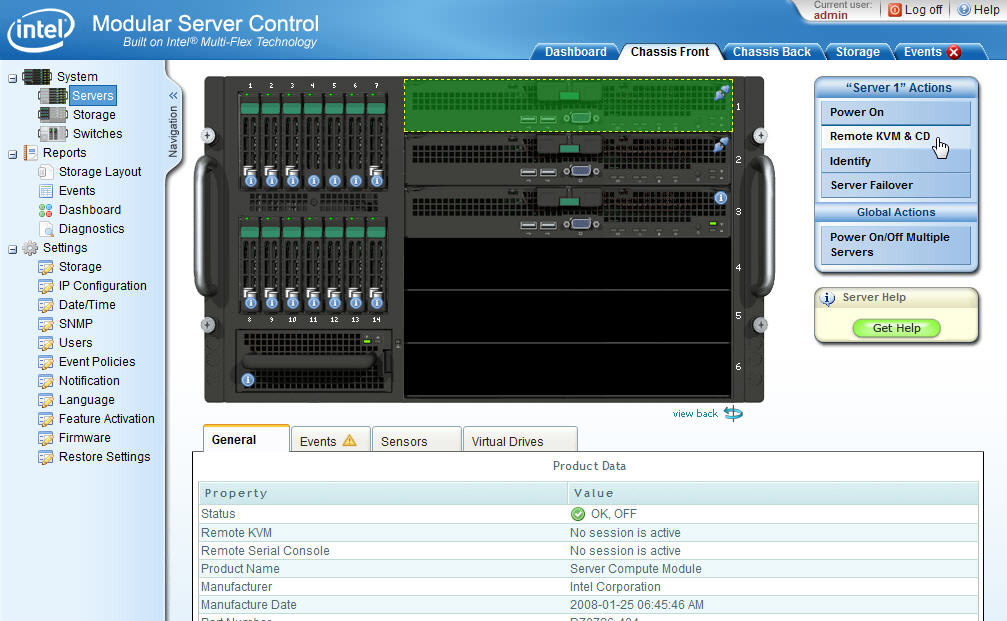

After logging into Intel's Modular Server Control, you’re presented with a straightforward interface split into two main panes.

On the left-hand side is a Navigation menu that provides shortcuts to the servers, storage, and switch interfaces. It’s from these views that the admin can power on the compute modules, create virtual drives, or configure the external ports on the Ethernet switch module. You can also access reports that provide storage layouts, system events, and system diagnostics. The final set of objects in the Navigation menu covers the system settings needed to set up the modular server, including the network configuration for the management module, firmware updating tools, and, as mentioned before, additional feature activation.

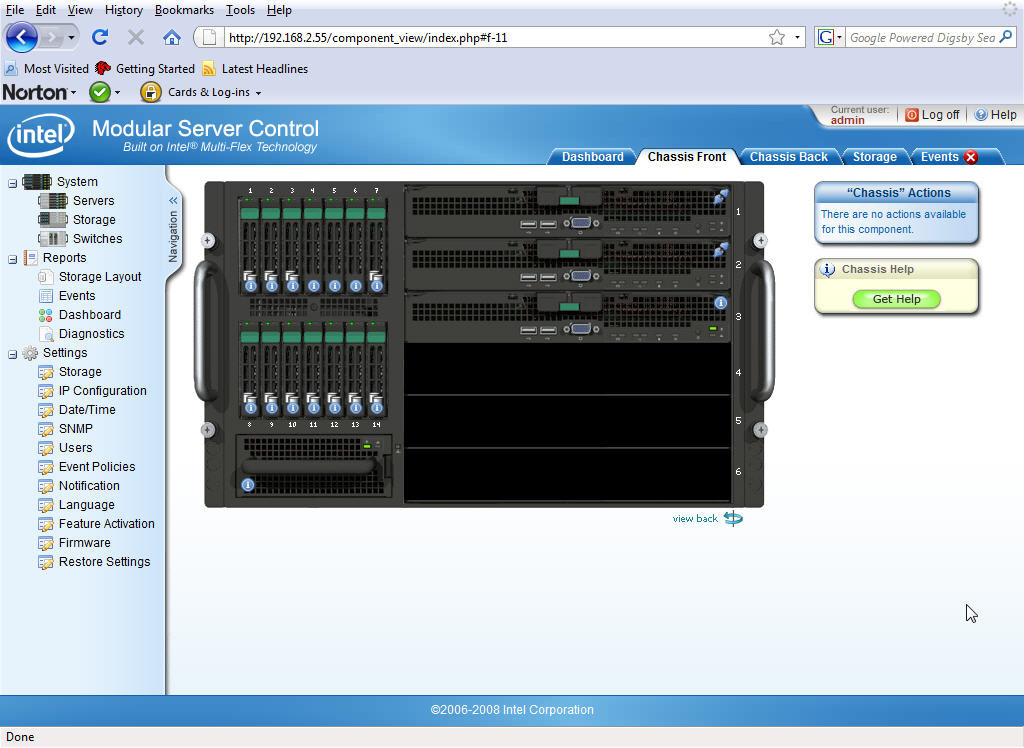

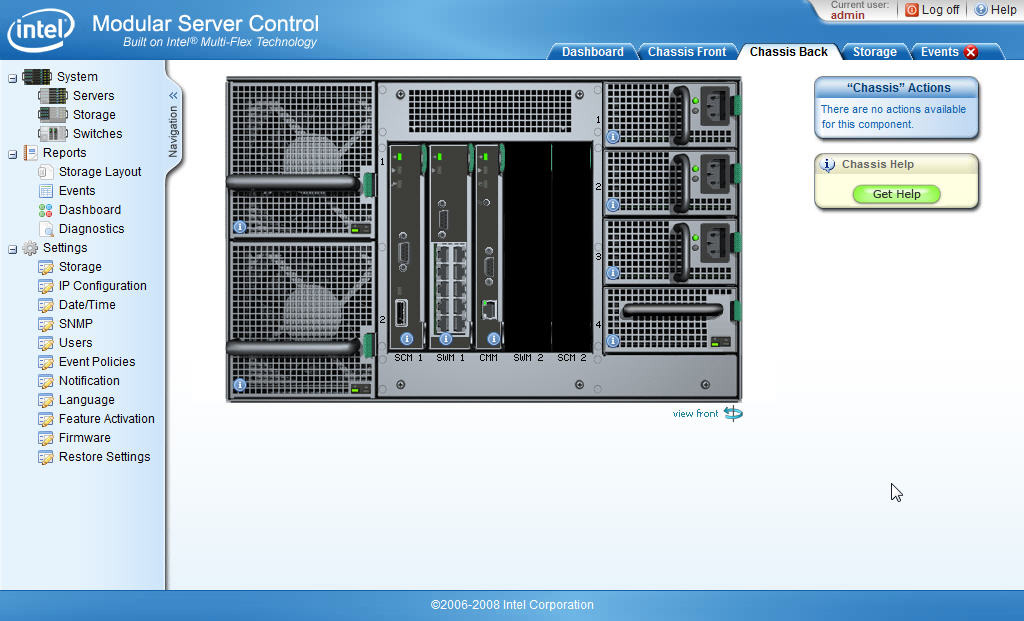

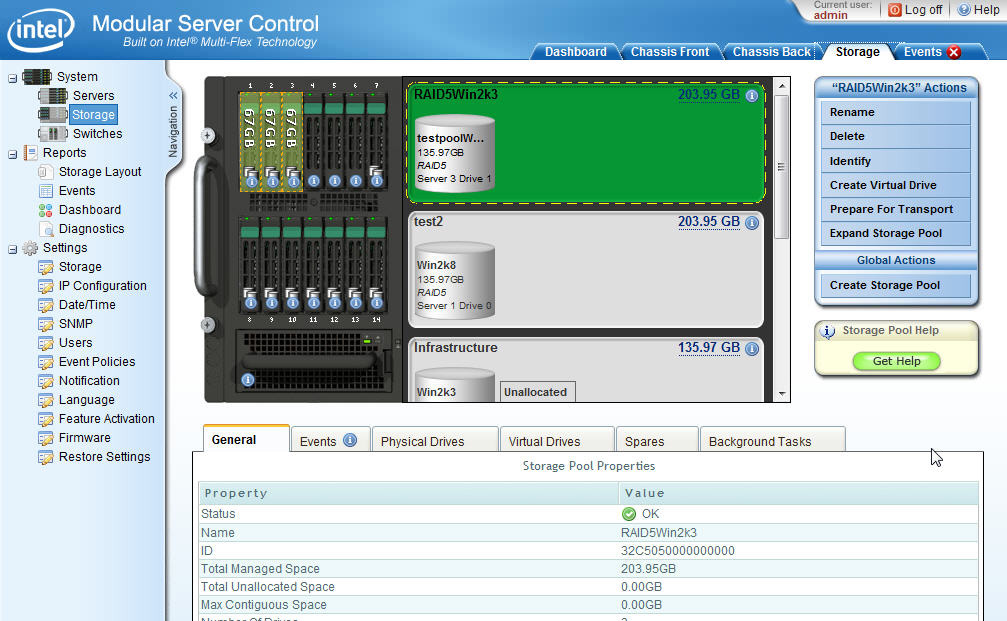

On the right-hand side are tabbed shortcuts to many of the items in the Navigation menu. By default, the first tab that comes up after logging into Modular Server Control is the Dashboard. The Dashboard provides a general overview of the MFSYS25 and gives the admin a quick look at the current state of the overall system. Environmental diagnostics for power and temperature are presented in an easy-to-read graphical format, alongside the system’s general health and a quick view of critical system events. Three other tabs offer graphical tools that let you look at the machine as if you were standing right in front of it. The Chassis Front tab shows all the installed compute modules, disk drives, and their corresponding lights, while the Chassis Back tab shows all the rear-mounted components and their current states. The Storage tab is just as graphical as the other two, providing a visual picture of the storage configurations running in the MFSYS25.

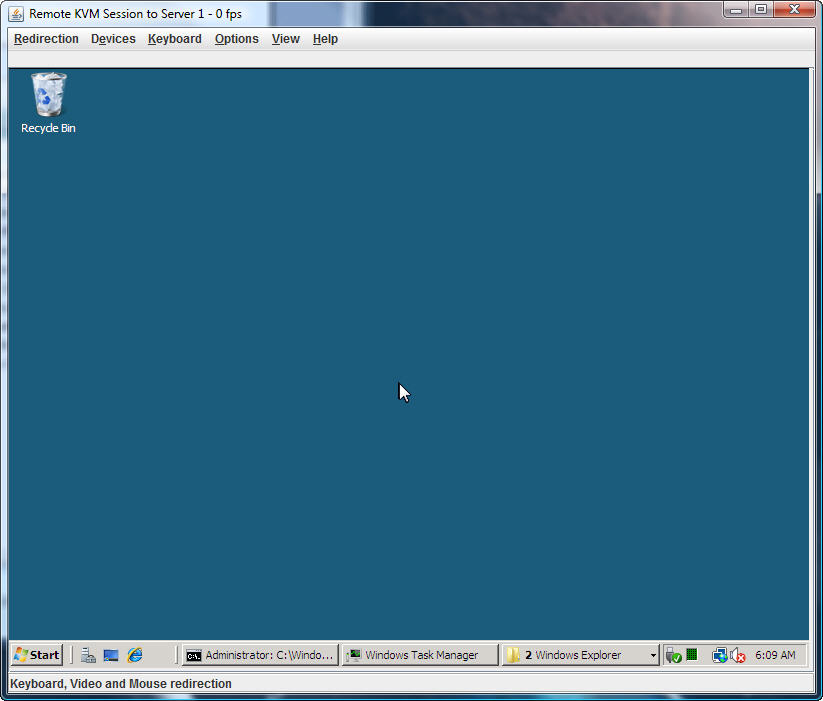

The particular feature that stands out most for me is the built-in Remote KVM (keyboard/video/mouse) and CD feature. Intel’s inclusion of a built-in KVM is great because you don’t have to go out and buy a separate device to access the compute modules’ keyboard, video, and mouse controls.

Located in the Servers section of Modular Server Control, Intel’s KVM lets you work on your servers as though they were right in front of you. You can also use the Remote CD feature to load ISO files onto the virtual CD-ROM and install operating systems from your desk. By simply launching KVM, you can control your remote servers using your desktop mouse, keyboard, and monitor over the same browser connection.

While RDP is a great tool for Windows users, you still don’t get the full functionality you would with direct console access. If the server can’t talk over the network anymore, RDP won’t connect, and you need to wait for a solid network connection before you can even get back on the machine. With KVM, I get to see what comes up during the boot process. With Linux, for example, I like to review the startup messages and catch any red flags as the operating system starts up. Without the KVM, a blind restart of the server would hide information that the admin would see when working on a problem server locally, defeating the purpose of remote administration.

Another feature worth mentioning is the Server Failover function, which “moves” a compute module’s assigned virtual disks from one server to another. Whether or not the source server is running, a couple of clicks transfers its drives to a different destination server in the chassis. Server Failover can come in handy for repairs, especially if you need to replace faulty hardware on a compute module. I’ve successfully failed over storage from one server to another both with the servers running and with them powered off.

However, I got a warning message recommending that the source server be powered off first. The help file explains that processes running in the operating system may not tolerate the failover and could destabilize the running machine.

kevikom This is not a new concept. HP & IBM already have Blade servers. HP has one that is 6U and is modular. You can put up to 64 cores in it. Maybe Tom's could compare all of the blade chassis.

sepuko Do the blades in IBM's and HP's solutions have to carry hard drives to operate? Or are you talking about a certain model? I'm lost in your general comparison: "They are not new cause those guys have had something similar/the concept is old."

Why isn't the poor network performance addressed as a con? No GigE interface should be producing results at FastE levels, ever.

nukemaster So, when you gonna start folding on it :p

Did you contact Intel about that network thing? Their network cards are normally top end. That has to be a bug.

You should have tried to render 3D images on it. It should be able to flex some muscles there.

MonsterCookie Now frankly, this is NOT a computational server, and I would bet 30% of the price of this thing that the product will be way overpriced and one could build the same thing from normal 1U servers, like the Supermicro 1U Twin.

The nodes themselves are fine, because the CPUs are fast. The problem is the built-in Gigabit LAN, which is just too slow (neither the throughput nor the latency of the GLAN was meant for these purposes).

In a real computational server the CPUs should be directly interconnected with something like HyperTransport, or the separate nodes should communicate through built-in InfiniBand cards. The MINIMUM nowadays for a computational cluster would be built-in 10G LAN, and some software tool which can reduce the TCP/IP overhead and decrease the latency.

Unless it's a typo, they benchmarked older AMD Opterons. The AMD Opteron 200s are based off the 939 socket (I think), which is DDR1 ECC, so no way would it stack up to the Intel.

The server could be used as an Oracle RAC cluster. But as noted, you really want better interconnects than 1Gb Ethernet. And I suspect from the setup it makes a fair VM engine.