CPU Overclocking Guide: How (and Why) to Tweak Your Processor

Learn the pros and cons of overclocking your CPU, the physics behind changing your clock rate and the basics of overclocking an Intel or AMD processor.

Introduction

Overclocking was once the domain of enthusiasts with higher-than-average hardware know-how and a bit of derring-do. The community was made up of benchmark competitors keen on pushing CPU frequency envelopes, gamers trying to squeeze the last drop of performance out of an aging rig, or simply power users who wanted to chart the undocumented and unadvertised limits of their system. A classic example of the sort of spirit around overclocking/modding was the “DIY Cooking Oil PC” we showcased here at Tom’s Hardware in 2006.

Times have changed. As with many other niche “tweaks” to system performance like liquid cooling, vendors have embraced overclocking, avidly promoting the capabilities of their hardware, providing software and firmware tools to make overclocking far easier, and, for a premium price, providing pre-built, overclocked systems with specifications that would have made many of us swoon in the 2000s.

The mainstream adoption of overclocking has also been pushed by a plethora of new applications: not just gaming, but cryptocurrency mining and distributed scientific computing such as BOINC and protein folding. And while the actual process of overclocking has been vastly simplified in recent years, it is not a blind one. Often, overclocking is a matter of looking at a system build as a whole and eliminating bottlenecks, not just pushing one component to its limit.

For example, you can run some Core i7-3770Ks at more than 5.1 GHz (expect a voltage setting of around 1.45V), but if the system is being used for scientific computing (or any other application that requires manipulating large data sets), memory data rates may become your performance bottleneck.

We focus on CPUs in this article, but both system memory and graphics processors are also overclockable. And many beginner-enthusiasts may realize that instead of overclocking the processor for better performance, they can simply upgrade the system’s cooling to prevent the processor’s built-in thermal throttling from kicking in under heavy load.

Despite recent shifts in hardware capabilities, the core concept behind overclocking remains the same. Components with a clock—an oscillator—have a margin of performance (frequency) that we refer to as headroom, which is available above and beyond the advertised default settings. Some of the headroom is there because of engineered safety margins for the hardware, based on the thermal performance and available voltage constraints of a nominal system. That is, a mass-market component must not put out so much heat that only the top 5% of PC builds have the cooling capability to handle it. This is called an “intentional guardband.” Extreme overclocking eats into the guardband as well as the conservatism in the design of the hardware and silicon fabrication process.

Another portion of the headroom exists because the stock value is a stable set-point determined during manufacturer testing. For example, a given CPU and system configuration may crash least often when it is operated at 2.5 GHz, which is below the available maximum.


Finally, manufacturers are loath to hand out what is essentially a free performance increase to overclockers without charging for it; Intel’s multiplier-locked and -unlocked CPUs are great examples, where identical chips are sold with and without artificial frequency caps, with a premium charged for the ability to overclock.

A component's frequency can be increased through various means, and higher component voltage is often used to provide the stronger signal needed at that higher frequency. This guide doesn’t discuss which processor is “better” for overclocking; every overclocker has a preference, and biases come into play when recommending CPUs. We do have an older AMD FX-8350 vs. Intel Core i7-3770K story that discusses bottlenecks, in case you want to compare the state of the top processors a few years ago, though.
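On multiplier-unlocked processors, one of the most common of those means is raising the CPU multiplier, since the core clock is approximately the base clock (BCLK) times the multiplier. The minimal sketch below shows that relationship, assuming the 100 MHz base clock of the Core i7-3770K's platform. The function name is hypothetical, and the numbers are illustrative rather than a tuning recommendation.

```python
def core_clock_ghz(bclk_mhz: float, multiplier: int) -> float:
    """Approximate core clock in GHz: base clock (MHz) times multiplier."""
    return bclk_mhz * multiplier / 1000.0


# Core i7-3770K at stock: 100 MHz base clock, 35x multiplier.
stock = core_clock_ghz(100.0, 35)

# The 5.1 GHz overclock mentioned earlier corresponds to a 51x multiplier
# at the same base clock (illustrative; not every chip will reach it).
overclocked = core_clock_ghz(100.0, 51)

print(f"stock: {stock:.1f} GHz, overclocked: {overclocked:.1f} GHz")
```

A multiplier-locked chip caps the second argument, which is why the unlocked parts command a premium.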

Heat, Stability, Damage, and Warranty Considerations

The gains from overclocking can be far more than a minor tweak: HWBot member just_nuke_em overclocked the relatively inexpensive quad-module AMD FX-8120 from its stock clock rate of 3.1 GHz to 8.3 GHz, more than 2.5 times AMD’s published specification (an increase of roughly 168%).
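As a quick check of the arithmetic behind that record, the short sketch below compares the 8.3 GHz result with the 3.1 GHz stock clock quoted above. The variable names are ours and purely illustrative.

```python
stock_ghz = 3.1    # AMD FX-8120 stock clock rate
record_ghz = 8.3   # clock rate reached in the record run

# Ratio of the record clock to the stock clock.
ratio = record_ghz / stock_ghz

# Percentage increase over stock (the ratio minus the original 100%).
increase_pct = (ratio - 1.0) * 100.0

print(f"{ratio:.2f}x stock, a {increase_pct:.0f}% increase")
```

In other words, the record clock is about 268% of the stock value, which works out to an increase of about 168% over it.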

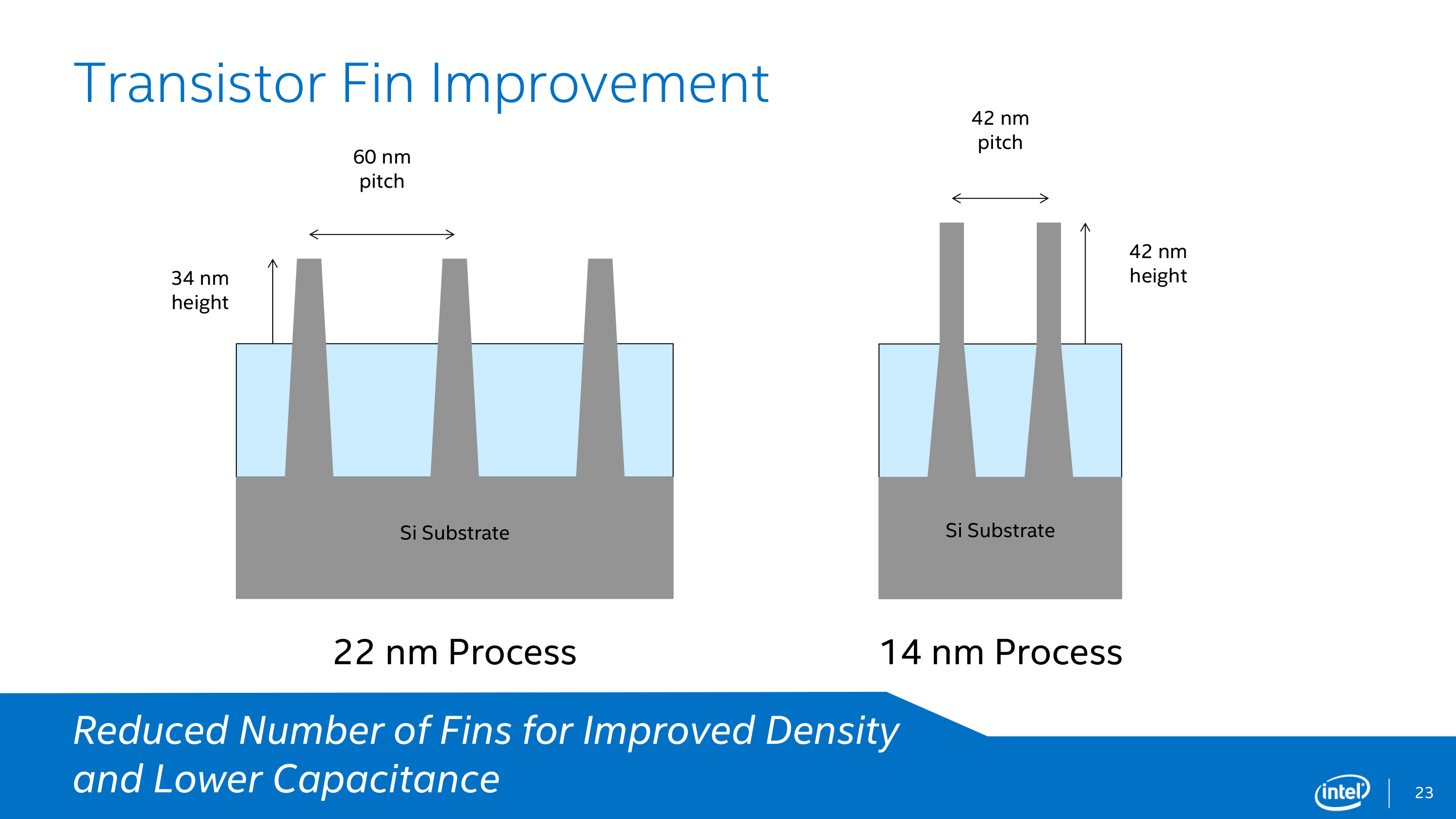

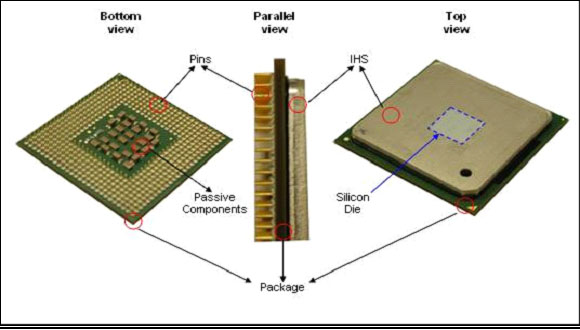

While most overclocks will be more modest than this world record, any performance boost comes with some limits imposed by physics. As a chip’s clock rate and voltage are increased, the waste heat output of the system also increases quickly, and this heat has to be removed somehow. Often, the cooling capacity of a build will max out long before the component’s theoretical maximum frequency. And CPU cooling will continue to become less efficient, not more, as time passes, because each generation of CPU has a higher transistor density than the one before. Intel went from the 45nm Nehalem die in 2008 to a 14nm die in Skylake in 2015, and Cannon Lake (slated for release in 2017) is going to be built on a 10nm process. AMD follows a similar progression.

While the transistor count tends to increase with each new architecture, die size does not, making it much more difficult for conventional cooling solutions to keep up with the rate at which thermal energy is generated. In fact, as dies get smaller, the total contact area between the CPU and its heat spreader drops, making cooling less efficient. All of this makes current chips far more prone to “hot spots.” Of course, as you increase voltage to (hopefully) stabilize more aggressive overclocks, power consumption rises very quickly. Core temperatures can jump even for small, incremental frequency boosts.
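To a first approximation, the dynamic (switching) power of a CMOS chip scales with the square of its voltage times its frequency, which is why power climbs so steeply once voltage rises. The sketch below applies that rule of thumb. It is a standard textbook approximation and not a figure from our testing, it ignores leakage power, and the clock and voltage values are hypothetical.

```python
def relative_dynamic_power(f_stock_ghz: float, v_stock: float,
                           f_oc_ghz: float, v_oc: float) -> float:
    """Overclocked-to-stock dynamic power ratio, assuming P ~ C * V^2 * f.

    The switched capacitance C is taken to be unchanged, so it cancels out.
    """
    return (v_oc / v_stock) ** 2 * (f_oc_ghz / f_stock_ghz)


# Hypothetical example: 3.5 GHz at 1.10 V stock vs. 4.5 GHz at 1.30 V.
power_ratio = relative_dynamic_power(3.5, 1.10, 4.5, 1.30)
freq_ratio = 4.5 / 3.5

print(f"frequency: {freq_ratio:.2f}x, estimated dynamic power: {power_ratio:.2f}x")
```

Under these assumptions, a frequency gain of about 29% costs roughly 80% more dynamic power, all of which has to be removed as heat.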

System stability is often another victim of overclocking. Enthusiasts sometimes have to live with more system crashes and less consistent performance. That's not to say every overclocked system is less stable than stock; many overclockers have reported finding new and better operating points at higher-than-stock clock rates. However, CPUs operating beyond their factory specifications tend to have shorter lifespans due to the stresses imposed on them.

Causing damage and voiding warranties are two oft-cited reasons that people are hesitant about overclocking. Damaging components due to heat or voltage overloads was easy in the old days, and it’s still possible now. But manufacturers incorporate a number of fail-safes, including thermal throttling, and the truth is that it is far more likely that a system will become unstable and crash before there is any permanent damage caused by a short-term test run.

Overclocking will, however, reduce the lifespan of system components; not just the processor, but motherboard, memory, and other parts that were stressed beyond their designed operating points alongside the overclocked processor. In electronics, the biggest source of wear is a phenomenon known as electromigration, whereby ions are slowly transferred from a structure to the adjacent structure under the force of electrical current. Major contributing factors include increased heat and voltage, but the limits of heat and voltage vary with different materials, different production technologies, and expected component lifespan. Thermal loads in particular tend to accelerate electromigration in ICs.
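One common empirical model for this effect is Black's equation, which estimates an interconnect's median time to failure as proportional to J^(-n) * exp(Ea / (k * T)), where J is current density and T is absolute temperature. The sketch below uses it only to compare two operating points. Black's equation is our choice of illustration, and the activation energy (0.7 eV) and exponent (n = 2) are assumed placeholder values, not measurements for any particular CPU.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333e-5  # Boltzmann constant in eV/K


def relative_lifetime(temp_a_c: float, temp_b_c: float,
                      j_ratio: float = 1.0,
                      ea_ev: float = 0.7, n: float = 2.0) -> float:
    """Estimated lifetime at point A divided by lifetime at point B.

    Uses Black's equation, MTTF ~ J**-n * exp(Ea / (k * T)).
    j_ratio is the current density at A divided by that at B.
    ea_ev and n are assumed, illustrative parameters.
    """
    t_a = temp_a_c + 273.15  # convert Celsius to kelvin
    t_b = temp_b_c + 273.15
    thermal = math.exp(ea_ev / BOLTZMANN_EV_PER_K * (1.0 / t_a - 1.0 / t_b))
    return j_ratio ** -n * thermal


# Hypothetical comparison: the same current density at 60 C versus 80 C.
cooler_vs_hotter = relative_lifetime(60.0, 80.0)
print(f"estimated lifetime at 60 C is {cooler_vs_hotter:.1f}x that at 80 C")
```

With these placeholder parameters, a 20-degree rise cuts the estimated lifetime to roughly a quarter, which illustrates why thermal load matters so much. Real figures depend on the materials and process involved.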

In terms of warranty, while GPU and motherboard manufacturers have become more overclocking-friendly lately, both Intel and AMD warranties are voided if the clock rate of their CPUs is changed at all. Intel does offer a “Performance Tuning Protection Plan” that will replace an eligible processor that fails while running outside of Intel’s specifications, but AMD will not cover a processor that was operated outside published specifications, even when AMD’s own Overdrive software is used for overclocking.


rush21hit Look at the voltage setting on those world records. Lots of LN2 involved here.

Speaking as a former user of Q6600 @3.0 (Corsair Hydro series were non-existent back then) and i7 920 @2.8. Small OC, I know. Since I want my motherboard not get fried.

Motherboard gets the most hammering in OC. Even the best of the best sometimes failed. Those capacitors can only hold so much. I just want to ensure it also last. Heck, even in normal use, motherboard tends to fail long before any other parts does.

Calculatron Ever since the articles on the "E" series of the FX-line-up, I've tried to take efficiency into account. I run a mild overclock of 4.0ghz with just under 1.2V on my FX-8320. I can achieve a 4.7ghz overclock, but I just don't think that the performance gains are worth all the extra heat and stress on the components.

Nuckles_56 There's a typo in the table of record speeds for the CPUs and the respective voltages, as there is no way that the i7 860 got to that speed at 1.152V

SinxarKnights Quoting Nuckles_56: "There's a typo in the table of record speeds for the CPUs and the respective voltages, as there is no way that the i7 860 got to that speed at 1.152V"

http://valid.x86.fr/xuxjnn look for yourself. I suspect there was an error reading the voltage but I do not know, CPU-Z calls it valid though.

TexelTechnologies Its not that complicated but then again I am always surprised by how dumb people are.

Murdock4321 Overclocking used to be pretty complicated and take some trial and error. With all these new processors and new bios', its really pretty easy. I'm still rocking an i7 930 @ 2.85ghz which is rock solid and has lasted me since 2010

jtd871 How about an underclocking/undervolting guide for those that want efficiency rather than performance?

anbello262 Well, isn't the procedure exactly the same, just lowering mult and volt instead of rising it? The stability concern (freq/volts) and the iterative methodology is the same, right?