EVGA GeForce GTX 1080 Ti FTW3 Gaming Review

Cooling

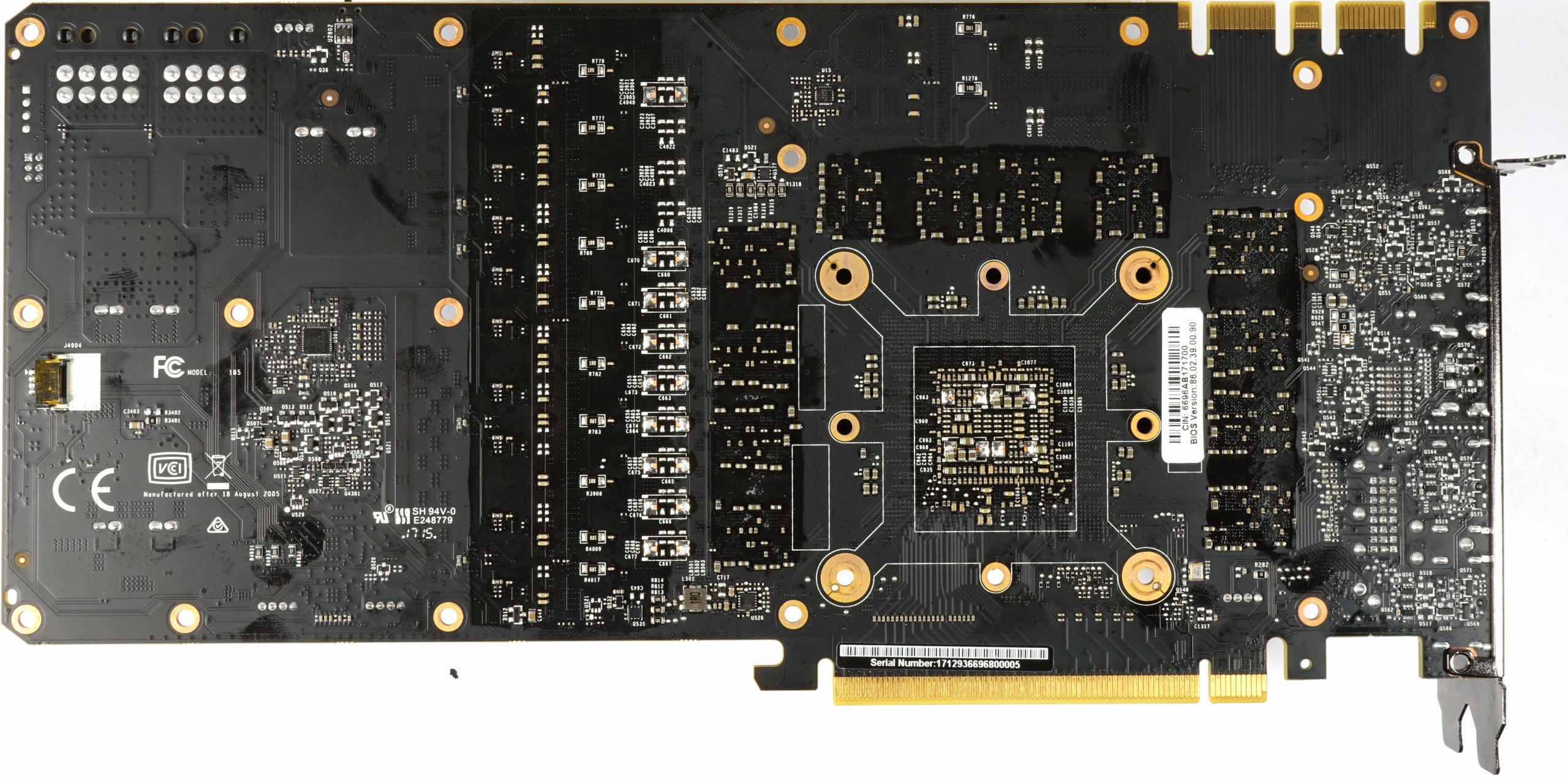

Around back, the board is unremarkable. Notice those dark spots where the thermal pads were glued on between the PCB and backplate.

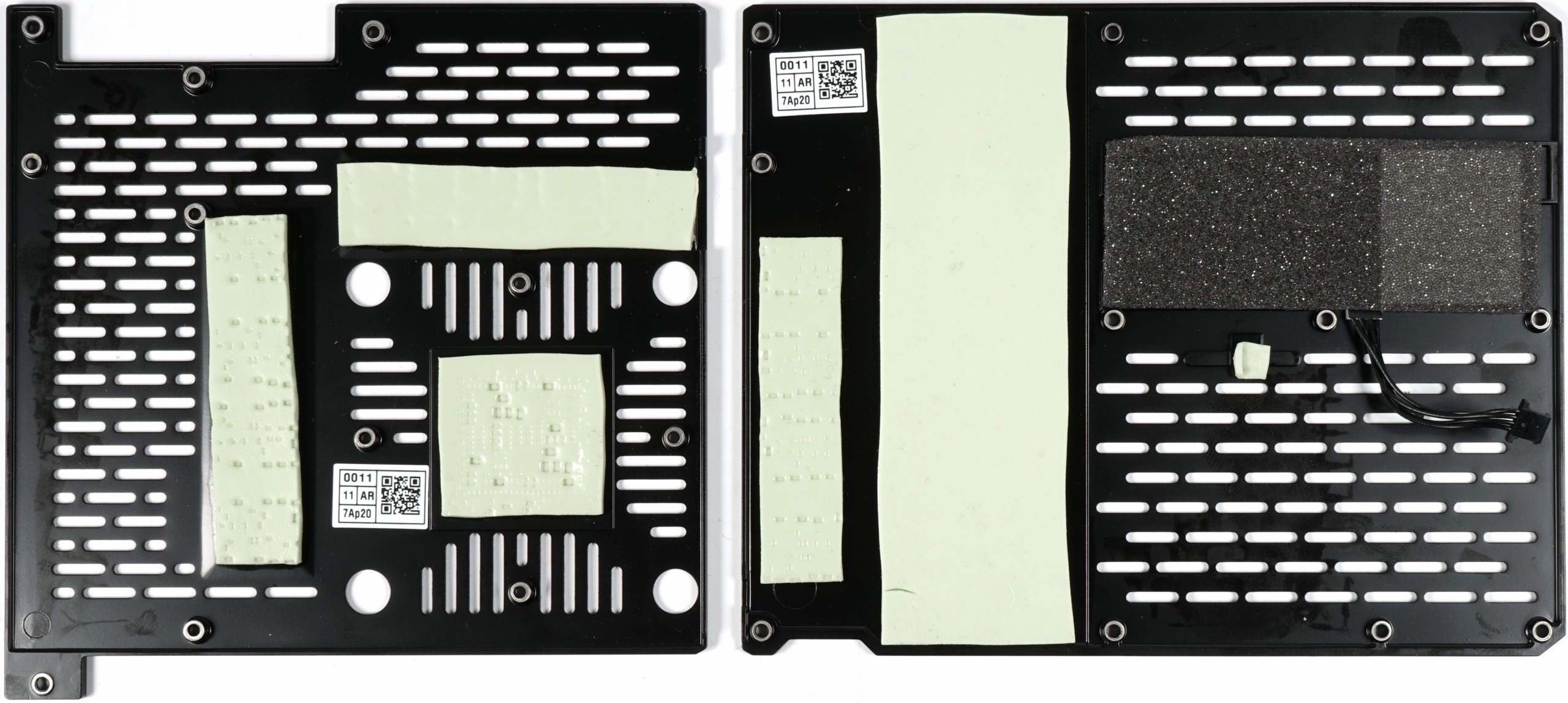

That two-part plate doesn't have any foil attached to the inside and it isn't there just for looks; the backplate helps cool the board passively. In addition, it adds rigidity to the sandwich structure, along with the other side's cooling plate between the PCB and main heat sink.

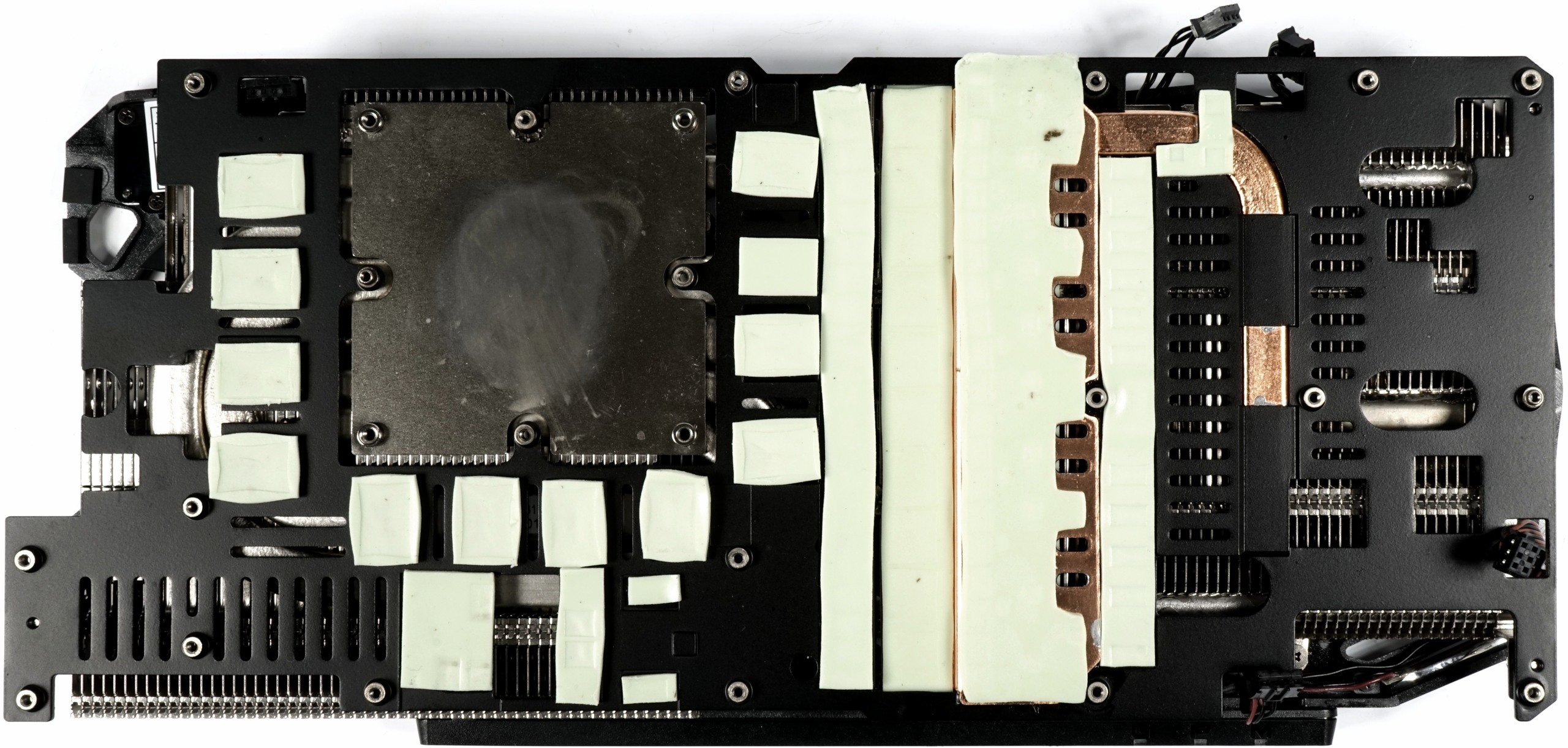

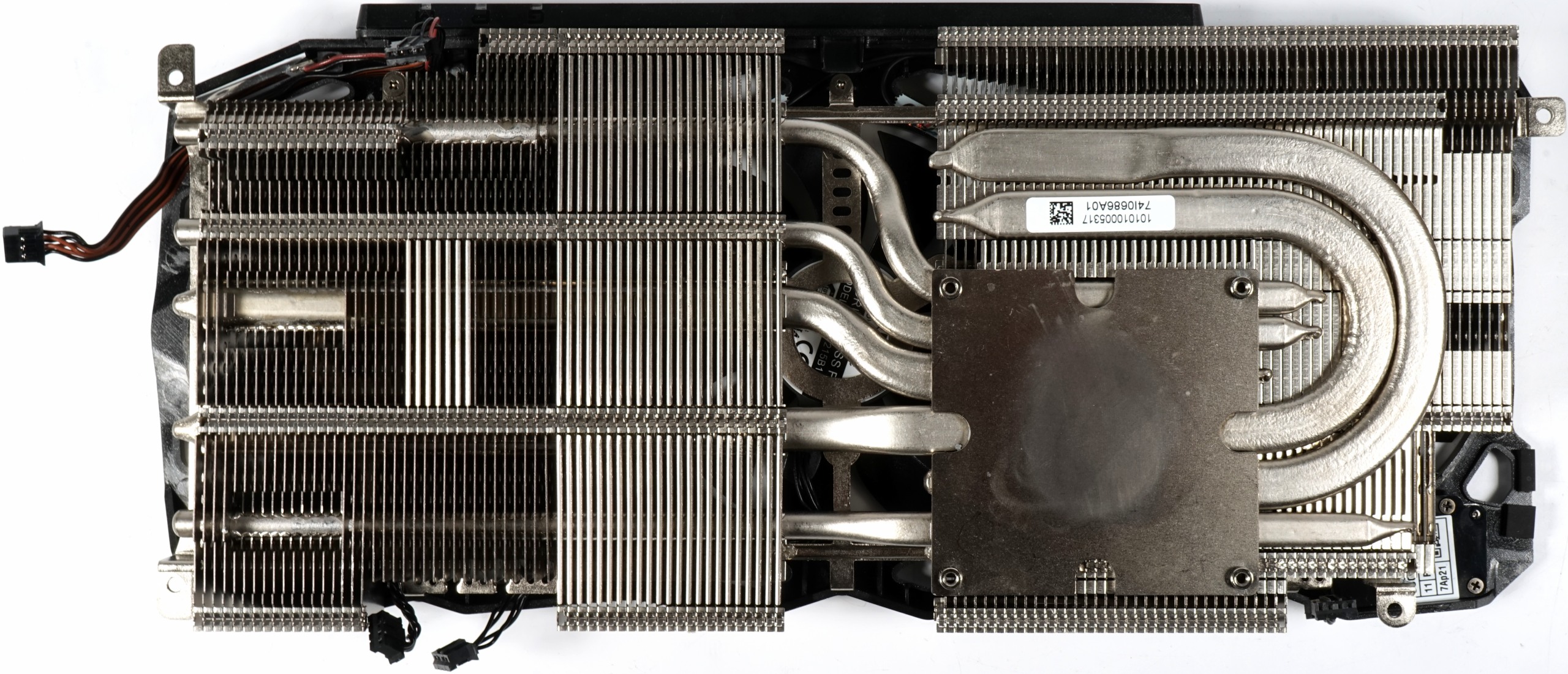

As mentioned, the main heat sink doesn't incorporate cooling for the voltage regulation circuitry. We also know that the power supply had to be spaced out due to its many control circuits. What we do find are a great many thermal pads.

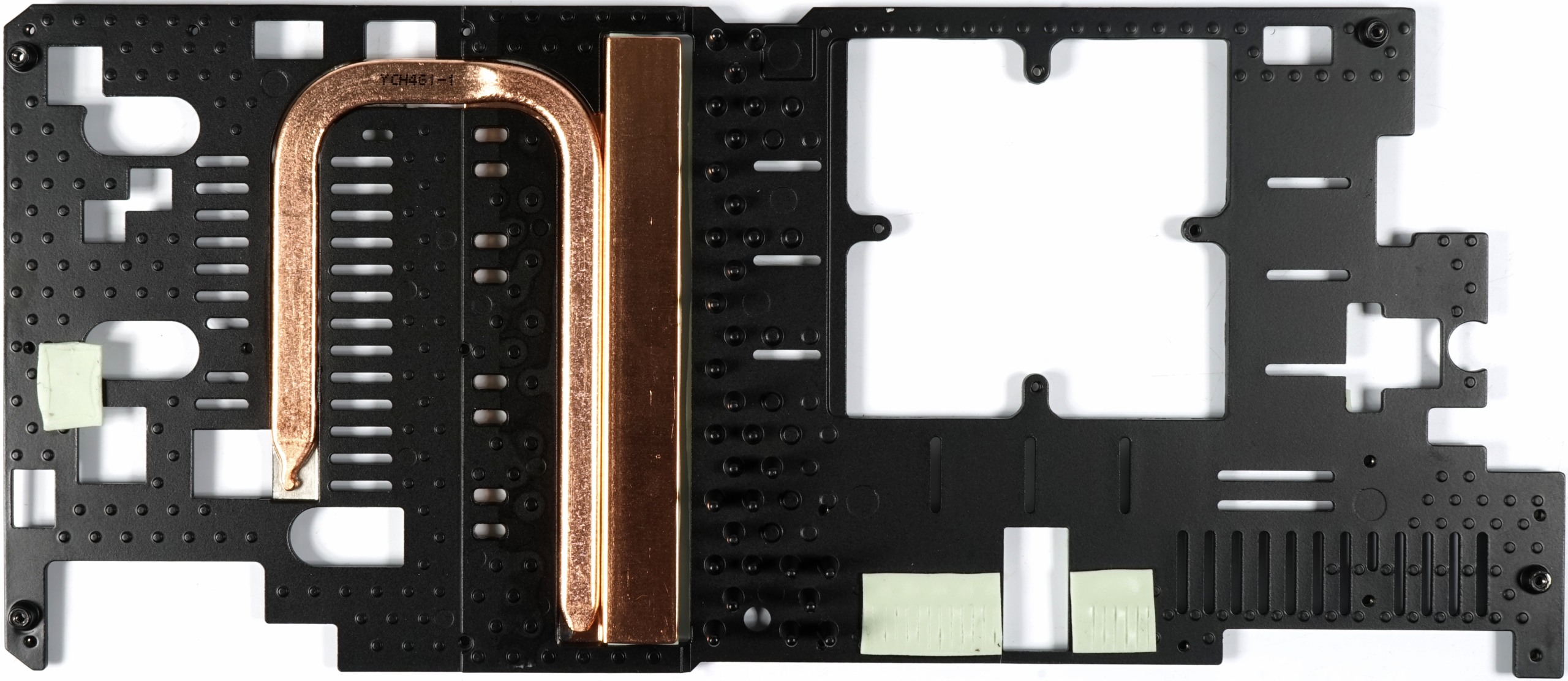

Except for the GPU and its heat sink, everything else is cooled by the sandwich-style cooling plate. This includes the memory modules and the GPU/memory power supplies. We also get another good look at the heat pipe and VRM heat sink, which touches the on-board components through thermal pads, while its back is connected to the cooler. This pipe helps combat the formation of a hot-spot, and it has another thermal pad that makes contact with fins on the top side.

As an experiment, we eliminated the heat pipe (by removing its thermal pad) and measured a temperature increase of up to 3 K on the center MOSFETs.
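Because that result is quoted in kelvin, it helps to remember that a temperature difference has the same magnitude in kelvin and in degrees Celsius. The short Python sketch below illustrates this with made-up readings; the 80°C and 83°C values are hypothetical placeholders, not measurements from this review.

```python
# Offset between the Celsius and kelvin scales.
KELVIN_OFFSET = 273.15


def celsius_to_kelvin(temp_c: float) -> float:
    """Convert an absolute temperature from degrees Celsius to kelvin."""
    return temp_c + KELVIN_OFFSET


def delta_in_kelvin(before_c: float, after_c: float) -> float:
    """Return the temperature rise in kelvin between two Celsius readings."""
    return celsius_to_kelvin(after_c) - celsius_to_kelvin(before_c)


# Hypothetical MOSFET readings, chosen only for illustration (not from the review).
with_heat_pipe_c = 80.0
without_heat_pipe_c = 83.0

rise_k = delta_in_kelvin(with_heat_pipe_c, without_heat_pipe_c)
rise_c = without_heat_pipe_c - with_heat_pipe_c

# The offset cancels out, so both differences have the same magnitude.
print(f"Rise: {rise_k:.1f} K, which is also {rise_c:.1f} degrees Celsius")
```

The scale offset cancels when two readings are subtracted, which is why a delta can be read in either unit without conversion.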

Again, the cooler is a true dual-slot solution. This naturally means it's subject to some surface area constraints not as prevalent on 2.5-slot cards. Nevertheless, EVGA managed to implement a 300W design fully capable of coping with GP102's waste heat, even in a closed case, so long as its three fans are running.

Three 8mm and three 6mm heat pipes consisting of a nickel-plated composite material are dedicated to the task of dissipating heat through the fin assembly. A nickel-plated sink holds the pressed-in heat pipes, as well as the cooler structure. Wherever the VRM heat sink's thermal pads connect, the surfaces form a 90° angle to create an additional, larger contact area.
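To put the two pipe sizes in perspective, the sketch below compares their cross-sections. It assumes the 8mm and 6mm figures are outer diameters of round pipes, which the text does not state. Cross-sectional area is also only a rough proxy, since real heat transport depends on wick structure, pipe length, and bends as well.

```python
import math


def circle_area_mm2(diameter_mm: float) -> float:
    """Area of a circle in square millimeters, given its diameter."""
    return math.pi * (diameter_mm / 2.0) ** 2


# Pipe counts from the review; treating the sizes as outer diameters
# of round pipes is an assumption made for this illustration.
pipe_counts = {8.0: 3, 6.0: 3}

total_area = sum(count * circle_area_mm2(d) for d, count in pipe_counts.items())
ratio = circle_area_mm2(8.0) / circle_area_mm2(6.0)

print(f"Combined outer cross-section: {total_area:.1f} mm^2")
print(f"One 8mm pipe vs. one 6mm pipe: {ratio:.2f}x the cross-section")
```

Under those assumptions, each 8mm pipe has roughly 1.8 times the cross-section of a 6mm pipe, so the three larger pipes account for most of the combined area.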

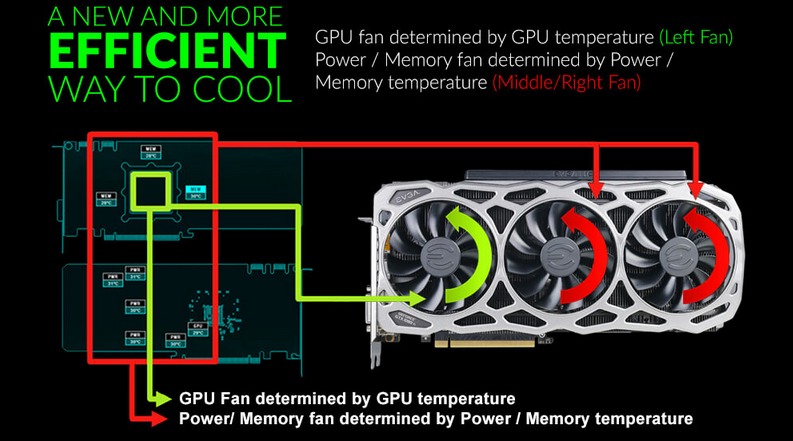

The card's three 85mm fans are equipped with 11 rotor blades each. Their rather steep angle suggests optimization for lots of airflow.

Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.

AgentLozen: I'm glad that there's an option for an effective two-slot version of the 1080Ti on the market. I'm indifferent toward the design but I'm sure people who are looking for it will appreciate it just like the article says.

gio2vanni86: I have two of these, i'm still disappointed in the sli performance compared to my 980's. What i can do but complain. Nvidia needs to do a driver game overhaul these puppies should scream together. They do the opposite which makes me turn sli off and boom i get better performance from 1. Its pathetic. Nvidia should just kill Sli all together since they got rid of triple sli they mind as well get rid of sli as well.

ahnilated: I have one of these and the noise at full load on these is very annoying. I am going to install one of Arctic Cooling's heatsinks. I would think with a 3 fan setup this system would cool better and not have a noise issue like this. I was quite disappointed with the noise levels on this card.

Jeff Fx (replying to gio2vanni86): SLI has always had issues. Fortunately, one of these cards will run games very well, even in VR, so there's no need for SLI.

dstarr3 (replying to gio2vanni86): It needs support from nVidia, but it also needs support from every developer making games. And unfortunately, the number of users sporting dual GPUs is a pretty tiny sliver of the total PC user base. So devs aren't too eager to pour that much support into it if it doesn't work out of the box.

FormatC: Dual-GPU is always a problem and not so easy to realize for programmers and driver developers (profiles). AFR is totally limited and I hope that we will see in the future more Windows/DirectX-based solutions. If....

Sam Hain: For those praising the 2-slot design for its "better-than" for SLI... True, it does make for a better fit, physically.

However, SLI is and has been fading for both NV and DV's. Two, that heat-sig and fan profile requirements in a closed case for just one of these cards should be warning enough to veer away from running in a 2-way SLI using stock and sometimes 3rd party air cooling solutions.

SBMfromLA: I recall reading an article somewhere that said NVidia is trying to discourage SLi and purposely makes them underperform in SLi mode.

Sam Hain (quoting post 19810871): "Unlike Asus & Gigabyte, which slap 2.5-slot coolers on their GTX 1080 Tis, EVGA remains faithful to a smaller form factor with its GTX 1080 Ti FTW3 Gaming."

Great article!

photonboy: NVidia does not "purposely make them underperform in SLI mode". And to be clear, SLI has different versions. It's AFR that is disappearing. In the short term I wouldn't use multi-GPU at all. In the LONG term we'll be switching to Split Frame Rendering.

http://hexus.net/tech/reviews/graphics/916-nvidias-sli-an-introduction/?page=2

SFR really needs native support at the GAME ENGINE level to minimize the work required to support multi-GPU. That can and will happen, but I wouldn't expect to see it have much support for about TWO YEARS or more. Remember, games usually have 3+ years of building so anything complex needs to usually be part of the game engine when you START making the game.