Nvidia Infuses DGX-1 With Volta, Eight V100s In A Single Chassis

If you happen to need eight Tesla V100 GPUs with a total of 40,960 CUDA cores and 128GB of GPU memory crammed into one beefy chassis, Nvidia's got you covered. Yes, the DGX-1 can play Crysis (sorry to steal your thunder, commenters), but Nvidia designed it specifically for artificial intelligence workloads in the data center.

Artificial intelligence is reshaping the face of computing from the data center down to mobile devices. Nvidia GPUs already power a good portion of today's compute-intensive AI training, which is the critical step that enables the lighter-weight inference part of the deep learning equation. Processing data on the edge through inference, such as in IoT and mobile devices, brings its own set of challenges, but the real processing grunt work occurs in the data center.

Nvidia sells plenty of GPUs, but as with any business model, it's always more lucrative to step up to the system level. Selling complete systems also helps build a more robust developer ecosystem, which furthers the larger objective of bringing AI to a broad range of applications that touch every facet of modern computing.

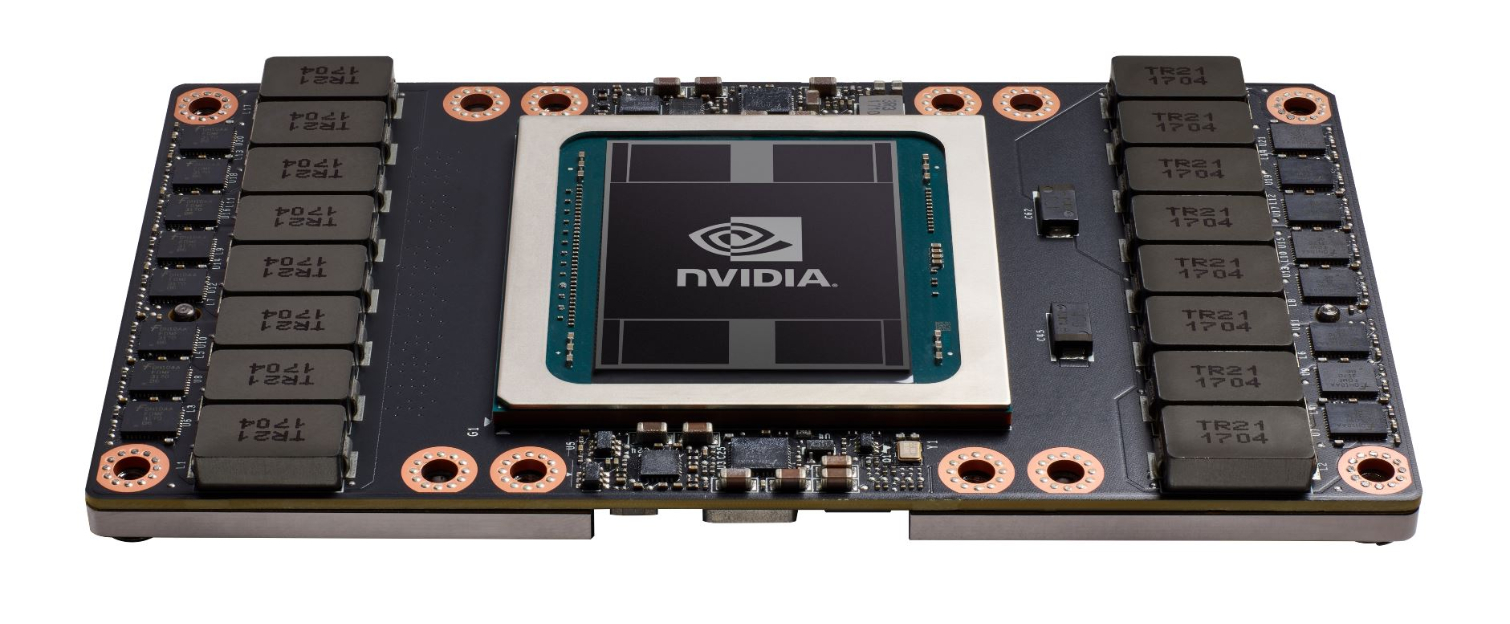

Tesla 150W FH-HL Accelerator

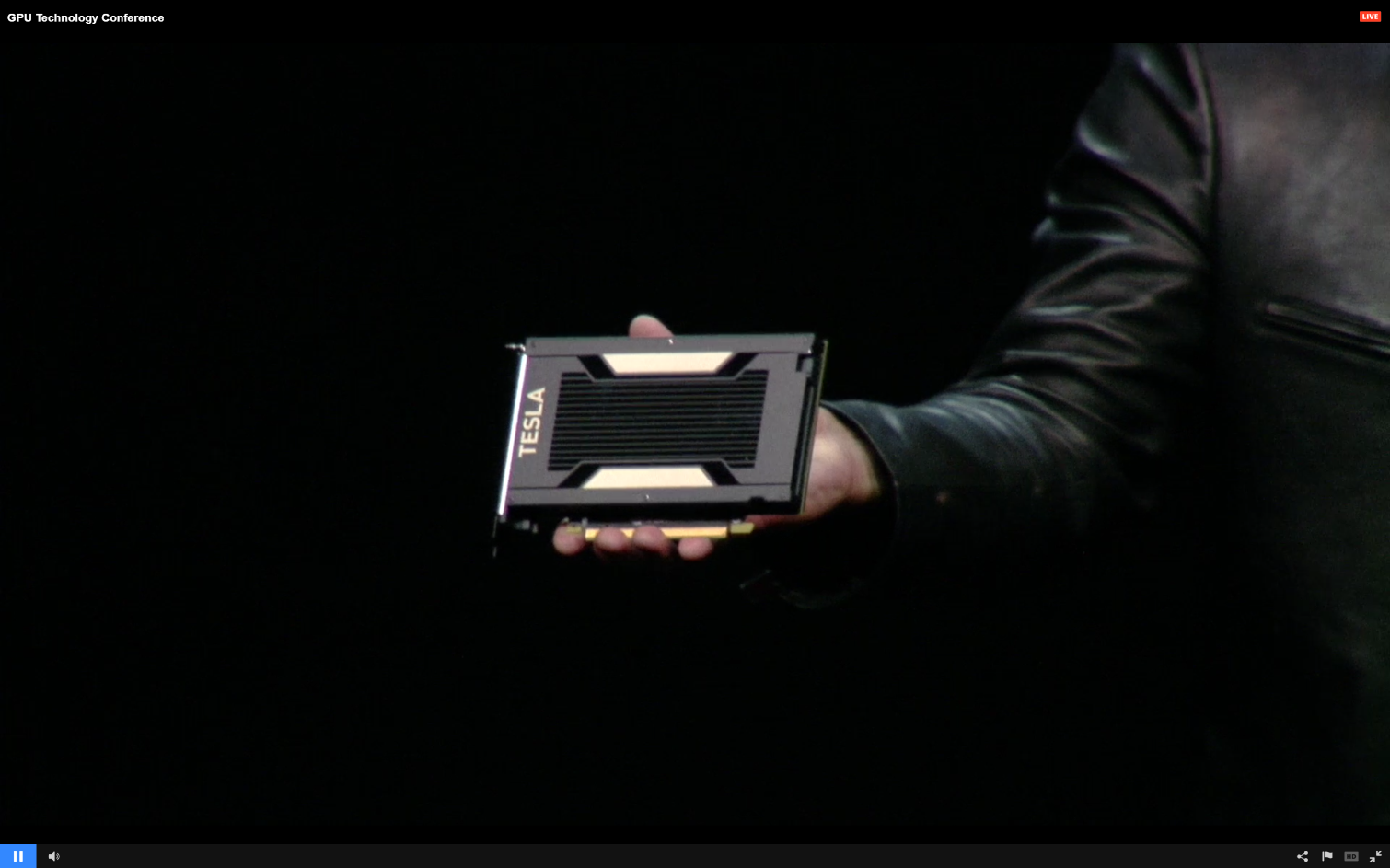

Of course, Nvidia isn't going to stray too far from the discrete component side of the house. The company also announced a 300W Tesla V100 accelerator and a 150W FH-HL (Full Height-Half Length) version. Both models feature the Volta GV100, but Nvidia designed the smaller FH-HL model to bring Volta to commodity inference servers.

The new model is designed to reduce power consumption and heat generation in scale-out deployments. Nvidia CEO Jen-Hsun Huang displayed the 150W version of the card on stage at GTC, but didn't provide any technical details. Inference workloads aren't as intense as training, and considering the lower power envelope, the card will logically be slower than its 300W counterpart. The important takeaway is that the smaller version will offer a more power- and cost-efficient GPU option for tackling inference workloads.

Volta DGX-1

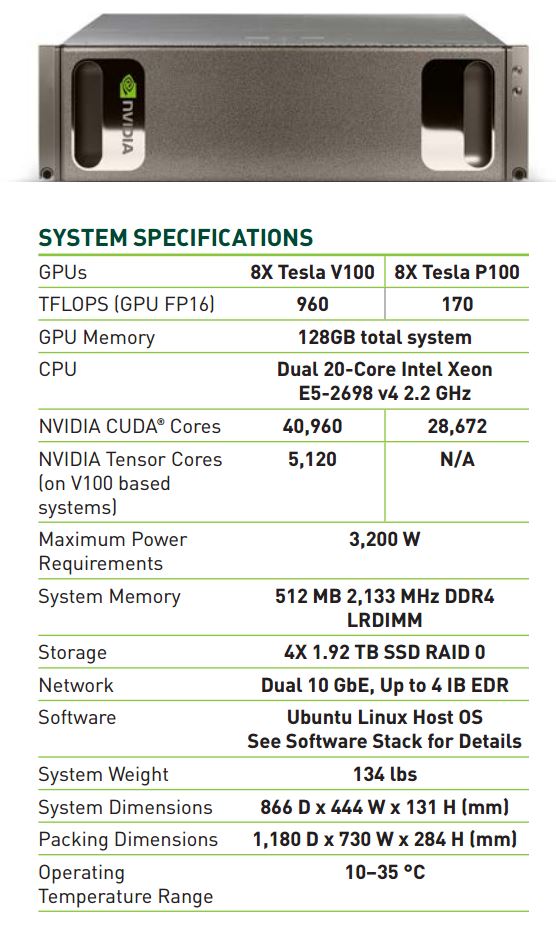

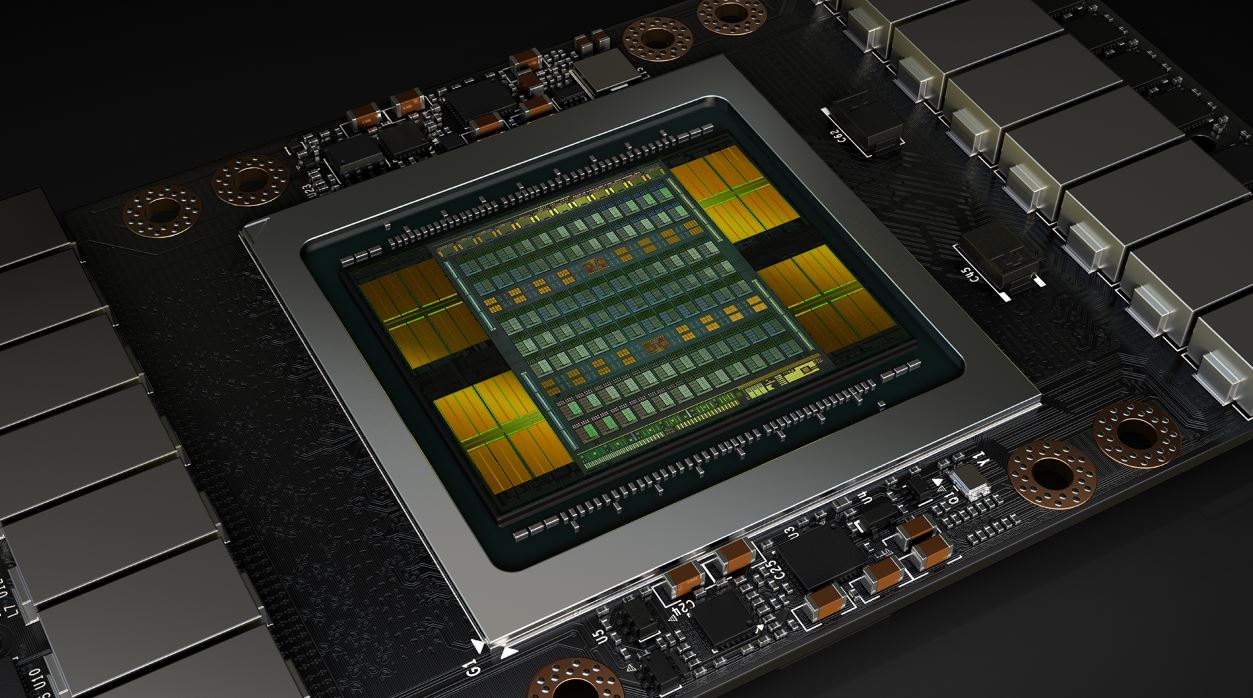

Nvidia announced its first-generation DGX-1 supercomputer last year. The Tesla-powered DGX-1 came bristling with eight P100 data center GPUs. The company has already seen strong uptake, with Elon Musk's OpenAI nonprofit among the first recipients. Nvidia claimed the first-generation DGX-1 could replace 400 CPUs, delivering up to 170 TFLOPS (FP16) courtesy of 28,672 CUDA cores. NVLink provided up to 5X the performance of a standard PCIe connection.

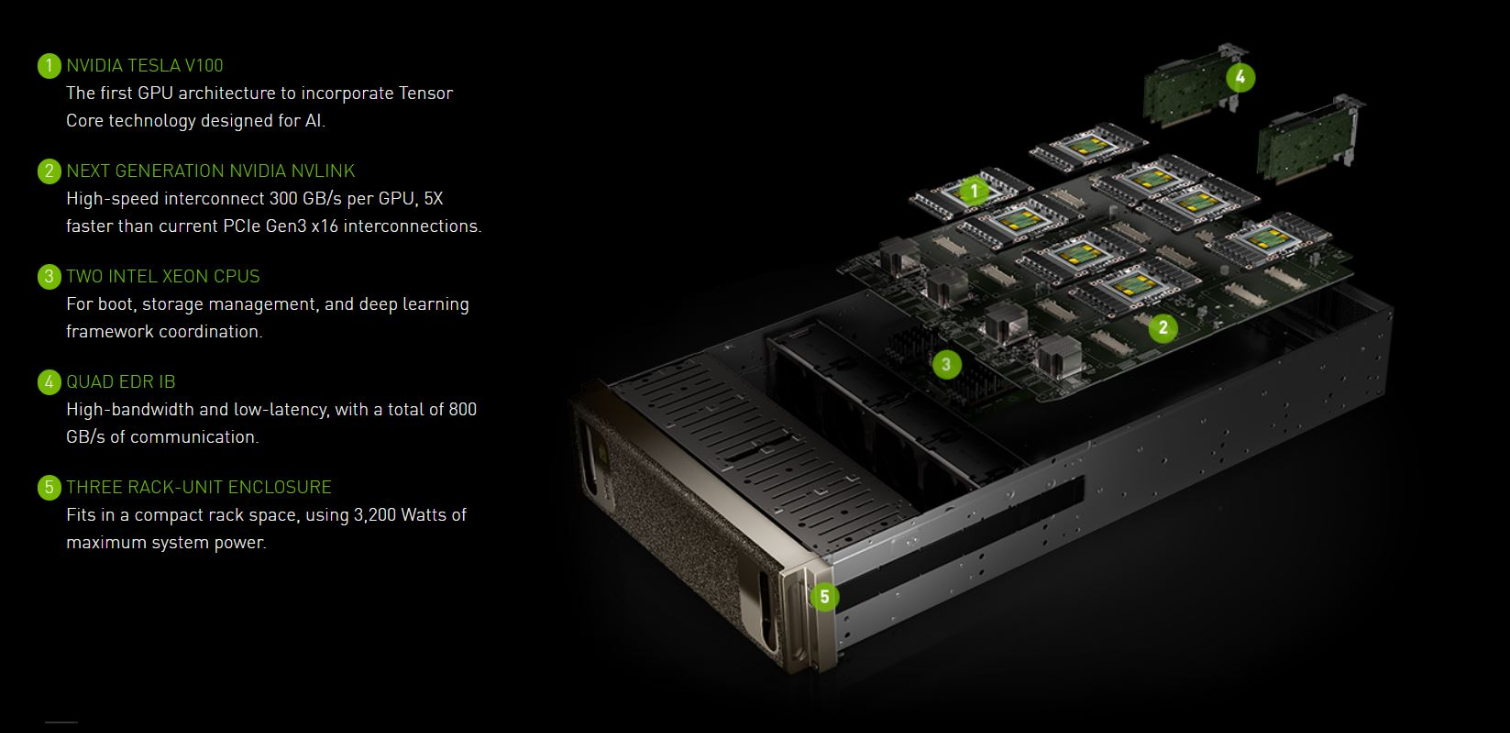

Those are impressive specs, indeed, but now Nvidia is upping the ante with the new Tesla V100-powered DGX-1. The new system crams eight Volta GPUs into a 3U chassis to deliver up to 960 TFLOPS (FP16) from 40,960 CUDA cores. It also adds 5,120 Tensor cores, and NVLink 2.0 increases throughput to 10X that of a standard PCIe connection (300GB/s). Power consumption weighs in at 3,200W. Nvidia claims the platform can replace 800 CPUs (or 400 servers) and can reduce the time required for a training task from eight days with a Titan X down to eight hours.
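To put the system-level totals in perspective, here is a rough back-of-the-envelope sketch that divides the quoted figures by the eight GPUs in each chassis. All inputs are the numbers cited in this article; the per-GPU values, the generational ratio, and the TFLOPS-per-kilowatt figure are derived for illustration and are not official specifications. The variable and function names are our own, and because the V100 figure leans on the new Tensor cores, the generational comparison is not strictly like-for-like.

```python
# System-level figures as quoted in the article.
# Derived values below are illustrative arithmetic, not official specs.
SYSTEMS = {
    "DGX-1 (P100)": {"gpus": 8, "fp16_tflops": 170, "cuda_cores": 28_672},
    "DGX-1 (V100)": {"gpus": 8, "fp16_tflops": 960, "cuda_cores": 40_960,
                     "tensor_cores": 5_120, "watts": 3_200},
}


def per_gpu(system):
    """Divide each system-level total by the GPU count (power is left out)."""
    n = system["gpus"]
    return {k: v / n for k, v in system.items() if k not in ("gpus", "watts")}


pascal = per_gpu(SYSTEMS["DGX-1 (P100)"])
volta = per_gpu(SYSTEMS["DGX-1 (V100)"])

# Generation-over-generation ratio of the quoted peak TFLOPS figures.
speedup = (SYSTEMS["DGX-1 (V100)"]["fp16_tflops"]
           / SYSTEMS["DGX-1 (P100)"]["fp16_tflops"])

# Quoted peak TFLOPS per kilowatt of system power for the Volta DGX-1.
tflops_per_kw = (SYSTEMS["DGX-1 (V100)"]["fp16_tflops"]
                 / (SYSTEMS["DGX-1 (V100)"]["watts"] / 1000))

if __name__ == "__main__":
    print("Per P100:", pascal)
    print("Per V100:", volta)
    print(f"Quoted peak ratio, V100 vs. P100 system: {speedup:.2f}x")
    print(f"Quoted peak TFLOPS per kW (V100 system): {tflops_per_kw:.0f}")
```

By this arithmetic each V100 contributes 5,120 CUDA cores and 640 Tensor cores, up from 3,584 CUDA cores per P100 in the first-generation system.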


Software is just as important as the hardware. The DGX-1 comes with an integrated Nvidia deep learning software stack and cloud management services, which the company claims shorten time to deployment. The software stack supports many of the common tools, such as PyTorch, Caffe, and TensorFlow, among others, and also includes a Docker containerization tool. The Volta DGX-1 will ship in Q3 for $149,000. Customers can purchase the solution today with P100 GPUs, and Nvidia will provide a free upgrade to V100 GPUs in Q3, company CEO Jen-Hsun Huang announced on stage at GTC.

DGX Station

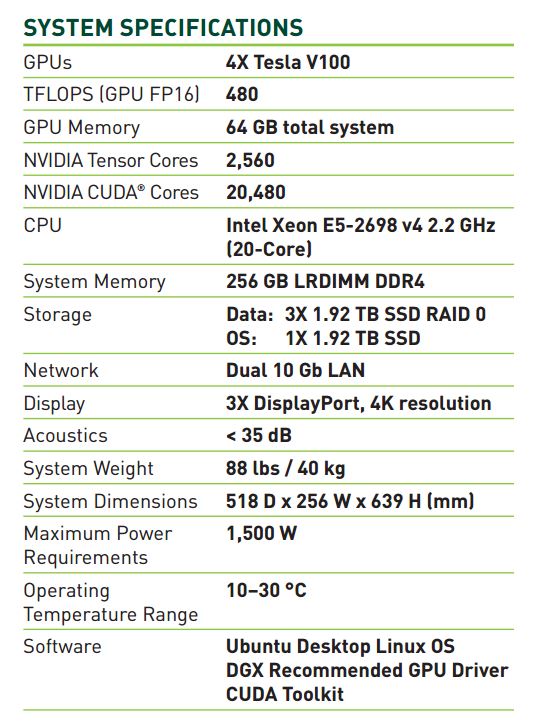

Nvidia designed the DGX Station for smaller deployments, so it's essentially a smaller alternative with a lower entry price.

Nvidia claims the svelte system can replace up to 400 CPUs while consuming 1/20th the power, and that it is whisper quiet due to its integrated water cooling system. It pulls 1,500W to power four V100 GPUs and deliver 480 TFLOPS (FP16). Nvidia also claims the workstation provides a 100X speed-up for large data set analysis compared to a 20-node Spark server cluster.

The system carries a $69,000 price tag and also comes with a software suite.

HGX-1

Nvidia also touted its HGX-1, which it developed in collaboration with Microsoft to power Azure deep learning, GRID graphics, and CUDA HPC workloads, but the company was light on details. The HGX-1 appears to be a standard DGX-1 chassis paired with a complementary 1U server.

The servers are connected via flexible PCIe cabling, and the configuration allows Microsoft to mix and match CPU and GPU requirements, such as having two or four CPUs paired with a varying number of GPUs in the secondary 3U DGX-1 chassis. The system isn't available to the public, but it likely serves as a nice reminder to Nvidia's investors that the company is heavily engaged in wooing high-margin hyperscale data center customers.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


Janissaire high end gaming for rich peoples lolz, you can tell it by looking at the chassis online & the premium look of the graphic card.

bit_user "NVLink 2.0 increases throughput to 10X that of a standard PCIe connection (300GB/s)."

Comparing aggregate vs. point-to-point bandwidth.

: (

A single NVLink2 is really just ~56% faster than PCIe 3.0 x16.
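The arithmetic behind the aggregate versus point-to-point distinction can be sketched as follows. The link figures are assumptions that do not appear in the article: 25GB/s per direction per NVLink 2.0 link, six links per V100, and a nominal 16GB/s per direction for PCIe 3.0 x16 (slightly less after encoding overhead). The constant names are our own.

```python
# Assumed link figures (not stated in the article).
NVLINK2_GBS_PER_DIRECTION = 25.0     # one NVLink 2.0 link, one direction
NVLINK2_LINKS_PER_GPU = 6            # links on a Tesla V100
PCIE3_X16_GBS_PER_DIRECTION = 16.0   # nominal, before encoding overhead

# Aggregate: every link, both directions summed.
nvlink_aggregate = NVLINK2_GBS_PER_DIRECTION * 2 * NVLINK2_LINKS_PER_GPU
pcie_bidirectional = PCIE3_X16_GBS_PER_DIRECTION * 2
aggregate_ratio = nvlink_aggregate / pcie_bidirectional

# Point-to-point: a single link against a single x16 slot, one direction.
single_link_gain = NVLINK2_GBS_PER_DIRECTION / PCIE3_X16_GBS_PER_DIRECTION - 1

if __name__ == "__main__":
    print(f"NVLink 2.0 aggregate: {nvlink_aggregate:.0f} GB/s")
    print(f"Aggregate ratio vs. PCIe 3.0 x16: {aggregate_ratio:.2f}x")
    print(f"Single link vs. PCIe 3.0 x16: {single_link_gain:.1%} faster")
```

Under these assumptions the 300GB/s headline is the sum across all six links in both directions, which is roughly 9.4X a bidirectional PCIe 3.0 x16 slot, while one link on its own comes out about 56% faster.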

drajitsh Paul please check. You alluded that it CAN be used a gpu, "Yes, the DGX-1 can play Crysis " . Tesla cards do NOT have display out hardware. You do NOT get DVI/DP/HDMI ports in tesla cards.

coolitic I believe a few tesla cards had display output in the past at least, for whatever reason. But yeah, most don't.

Fiqar_ I'm so sick of everyone following apple in terms of design. Be orginal for christs sake! This looks like something straight out of an Apple launch event! I mean the premium look alone gives it away!

bit_user

19677114 said: "I'm so sick of everyone following apple in terms of design. Be orginal for christs sake! This looks like something straight out of an Apple launch event! I mean the premium look alone gives it away!"

Wow, of all the things to pick on...

Anyway, for those of us who don't watch Apple launch events, what are you claiming resembles them? I have no idea what you mean by "premium look".

Karadjgne Theres no issue with not having DP out on that monstrosity, it'll never be used in a pc, not with a 3200w necessity power consumption. The only way anyone will use it with games is via network.

bit_user

19678797 said: "Theres no issue with not having DP out on that monstrosity, it'll never be used in a pc, not with a 3200w necessity power consumption. The only way anyone will use it with games is via network."

There's allegedly a Quadro GP100 graphics card. So, it's plausible there could someday be a Quadro GV100.

If this is to be believed, it looks like the drivers still need work:

http://www.videocardbenchmark.net/gpu.php?gpu=Quadro+GP100&id=3721

Karadjgne Dunno, I've always wondered about that, can't be just the drivers. Look at the Quadro's out now, like the $800 m4000, it's got 1664 Cc, 192 mem interface and 8Gb vram and DP 1.2 vrs the 1080ti that has double the Cc, 352 mem interface, 11Gb vram and DP 1.4 yet is $100 cheaper. And yet the gtx can't do what that m4000 can, so I'm guessing there's considerably more to what else is on those cards than just the physical specs, cuz in gaming the m4000 is gonna get Stomped by the gtx, yet in Cad, the gtx is gonna get Stomped and it can't all be just the drivers. The Quadro's / FirePros were never really good at gaming.

Elrabin

19679533 said: "Dunno, I've always wondered about that, can't be just the drivers. Look at the Quadro's out now, like the $800 m4000, it's got 1664 Cc, 192 mem interface and 8Gb vram and DP 1.2 vrs the 1080ti that has double the Cc, 352 mem interface, 11Gb vram and DP 1.4 yet is $100 cheaper. And yet the gtx can't do what that m4000 can, so I'm guessing there's considerably more to what else is on those cards than just the physical specs, cuz in gaming the m4000 is gonna get Stomped by the gtx, yet in Cad, the gtx is gonna get Stomped and it can't all be just the drivers. The Quadro's / FirePros were never really good at gaming."

Why are you comparing an older model to a newer model?

M4000 is based on Maxwell. A more fair comparison would be to the P5000 which is based on Pascal, same as the 1080

GTX 1080 has 2560 CUDA cores at 1733 mhz and 8gb of GDDR5x

The P5000 has 2560 CUDA cores at an unspecified clock speed and 16gb of GDDR5x

They should perform almost identically in gaming and workstation tasks once clock speed is taken into account.

I'm using a GTX 1080 in Nvidia IRAY 3d renders and it stomp the snot out of a M4000 for that task.

http://www.migenius.com/products/nvidia-iray/iray-benchmarks-2016-3