Meta will deploy standalone Nvidia Grace CPUs in production, with Vera to follow — company sees perf-per-watt improvements of up to 2X in some CPU workloads

Nvidia lands a big win in its ambitions to become a CPU vendor

Beyond selling its Vera data center CPUs as part of Vera Rubin NVL72 rack-scale systems, Nvidia has expressed ambitions to become a standalone data center CPU vendor, and a new partnership with hyperscale giant Meta represents a big step forward for that plan.

As part of a new multi-year strategic partnership announced today, Meta says it'll expand its use of Nvidia tech as it continues to build out hyperscale data centers optimized for its AI training and inference efforts. Those plans include “millions” of Blackwell and Rubin GPUs, part of a massive AI spending plan from Meta that could reach as much as $135 billion in total for 2026.

As part of the expanded collaboration between the two companies, Meta will also use Nvidia's Arm-powered Grace server CPUs as standalone platforms in its production data centers. The companies say their collaboration is the first "large-scale Grace-only deployment," and it's meant to deliver a sizeable performance-per-watt improvement for the company's facilities.

Nvidia data center honcho Ian Buck told The Register that Meta is seeing gains of up to 2x the performance per watt on certain workloads with the Grace platform, and that it's already test-driving Nvidia's next-gen Vera CPU with "very promising" results. The companies say that large-scale Vera-only deployments could begin as soon as 2027.

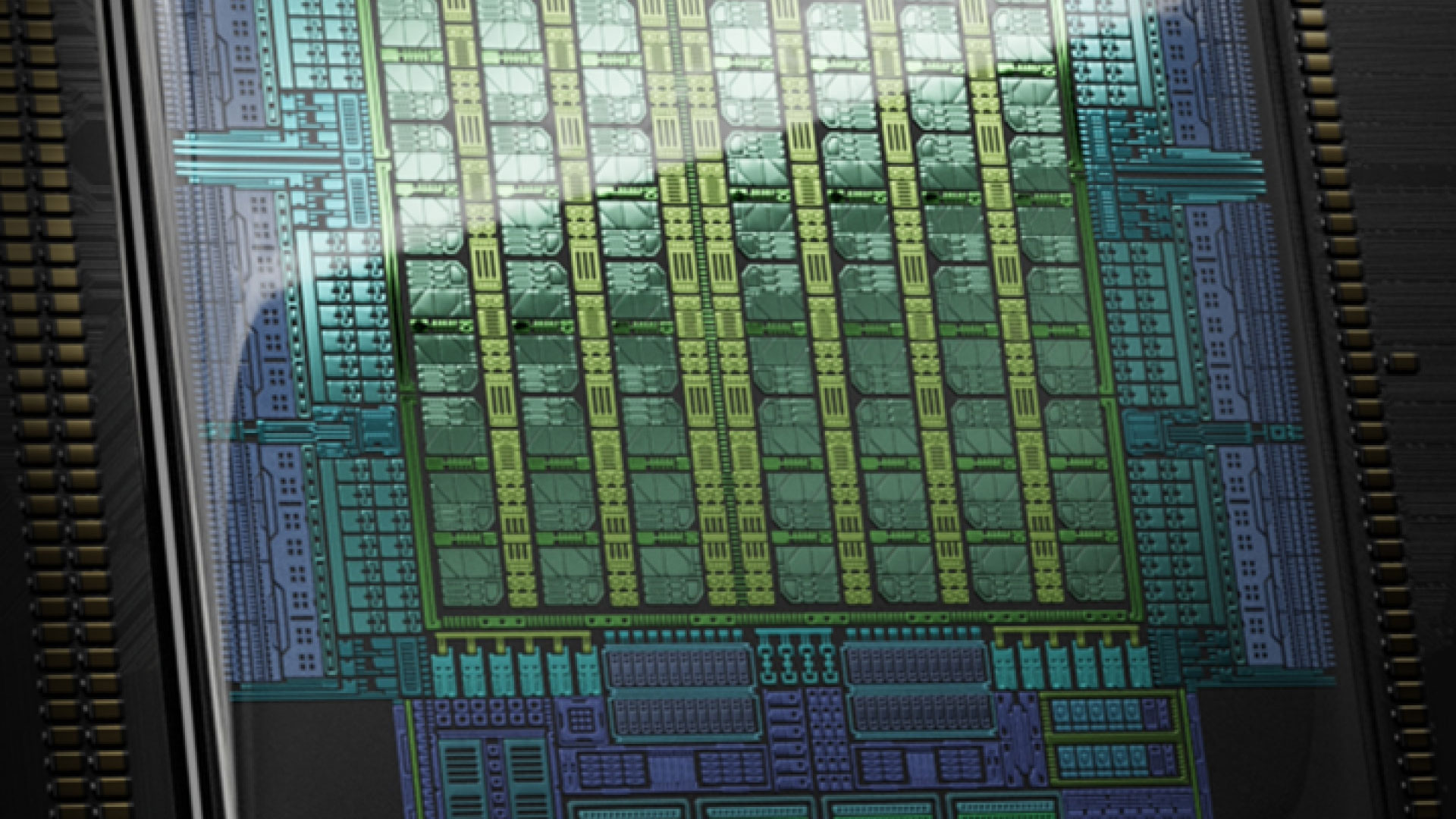

For reference, the Grace CPU sports 72 Arm Neoverse V2 cores and supports up to 480GB of LPDDR5X memory in its standalone C1 config. Nvidia also offers Grace as a "CPU Superchip" that joins two dies together over the NVLink-C2C interconnect, resulting in 144 cores with up to 960GB of LPDDR5X and up to 1024 GB/s of aggregate memory bandwidth at certain memory capacities.

Vera, in turn, has 88 custom Arm cores with up to 176 threads, support for up to 1.5 TB of LPDDR5X memory with up to 1.2TB/s of memory bandwidth, and PCIe Gen 6 and Compute Express Link 3.1 connectivity. Importantly, Vera is also the first Nvidia CPU to support a confidential or trusted computing environment throughout the entirety of its rack-scale systems.

Beyond CPUs, Meta also says it'll deploy Nvidia Spectrum-X Ethernet switches throughout its data centers. As we learned at CES, Spectrum-X switches with co-packaged optics promise to increase performance-per-watt for scale-out applications by eliminating active cabling with optical transceivers that can, when combined with the power usage of the switch itself, account for up to 10% of each rack’s power consumption.

Power used for data movement is power that isn’t used to feed GPUs. With demand for the absolute maximum compute density and efficiency in every rack these days, savings of that magnitude are a huge deal, so it's no surprise that Meta is hopping on board as it continues to expand its footprint.

Beyond hardware, Nvidia will offer its considerable in-house expertise in designing AI models to Meta’s engineers to help the company tune and boost the performance of its own core AI applications.

All of this goes to show that as the AI revolution continues, Nvidia’s reach extends far beyond gaming graphics cards. People are likely to use software powered or shaped by its models and accelerators whether they realize it or not, and that’ll only grow more true as Meta expands its use of Nvidia's platforms and tech.

As the Senior Analyst, Graphics at Tom's Hardware, Jeff Kampman covers everything to do with GPUs, gaming performance, and more. From integrated graphics processors to discrete graphics cards to the hyperscale installations powering our AI future, if it's got a GPU in it, Jeff is on it.

-

bit_user It looks like ARM CPUs were the straw that broke the camel's back.

AMD should've kept going with its ARM ambitions. Maybe they didn't have the resources to complete the K12, but they should've made successors when their financial picture started to improve.

I had thought their Sound Wave SoC would feature Zen-based ARM cores, but rumors indicate they just had IP core licensed from ARM. I guess we'll have to wait a bit longer to see how well the Zen cores can compete, without being bogged down by the legacy of x86. -

thestryker If Neoverse cores were good enough to enhance their workloads they could have had this at pretty much any time. Seems like they just didn't want to do it themselves which they absolutely have the scale to have done.

Unfortunate that with all the acquisition stuff going on Ampere wouldn't be an option as this seems like the type of market they were targeting. -

bit_user

> thestryker said: If Neoverse cores were good enough to enhance their workloads they could have had this at pretty much any time.

All of the other Hyperscalers did. Microsoft, Amazon, and Google.

> thestryker said: Seems like they just didn't want to do it themselves which they absolutely have the scale to have done.

Maybe Meta just thought it was extending themselves too far to build their own CPUs, when they were already struggling to build their own AI accelerators? So far, the only off-the-shelf ARM server chip has been Ampere, but they haven't really been competitive for like 4-5 years.

> thestryker said: Unfortunate that with all the acquisition stuff going on Ampere wouldn't be an option as this seems like the type of market they were targeting.

AmpereOne just isn't very competitive. I think it missed its market window. It's very hard to beat the big boys at their own game. -

thestryker

> bit_user said: AmpereOne just isn't very competitive. I think it missed its market window. It's very hard to beat the big boys at their own game.

The acquisition situation seemed to ensure it was never going to happen. It never seemed to have a real launch despite existing in 2024. -

bit_user

> thestryker said: The acquisition situation seemed to ensure it was never going to happen. It never seemed to have a real launch despite existing in 2024.

No, Phoronix benchmarked it. It simply wasn't competitive enough. That's why you didn't hear much about it.

https://www.phoronix.com/review/ampereone-a192-32x

They even followed through with launching AmpereOne M, although I don't know where else it surfaced besides Oracle.

https://www.phoronix.com/news/AmpereOne-M-Oracle-Cloud-A4

Post-acquisition, it seems they're not quite dead, yet:

https://www.phoronix.com/news/LLVM-Clang-Ampere1C -

bit_user I think RAM could be playing a big role in this, in two ways. First, Nvidia is somewhat well-known for buying up capacity in advance. However many Grace CPUs they had secured wafers for, they probably bought up enough LPDDR5X to populate them. Because it's a fixed amount per CPU, that's an easy calculation to make. Having made these DRAM purchases in advance could be making them more cost-competitive, right now.

The second point is about energy-efficiency. Here's where LPDDR5X has a huge advantage over DDR5 DIMMs. Given how much efficiency was highlighted in this article, it seems like that might be a factor at play.

As for the relatively small core & thread-count, NVLink can mitigate that, by scaling up servers well beyond the typical dual sockets you tend to find in mainstream cloud compute. I'd be really interested in knowing just how many Grace CPUs per machine Meta is deploying. That could go some ways toward further improving the value proposition. -

TerryLaze

> bit_user said: It looks like ARM CPUs were the straw that broke the camel's back.
>
> AMD should've kept going with its ARM ambitions. Maybe they didn't have the resources to complete the K12, but they should've made successors when their financial picture started to improve.
>
> I had thought their Sound Wave SoC would feature Zen-based ARM cores, but rumors indicate they just had IP core licensed from ARM. I guess we'll have to wait a bit longer to see how well the Zen cores can compete, without being bogged down by the legacy of x86.

Does nvidia have any arm core only product?!?

My understanding was that the arm cores are just there to feed the GPUs and that the GPUs are the main attraction.

AMD would have to get their GPU business up to snuff first. -

thestryker

> TerryLaze said: Does nvidia have any arm core only product?!?

https://www.nvidia.com/en-us/data-center/grace-cpu-superchip/

scroll down to: NVIDIA Grace CPU C1 -

bit_user

> TerryLaze said: Does nvidia have any arm core only product?!?
>
> My understanding was that the arm cores are just there to feed the GPUs and that the GPUs are the main attraction.

The main point of the article is that they're deploying machines containing only Grace CPUs and no GPUs.

But yes, Grace was ostensibly created with the primary purpose of supporting Hopper & Blackwell GPUs. That's why it's noteworthy they're being deployed without either. -

bit_user

> bit_user said: They even followed through with launching AmpereOne M, although I don't know where else it surfaced besides Oracle.
>
> https://www.phoronix.com/news/AmpereOne-M-Oracle-Cloud-A4

Pretty good synchronicity on this one, @thestryker. Apparently, ServeTheHome has one in their lab and is in the process of reviewing it.

Here's a preview:

https://www.servethehome.com/this-is-the-ampere-ampereone-m-a192-32m-192-core-12-channel-ddr5-arm-cpu/