AMD Radeon Vega RX 64 8GB Review

Clock Rates, Temperatures & Noise

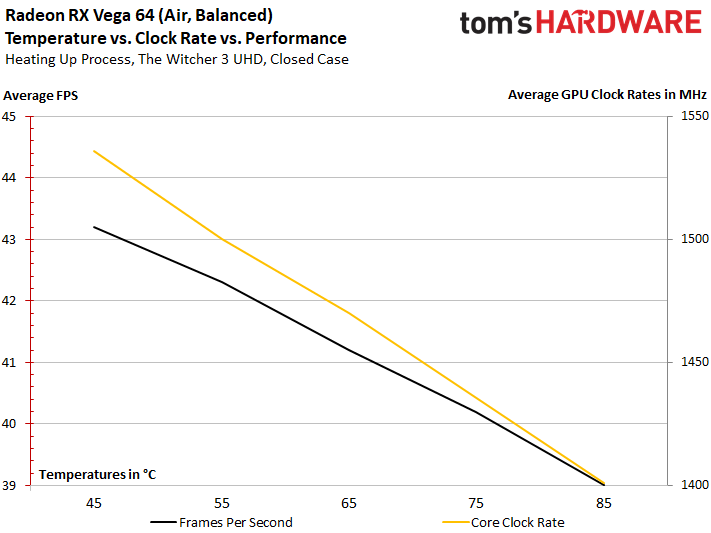

Temperature & Clock Frequency

A maximum temperature of 85°C is reached fairly quickly due to conservative fan control. At that point, the card loses approximately 6% of its performance compared to when it was still cool. This is almost entirely due to the automatic clock frequency throttling by ~9%. We calculated an average clock frequency across the entire run for every 5°C step. The results ranged from 1533 MHz when the card was cool all the way down to 1401 MHz when it was hot.
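The per-step averaging described above can be sketched roughly as follows. This is a minimal illustration in Python, not the tooling used for the review: the function name `mean_clock_per_step` and the sample readings are hypothetical, and a real sensor log would hold far more samples.

```python
from collections import defaultdict


def mean_clock_per_step(samples, step=5):
    """Average the clock rate (MHz) within each temperature bin of `step` degrees C.

    `samples` is an iterable of (temperature_c, clock_mhz) pairs.
    Returns a dict keyed by the lower edge of each bin.
    """
    bins = defaultdict(list)
    for temp_c, clock_mhz in samples:
        lower_edge = int(temp_c // step) * step  # e.g. 63.9 falls in the 60-65 bin
        bins[lower_edge].append(clock_mhz)
    return {edge: sum(vals) / len(vals) for edge, vals in sorted(bins.items())}


# Hypothetical readings, invented purely for illustration.
samples = [
    (41.0, 1536), (44.5, 1530),
    (62.0, 1480), (63.9, 1470),
    (84.0, 1402), (85.0, 1400),
]
averages = mean_clock_per_step(samples)
for edge, mhz in averages.items():
    print(f"{edge}-{edge + 5} °C: {mhz:.0f} MHz")
```

Binning by the lower edge keeps each 5°C step independent, so a short spike at one temperature does not skew the average reported for another.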

Temperature Curves & Power Consumption

This is where things get interesting. At the warmed-up card’s 1401 MHz, we measure approximately 285W. However, at the cold card’s 1533 MHz, we measure approximately 310W. This means that a 9% power consumption increase nets us a 9% frequency increase, which, in turn, yields a 6% gaming performance increase. In other words, the efficiency curve is already starting to dip. There’s not much headroom left.

That also means losses due to leakage don’t really play much of a role anymore. The days when 40W could be saved by keeping the card cool at the same frequency are gone.
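The percentages in the power and clock comparison above can be checked with a few lines of arithmetic. The sketch below uses only the figures quoted in the text (1401 and 1533 MHz, 285 and 310W, roughly 6% performance). The helper name `pct_increase` is our own, and the performance-per-watt ratio is a derived illustration rather than a measured value.

```python
def pct_increase(low, high):
    """Percentage increase going from `low` to `high`."""
    return (high - low) / low * 100.0


# Figures quoted in the text.
hot_mhz, cold_mhz = 1401, 1533
hot_watts, cold_watts = 285, 310
perf_gain_pct = 6.0  # approximate gaming performance gain when cool

freq_gain = pct_increase(hot_mhz, cold_mhz)       # roughly 9.4
power_gain = pct_increase(hot_watts, cold_watts)  # roughly 8.8

# Performance per watt of the cool card relative to the warmed-up card.
# A value below 1.0 means the extra power buys proportionally less performance.
rel_perf_per_watt = (1 + perf_gain_pct / 100) / (cold_watts / hot_watts)

print(f"Clock gain: {freq_gain:.1f}%")
print(f"Power gain: {power_gain:.1f}%")
print(f"Relative performance per watt: {rel_perf_per_watt:.3f}")
```

Clock and power scale almost one to one here, but performance per watt comes out slightly below 1.0, which is the dip in the efficiency curve that the text describes.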

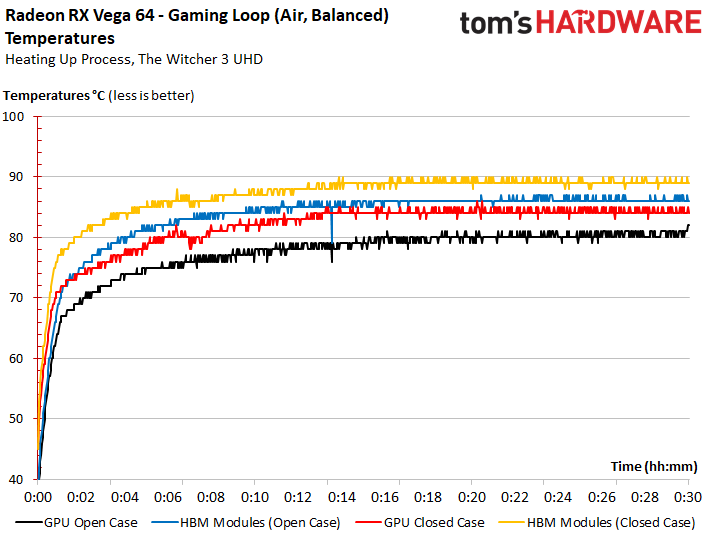

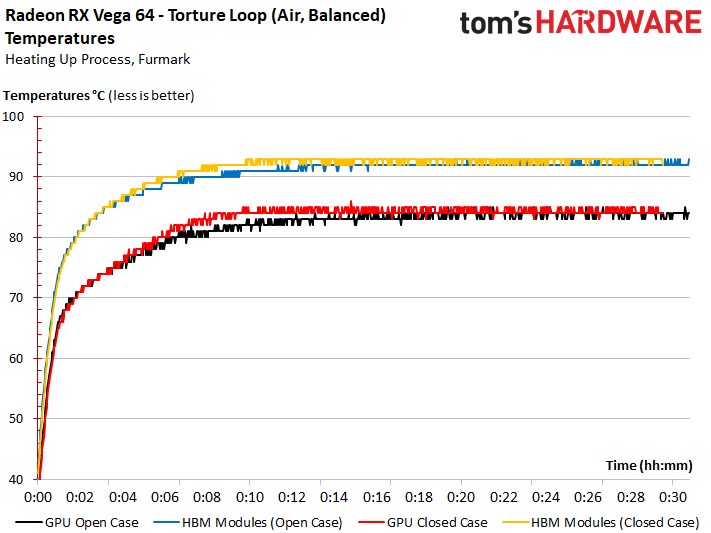

GPU vs. HBM2 Temperatures

Unless the sensors are lying to us, the GPU’s maximum temperature is 84°C (85°C peak), while the HBM2 gets up to 90°C (94°C peak during the stress test). That latter figure seems fairly high, but it does end up close to the ceiling for GDDR5X. We'll keep an eye on both temperatures during future tests; it's important to ensure the sensor data is 100% accurate.

During the stress test, the card heats up so quickly that the curves for the open and closed PC cases are practically on top of each other.

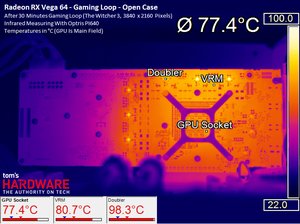

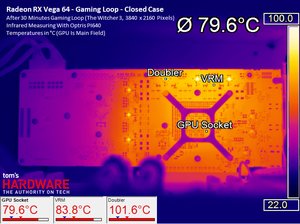

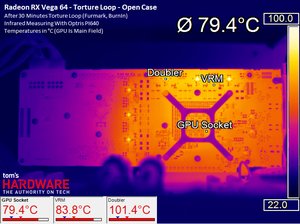

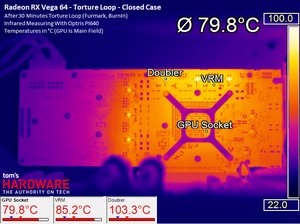

Board Temperatures

What jumps out to us is that the board just below the GPU reads ~5°C cooler than the inside of Vega 10! The obvious question is why. To answer, remember that AMD's Vega 10 GPU and its HBM2 sit on an interposer attached to a package substrate positioned on top of the PCB. The interposer doesn’t seem to make full contact with this substrate, causing a so-called underfill issue. Air between the layers acts almost like insulation.

During the stress test, temperatures are a little lower due to the fan spinning faster and Vega 10's lower (throttled) frequency.

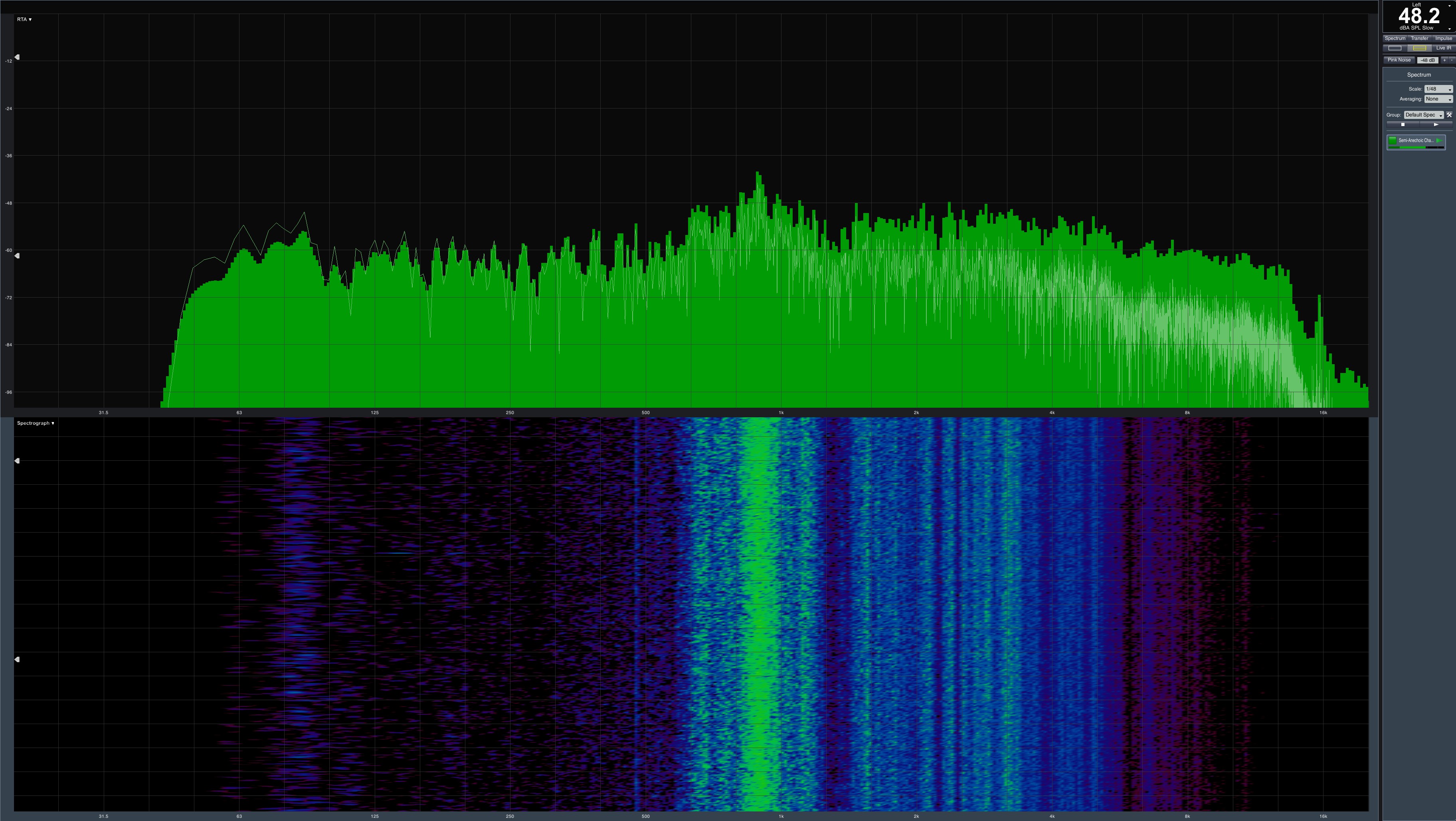

Noise

Using the primary BIOS and the Balanced power profile, Radeon RX Vega 64 generates a maximum of 48.2 dB(A). Switching to Turbo mode causes the card to exceed 50 dB(A).

We praised AMD’s cooling solution in our Vega Frontier Edition review. However, a pleasant breeze turns into a raging tornado this time around due to power consumption that's way too high. Then again, an Nvidia GeForce GTX 1080 Ti Founders Edition with a 295W maximum power target is almost as loud.

The version of AMD’s cooling solution used on Radeon RX Vega 64 is significantly different from the one we found on Vega Frontier Edition. It’s too aggressive, too hot, and, of course, too loud. Designing a thermal solution to be "good enough" never works out well for thermals or noise.

10tacle We waited a year for this? Disappointing. Reminds me of the Fury X release which was supposed to be the 980Ti killer at the same price point ($649USD if memory serves me correctly). Then you factor in the overclocking ability of the GTX 1080 (Guru3D only averaged a 5% performance improvement overclocking their Vega RX 64 sample to 1700MHz base/boost clock and a 1060MHz memory clock). This almost seems like an afterthought. Hopefully driver updates will improve performance over time. Thankfully AMD can hold their head high with Ryzen.

Sakkura For today's market I guess the Vega 64 is acceptable, sort of, since the performance and price compare decently with the GTX 1080. It's just a shame about the extreme power consumption and the fact that AMD still has no answer to the 1080 Ti.

But I would be much more interested in a Vega 56 review. That card looks like a way better option, especially with the lower power consumption.

envy14tpe Disappointing? what. I'm impressed. Sits near a 1080. Keep that in mind when thinking that FreeSync sells for around $200 less than Gsync. So pair that with this GPU and you have awesome 1440p gaming.

SaltyVincent This was an excellent review. The Conclusion section really nailed down everything this card has to offer, and where it sits in the market.

10tacle, quoting envy14tpe: "Disappointing? what. I'm impressed. Sits near a 1080."

The GTX 1080 has been out for 15 months now, that's why. If AMD had this GPU at $50 less then it would be an uncontested better value (something AMD has a historic record on both in GPUs and CPUs). At the same price point, however, as a comparable GPU that is a year and three months old, there's nothing to brag about, especially when looking at power use comparisons. But I will agree that if you include the cost of a G-Sync monitor vs. a FreeSync monitor, at face value the RX 64 is a better value than the GTX 1080.

redgarl It's not a bad GPU, however I would not buy one. I am having an EVGA 1080 FTW that I am living to hate (2 RMAs in 10 months), however even if I wanted to switch to Vega, it might not be a good idea. It will not change anything.

However two Vega 56 in CF might be extremely interesting. I did that with two 290x 2 years ago and it might be still the best combo out there.

blppt IIRC, both AMD and Nvidia are moving away from CF/SLI support, so you'd have to count on game devs supporting DX12 mgpu (not holding my breath on that one for the near future).

cknobman I game at 4k now (just bought 1080ti last week) and it appears for the time being the 1080ti is the way to go.

I do see promise in the potential of this new AMD architecture moving forward.

As DX12 becomes the norm and more devs take advantage of async then we will see more performance improvements with the new AMD architecture.

If AMD can get power consumption under control then I may move back in a year or two.

It's a shame too because I just built a Ryzen 7 rig and felt a little sad combining it with an Nvidia gfx card.

AgentLozen I'm glad that AMD has a video card for enthusiasts who run 144hz monitors @ 1440p. The RX 580 and Fury X weren't well suited for that. I'm also happy to see that Vega64 can go toe to toe with the GTX 1080. Vega64 and a Freesync monitor are a great value proposition.

That's where the positives end. Like everyone else, I'm upset with the lack of progress since Fury X. There was a point where Fury X was evenly matched with nVidia's best cards during the Maxwell generation. Nvidia then released their Pascal generation and a whole year went by before a proper response from AMD came around. If Vega64 launched in 2016, this would be a totally different story.

Fury X championed High Bandwidth Memory. It showed that equipping a video card with HBM could raise performance, cut power consumption, and cut physical card size. How did HBM2 manifest? Higher memory density? Is that all?

Vega64's performance improvement isn't fantastic, it gulps down gratuitous amounts of power, and it's huge compared to Fury X. It benefits from a new generation of High Bandwidth memory (HBM2) and a 14nm die shrink. How much more performance does it receive? 23% in 1440p. Those are Intel numbers!

Today's article is a celebration of how good Fury X really was. It still holds up well today with only 4GB of video memory. It even beat the GTX 1070 in several benchmarks. Why didn't AMD take the Fury X, shrink it to 14nm, apply architecture improvements from Polaris 10, and release it in 2016? That thing would be way better than Vega64.

edit: Reworded some things slightly. Added a silly quip. 23% comes from averaging the differences between Fury X and Vega64.

zippyzion Well, that was interesting. Despite its flaws I think a Vega/Ryzen build is in my future. I haven't been inclined to give NVidia any of my money for a few years now, since a malfunction with an FX 5900 destroyed my gaming rig... long story. I've been buying ATI/AMD cards since then and haven't felt let down by any of them.

Let us not forget how AMD approaches graphics cards and drivers. This is base performance and barring any driver hiccups it will only get better. On top of that, this testing covers the air cooled version. We should see better performance on the water cooled version that would land it between the 1080 and the Ti.

Also, I'd really like to see what low end and midrange Vega GPUs can do. I'm interested to see what the differences are with the 56, as well as the upcoming Raven Ridge APU. If they can deliver RX 560 (or even just 550) performance on an APU, AMD will have a big time winner there.