Watch Dogs 2 Performance Benchmarks

Benchmark Results & Conclusion

Test System and Software

We have summarized our standard test system's hardware and software configuration in the following table:

Alphacool Water Cooler (Nexxxos CPU Cooler, VPP655 Pump, Phobya Balancer, 24cm Radiator)

| Drivers | |
|---|---|
| AMD | Crimson 16.11.5 |
| Nvidia | GeForce 376.09 (GameReady) |


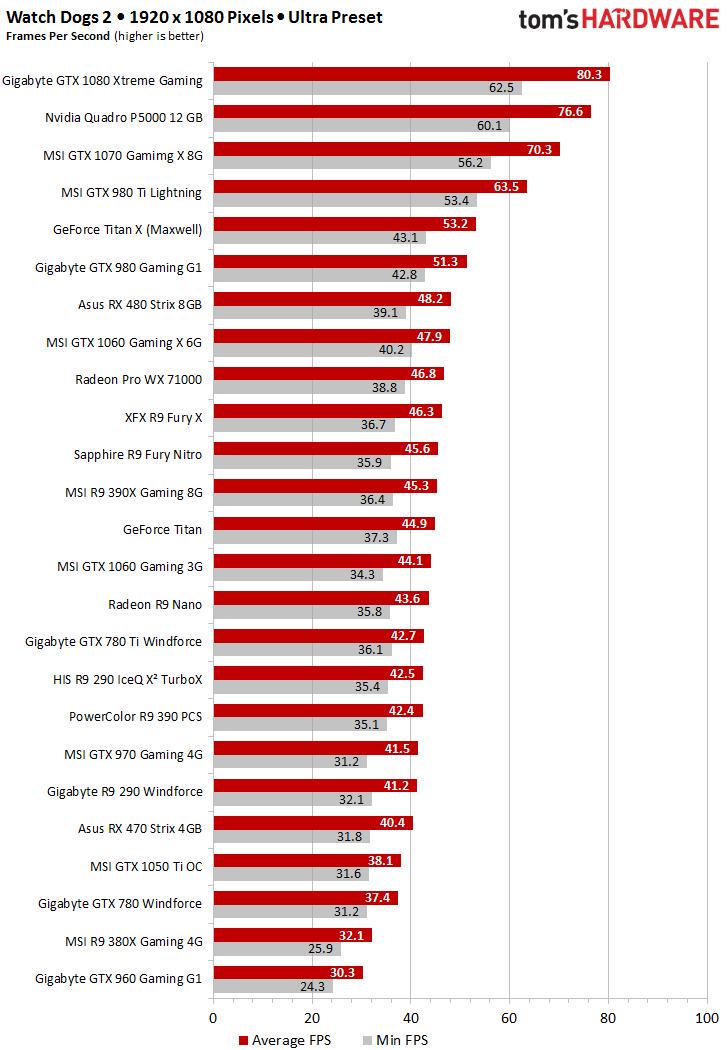

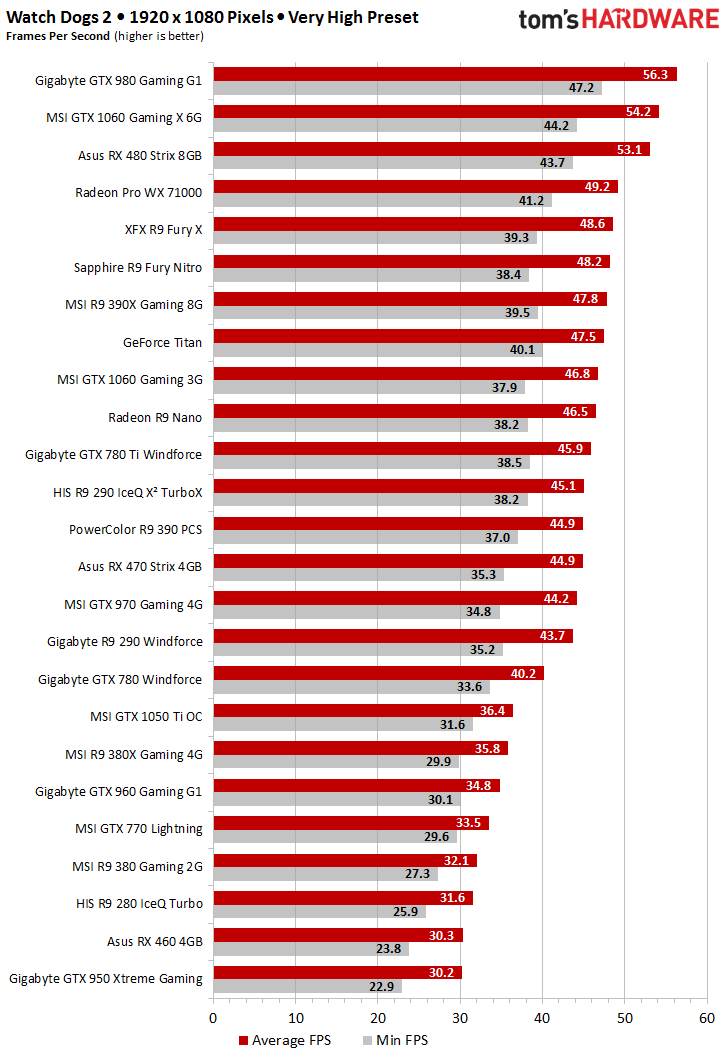

1920x1080 @ Ultra & Very High Presets

The following charts only include cards that could muster at least 30 FPS, since anything under that just isn't playable. Given how taxing this game can be, it's surprising to see how many cards are able to handle the Ultra detail preset at 1920x1080.

There’s not a lot of difference between the Ultra and Very High presets when it comes to graphics quality. Performance, on the other hand, is affected dramatically. Stepping down generally yields frame rates that are at least 10 percent higher, and the gain rises to 15 or 20 percent during less challenging workloads, depending on the graphics card and scene.
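For readers who want to check their own numbers against these percentages, here is a minimal sketch of the arithmetic. The function name `percent_gain` and the FPS values are hypothetical illustrations, not figures taken from our charts.

```python
def percent_gain(fps_ultra: float, fps_very_high: float) -> float:
    """Relative frame-rate gain, in percent, from stepping down a preset.

    Both arguments are average FPS values you measured yourself.
    """
    if fps_ultra <= 0:
        raise ValueError("fps_ultra must be positive")
    return (fps_very_high - fps_ultra) / fps_ultra * 100.0


# Hypothetical averages, chosen only to illustrate the calculation.
print(round(percent_gain(40.0, 44.0), 1))  # 10.0
print(round(percent_gain(50.0, 60.0), 1))  # 20.0
```

The gain is expressed relative to the Ultra result, so the same absolute FPS difference counts for more on a slower card.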

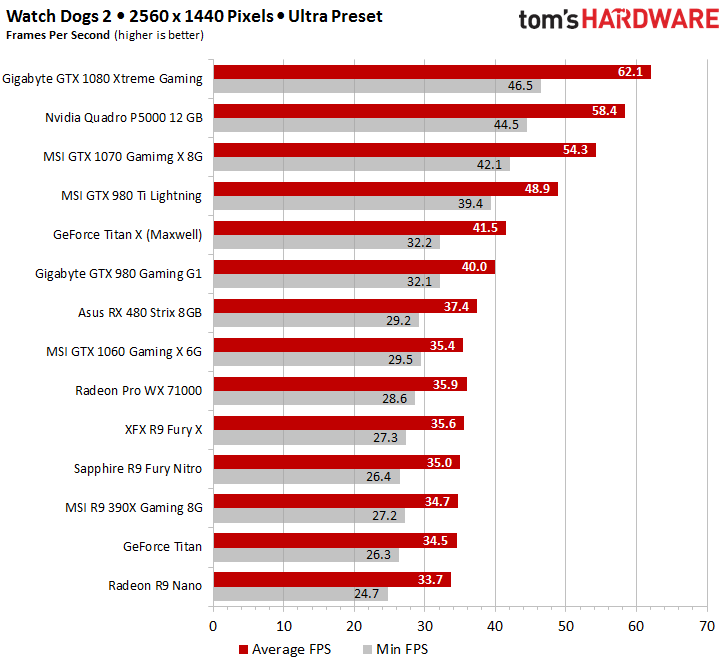

2560x1440 @ Ultra Preset

If you want your graphics card to keep up at 2560x1440, then you’ll need at least an AMD Radeon R9 Nano or Nvidia GeForce GTX 1060 with 6GB of GDDR5. The 3GB version doesn't make the list because it runs out of memory and generates unplayable frame rates. For all results below 50 FPS, we recommend switching to the Very High preset rather than trying to push Ultra.

If that’s still too much for the graphics card, then the High or Medium preset might seem preferable. At that point, though, dropping your resolution and sticking with a higher preset could be a better option.
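The rules of thumb from this page can be written down as a short sketch. The 30 FPS and 50 FPS thresholds come from the text above; the function name `suggest_preset` and the wording of its results are our own illustration, and the sample values are hypothetical.

```python
PLAYABLE_FPS = 30.0  # cutoff used for inclusion in the charts
COMFORT_FPS = 50.0   # below this at Ultra, we recommend Very High


def suggest_preset(avg_fps_ultra: float) -> str:
    """Map an average Ultra-preset frame rate to a rough recommendation."""
    if avg_fps_ultra < PLAYABLE_FPS:
        # Below the playability cutoff: Ultra is off the table.
        return "lower the preset or the resolution"
    if avg_fps_ultra < COMFORT_FPS:
        return "switch to Very High"
    return "stay on Ultra"


# Hypothetical averages, not measured results.
for fps in (25.0, 42.0, 63.0):
    print(fps, "->", suggest_preset(fps))
```

This only encodes the guidance for the Ultra preset; whether a lower preset or a lower resolution looks better remains a judgment call.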


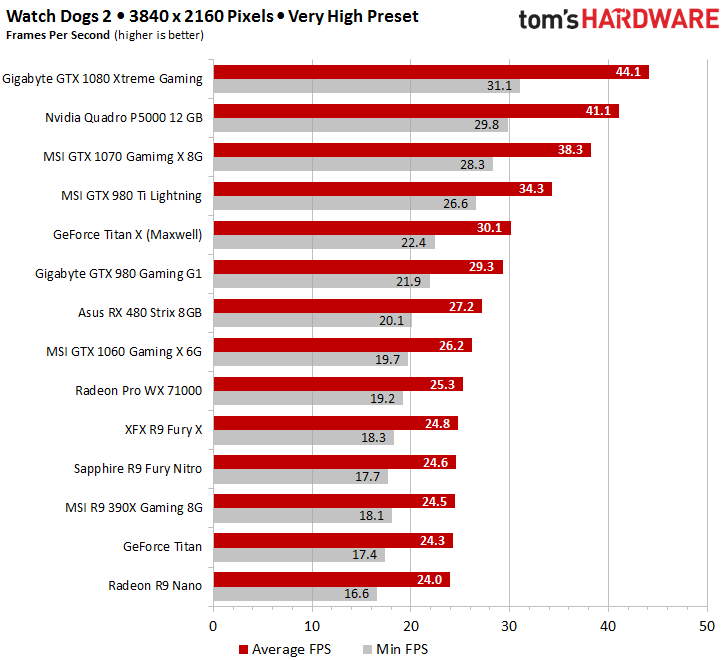

3840x2160 @ Very High Preset

Enthusiasts who don't have a problem with console-class FPS from their GeForce GTX 1080 can try the Ultra preset at 3840x2160. You may not enjoy the game as much, though. Realistically, the Very High preset is as good as it gets from a single-GPU configuration. But Watch Dogs 2 does support AMD's CrossFire and Nvidia's SLI technologies, so there's room to experiment with a second graphics card.

Conclusion

The first few hours of playing Watch Dogs 2 were compelling, both from a graphical and gameplay perspective. The settings are plentiful, varied, self-explanatory, and, most important, they actually have a significant impact on visual quality. However, there were also a few things that left us scratching our heads.

The most annoying aspect of Watch Dogs 2’s graphics was the ever-present aliasing. Some settings help reduce it, but this comes at the price of performance and sharpness. On the plus side, this game features the best-looking water we've seen in a long time. Vehicle handling comes straight from the arcade, but that style fits the often-cheeky dialog and everything-but-stiff characters.

We can forgive the problems with the anti-cheating tool, which just won’t die and causes online-related issues even when you thought you were offline. However, crashing straight to the desktop every so often is just annoying (even if our benchmarking doesn't represent normal gaming behavior).

To be fair, we penned our summary right after many hours of intensive benchmarking, when emotions are still running high. Even if the game doesn't deliver all that gamers were hoping for, we have to credit Ubisoft for serving up a finished product. Sadly, this is far from the norm these days. For instance, Mafia III shipped with problems that were bad enough to make it partially unplayable.

Gamers who put some time and effort into figuring out the best detail settings for their PCs, and who own modern graphics hardware, will find a lot to like about Watch Dogs 2.

The open world looks nice, is entertaining to explore, and provides lots of fun.

Overall, this makes Watch Dogs 2 a solid game, which is a nice change from some of the half-baked releases this year. For our part, we’re just happy to finally be able to write this in a conclusion again.


Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.


amk-aka-Phantom Great and thorough testing, thank you very much. But since you said yourself that High seems to be the sweet spot whereas Very High and Ultra barely look better yet demand a lot more from your hardware, why don't we get 1920x1080/High benchmarks then? I'd love to see how my 970 does. I can sort of deduce that it'll be alright considering it does 35-44 on Very High and VRAM consumption is within its limits, but a confirmation would be nice.

cwolf78 I agree. Please add the High preset benchmark including the 970. This is the setting I'd probably use if I were to purchase it.

Elysian890 Getting 60 w/ texture DLC on High setting preset, 290x Devil 13

stable 60 without DLC on very high to ultra, 1080p, vsync enabled

cknobman It's a rather poorly optimized game TBH.

Digital Foundry had big time issues getting this game to play well at high settings even using a Titan X.

You can also see that this game relies on gfx memory more than anything by the RX 480 with 8GB RAM outpacing more powerful cards with less RAM (like the Fury X).

IceMyth I don't understand one point, how Ultra settings have better FPS then Very high settings?

FormatC

19077036 said: I don't understand one point, how Ultra settings have better FPS then Very high settings?

Take a look at the resolution ;)

19077316 said: Go test under Windows 7, you will get much better result.

Windows 7 is dead. Testing on two different systems just for a few games makes no sense. This review alone was a benchmark session of over 19 working hours. I don't test just one run, but many, depending on the possible tolerance. I write around 8 reviews per month, from CPU, GPU, and workstation to PC audio and some investigative ones.


Windows 7 is not dead, as it performs better in gaming and supports everything. Also, Windows 10 holds only 22% of the Windows market share; the rest is, for the most part, Windows 7. I think it is rather unprofessional not to include Windows 7 in the test, and just to get that bullshit argument out of the way saying Win10 is better for gaming. It would be fair to people to show that it is not what MS claims, as many f. up their systems doing the upgrade to Win10 based on false advertisement.