The Myths Of Graphics Card Performance: Debunked, Part 1

Did you know that Windows 8 can gobble as much as 25% of your graphics memory? That your graphics card slows down as it gets warmer? That you react quicker to PC sounds than images? That overclocking your card may not really work? Prepare to be surprised!

More Graphics Memory Measurements

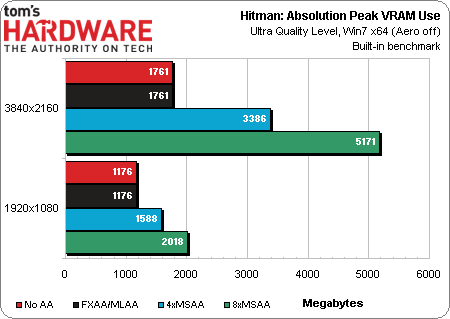

IO Interactive's Glacier 2 engine, which powers Hitman: Absolution, is memory-hungry, second only (in our tests) to Creative Assembly's Warscape engine (Total War: Rome II) when the highest-quality presets are taken into account.

In Hitman: Absolution, a 1 GB card is not sufficient for playing at the game's Ultra Quality level at 1080p. A 2 GB card does allow you to set 4xMSAA at 1080p, or to play without MSAA at 2160p.

To enable 8xMSAA at 1080p you need a 3 GB card, and nothing short of a 6 GB Titan supports 8xMSAA at 2160p.

Once again, enabling FXAA uses no additional memory.
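Why does MSAA cost so much memory while FXAA doesn't? A rough back-of-the-envelope sketch: a multisampled render target stores a color value and a depth/stencil value for every sample, so its size grows with both pixel count and sample count, whereas a post-process filter works on the finished single-sample image. The formats below (4-byte RGBA8 color, 4-byte D24S8 depth/stencil) and the single target pair are assumptions for illustration; real engines keep several targets, may compress them, and spend most of their memory on textures, so this shows the trend rather than the figures in the charts.

```python
# Back-of-the-envelope size of ONE color + depth/stencil render-target pair.
# Assumptions (not measured): RGBA8 color = 4 bytes per sample,
# D24S8 depth/stencil = 4 bytes per sample, no framebuffer compression,
# and no separate resolve target. Real engines allocate several targets.
COLOR_BYTES_PER_SAMPLE = 4
DEPTH_BYTES_PER_SAMPLE = 4
BYTES_PER_MIB = 1024 * 1024


def render_target_mib(width: int, height: int, samples: int) -> float:
    """Size in MiB of a color + depth target pair with `samples` MSAA samples.

    samples=1 means no MSAA. A post-process filter such as FXAA or MLAA
    reads the finished single-sample image, so it does not add this
    per-sample multiplier.
    """
    bytes_per_pixel = (COLOR_BYTES_PER_SAMPLE + DEPTH_BYTES_PER_SAMPLE) * samples
    return width * height * bytes_per_pixel / BYTES_PER_MIB


if __name__ == "__main__":
    resolutions = {"1080p": (1920, 1080), "2160p": (3840, 2160)}
    for label, (w, h) in resolutions.items():
        for samples in (1, 4, 8):
            print(f"{label} {samples}x: {render_target_mib(w, h, samples):7.1f} MiB")
```

Under these assumptions the pair grows from about 16 MiB at 1080p without MSAA to about 506 MiB at 2160p with 8xMSAA, a 32x jump (4x the pixels times 8x the samples). Multiplied across every multisampled target an engine keeps, that helps explain the steep climb in the 8xMSAA, 2160p results.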

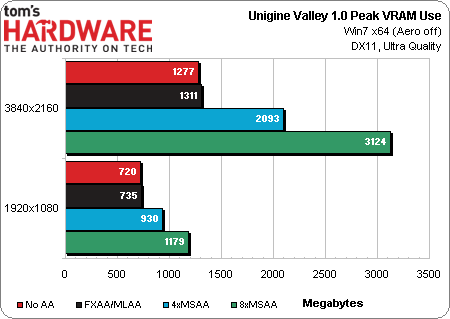

Note: Unigine's latest benchmark, Valley 1.0, does not support MLAA/FXAA directly. Thus, the results you see represent memory usage when MLAA/FXAA is force-enabled in AMD's Catalyst Control Center (CCC) or the Nvidia Control Panel (NVCP).

The data shows that Valley runs fine on a 2 GB card at 1080p (at least as far as memory use goes). You can even use a 1 GB card with 4xMSAA enabled, which is not the case for most games. At 2160p, however, the benchmark only runs properly on a 2 GB card if you leave AA off or use a post-processing method instead. Turning on 4xMSAA hits the 2 GB ceiling.

Ultra HD with 8xMSAA enabled gobbles up over 3 GB of graphics memory, which means this benchmark will only run properly at that preset using Nvidia's GeForce GTX Titan or one of AMD's 4 GB Hawaii-based boards.

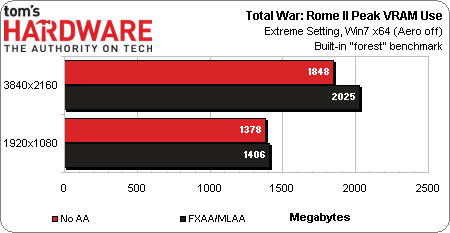

Total War: Rome II uses an updated Warscape engine from Creative Assembly. It doesn't support SLI at the moment (CrossFire does work, however). It also doesn't support any form of MSAA. The only form of anti-aliasing that works is AMD's proprietary MLAA, which is a post-processing technique like SMAA and FXAA.

One notable feature of this engine is its ability to auto-downgrade image quality based on available video memory. That's a good way to keep the game playable with minimal end-user involvement. But a lack of SLI support cripples the title on Nvidia cards at 3840x2160. At least for now, you'll want to play on an AMD board if 4K is your resolution of choice.
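The article doesn't describe how Warscape decides when to lower image quality, so the following is only a hypothetical illustration of the general idea: estimate the memory each preset needs, then pick the best one that fits in the card's memory after leaving some headroom. The preset names, the megabyte estimates, and the `reserve_mb` headroom value are all made up for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Preset:
    name: str
    estimated_mb: int  # hypothetical VRAM estimate at the target resolution


# Hypothetical presets, ordered from highest to lowest quality.
PRESETS = [
    Preset("Extreme", 1850),
    Preset("Ultra", 1500),
    Preset("High", 1100),
    Preset("Medium", 800),
    Preset("Low", 500),
]


def pick_preset(vram_mb: int, reserve_mb: int = 128) -> Preset:
    """Return the highest-quality preset whose estimate fits in VRAM.

    reserve_mb is headroom left free for the OS and other applications
    (an assumed value). Falls back to the lowest preset if nothing fits.
    """
    budget = vram_mb - reserve_mb
    for preset in PRESETS:  # highest quality first
        if preset.estimated_mb <= budget:
            return preset
    return PRESETS[-1]


if __name__ == "__main__":
    for vram in (2048, 1024, 512):
        print(f"{vram} MB card -> {pick_preset(vram).name}")
```

With these invented numbers, a 2 GB card lands on Extreme, a 1 GB card on Medium, and a 512 MB card falls back to Low. A real engine would likely adjust individual settings (texture resolution, shadow map size) rather than whole presets.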

With MLAA disabled, Total War: Rome II’s built-in “forest” benchmark at the Extreme preset uses 1848 MB of graphics memory. The GeForce GTX 690’s 2 GB limit is exceeded with MLAA enabled at 2160p. At 1920x1080, memory use is in the 1400 MB range.

Note the surprising fact that a supposedly AMD-only technology (MLAA) runs on Nvidia hardware. Because both FXAA and MLAA are post-processing techniques, there is no technical reason they can't run on either vendor's hardware. Either Creative Assembly is switching to FXAA behind the scenes (despite what the configuration file says), or AMD's marketing department hasn't picked up on this fact.

You need at least a 2 GB card to play Total War: Rome II at its Extreme quality preset at 1080p, and likely a CrossFire array with 3 GB+ to play smoothly at 2160p. If you only have a 1 GB card, the game might still be playable at 1080p, but you'll have to make some quality compromises.

What happens when graphics memory is completely consumed? The short answer is that graphics data starts getting swapped to system memory over the PCI Express bus. Practically, this means performance slows dramatically, particularly when textures are being loaded. You don't want this to happen. It'll make any game unplayable due to massive stuttering.
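To put "performance slows dramatically" in rough numbers, compare peak theoretical bandwidths: a PCI Express 3.0 x16 link carries about 15.75 GB/s in each direction (8 GT/s per lane, 128b/130b encoding, 16 lanes), while the GeForce GTX Titan's 384-bit GDDR5 at 6,008 MT/s provides about 288 GB/s. The PCIe 3.0 x16 platform and the 256 MB of spilled data are assumptions chosen for illustration.

```python
# Peak theoretical bandwidths; real-world transfers are slower.
# PCIe 3.0: 8 GT/s per lane, 128b/130b encoding, x16 link, one direction.
PCIE3_X16_GB_S = 8e9 * (128 / 130) * 16 / 8 / 1e9  # ~15.75 GB/s
# GeForce GTX Titan: 384-bit GDDR5 at 6,008 MT/s effective.
TITAN_GDDR5_GB_S = 6.008e9 * 384 / 8 / 1e9  # ~288.4 GB/s

FRAME_BUDGET_MS = 1000 / 60  # time available per frame at 60 FPS


def transfer_ms(megabytes: float, gb_per_s: float) -> float:
    """Milliseconds to move `megabytes` (decimal MB) at `gb_per_s` (decimal GB/s)."""
    seconds = (megabytes / 1000) / gb_per_s
    return seconds * 1000


if __name__ == "__main__":
    spill_mb = 256  # hypothetical amount of data that no longer fits in VRAM
    links = (("PCIe 3.0 x16", PCIE3_X16_GB_S), ("Titan GDDR5", TITAN_GDDR5_GB_S))
    for label, bandwidth in links:
        print(f"{label}: {transfer_ms(spill_mb, bandwidth):6.2f} ms "
              f"(frame budget {FRAME_BUDGET_MS:.2f} ms)")
```

Even at the theoretical peak, moving 256 MB across the bus takes about 16 ms, nearly a whole 60 FPS frame, versus under 1 ms from local memory. Real transfers add latency and driver overhead on top, which is why data shuttling back and forth shows up as stuttering.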

So, how much graphics memory do I need?

If you own a 1 GB card and a 1080p display, there's probably no need to upgrade right this very moment. A 2 GB card would let you turn on more demanding AA settings in most games, though, so treat that as the minimum if you're planning a new purchase and want to enjoy the latest titles at 1920x1080.

As you scale up to 1440p, 1600p, 2160p or multi-monitor configurations, start thinking beyond 2 GB if you also want to use MSAA. Three gigabytes becomes a better target (or multiple 3 GB+ cards in SLI/CrossFire).
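The purchasing advice above boils down to a simple rule of thumb, sketched here as a hypothetical helper. It only encodes this page's guidance; outliers such as Hitman: Absolution with 8xMSAA at 1080p (3 GB) exceed it, and it says nothing about whether the GPU itself is fast enough.

```python
def suggested_vram_gb(total_width: int, total_height: int, msaa: bool) -> int:
    """Rule-of-thumb memory target (GB) for a new card, per this page's advice.

    For multi-monitor setups, pass the combined desktop size
    (e.g. three 1080p panels side by side = 5760x1080).
    """
    full_hd_pixels = 1920 * 1080
    if total_width * total_height <= full_hd_pixels:
        return 2  # suggested minimum for a new 1080p purchase
    # Beyond 1080p, wanting MSAA pushes the target past 2 GB.
    return 3 if msaa else 2


if __name__ == "__main__":
    for w, h, aa in ((1920, 1080, True), (2560, 1440, True), (5760, 1080, False)):
        print(f"{w}x{h}, MSAA={aa}: {suggested_vram_gb(w, h, aa)} GB")
```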

Of course, as I mentioned, balance is critical across the board. An underpowered GPU outfitted with 4 GB of GDDR5 memory (rather than 2 GB) isn't automatically going to be playable at high resolutions just because it has the right amount of memory. That's why, when we review graphics cards, we test multiple games, resolutions, and detail settings. A card's bottlenecks have to be fleshed out before smart recommendations can be made.

manwell999: The info on V-Sync causing frame rate halving is out of date by about a decade. With multithreading, the game can work on the next frame while the previous frame is waiting for V-Sync. Just look at BF3: with V-Sync on, you get a continuous range of FPS under 60, not just integer fractions of 60. DirectX doesn't support triple buffering.

hansrotec: With overclocking, are you going to cover water cooling? It would seem disingenuous to dismiss overclocking based on a generation of cards designed to run up to a maximum speed when there is headroom, and not include water cooling, which reduces noise and temperature. My 7970 (pre-GHz Edition) is a whole different card water-cooled vs. air-cooled: 1150 MHz without having to mess with the voltage, with temps in the 50s °C on water and without the fans or pumps ever kicking up, whereas on air it would be in the upper 70s to lower 80s and really loud. On top of that, tweaking memory incorrectly can lower frame rate.

hansrotec: I thought my last comment might have seemed too negative, and I did not mean it in that light. I did enjoy the read, and look forward to more!

noobzilla771: Nice article! I would like to know more about overclocking, specifically the ratio between core clock and memory clock. Does it matter to keep a certain ratio between the two, or can I overclock either as much as I want? Thanks!

chimera201: I can never win against input latency, no matter what hardware I buy, because of my shitty ISP.

immanuel_aj: I'd just like to mention that the dB(A) scale attempts to account for how humans perceive sound. While it is true that 20 dB represents 10 times the sound intensity of 10 dB, because of the way our ears work it seems only about twice as loud. At least, that's the way A-weighting is supposed to work. Apparently there are a few kinks...