Nvidia’s Turing Architecture Explored: Inside the GeForce RTX 2080

Meet TU104 and GeForce RTX 2080

TU104: Turing With Middle Child Syndrome

It’s not that TU104 goes unloved, but we’re not used to seeing three GPUs introduced alongside a new architecture. Still, with GeForce RTX 2080 Ti starting at $1000, the RTX 2080, priced from $700, is going to find its way into more gaming PCs.

Like TU102, TU104 is manufactured on TSMC’s 12nm FinFET node. But a transistor count of 13.6 billion results in a smaller 545 mm² die. “Smaller,” of course, requires a bit of context. Turing Jr. out-measures the last generation’s 471 mm² flagship (GP102) and comes close to the size of GK110 from the 2013-era GeForce GTX Titan.

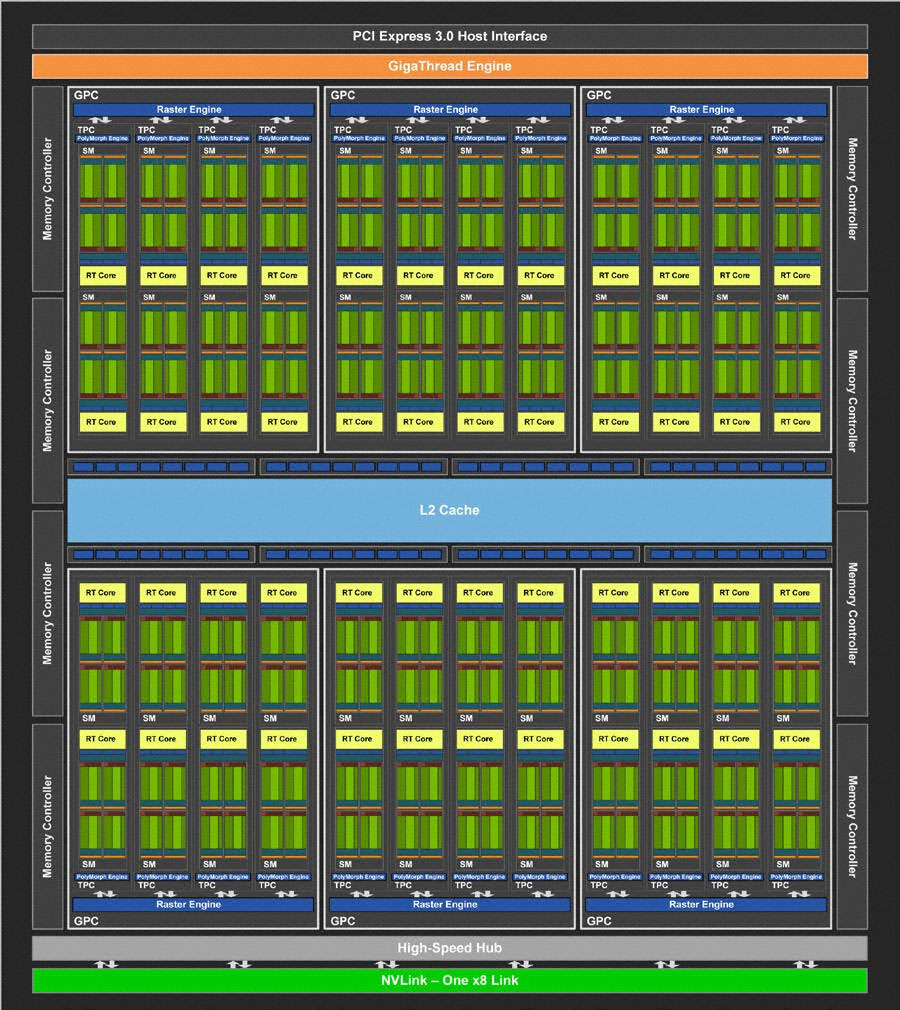

TU104 is constructed with the same building blocks as TU102; it just features fewer of them. Streaming Multiprocessors still sport 64 CUDA cores, eight Tensor cores, one RT core, four texture units, 16 load/store units, 256KB of register space, and 96KB of L1 cache/shared memory. TPCs are still composed of two SMs and a PolyMorph geometry engine. Only here, there are four TPCs per GPC, and six GPCs spread across the processor. Therefore, a fully enabled TU104 wields 48 SMs, 3072 CUDA cores, 384 Tensor cores, 48 RT cores, 192 texture units, and 24 PolyMorph engines.
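The totals quoted above follow directly from multiplying the per-SM figures up through the TPC and GPC hierarchy. The short Python sketch below reproduces that arithmetic; the function and dictionary names are hypothetical and chosen only for illustration.

```python
# Per-SM resources as listed in the paragraph above.
SM_RESOURCES = {
    "cuda_cores": 64,
    "tensor_cores": 8,
    "rt_cores": 1,
    "texture_units": 4,
}

def gpu_totals(gpcs, tpcs_per_gpc, sms_per_tpc=2):
    """Roll per-SM resources up through the TPC/GPC hierarchy."""
    tpcs = gpcs * tpcs_per_gpc
    sms = tpcs * sms_per_tpc
    totals = {name: per_sm * sms for name, per_sm in SM_RESOURCES.items()}
    totals["sms"] = sms
    totals["polymorph_engines"] = tpcs  # one PolyMorph engine per TPC
    return totals

# Fully enabled TU104: six GPCs, four TPCs per GPC, two SMs per TPC.
tu104_full = gpu_totals(gpcs=6, tpcs_per_gpc=4)
for name, count in tu104_full.items():
    print(f"{name}: {count}")
```

With six GPCs of four TPCs each, the sketch yields the same 48 SMs, 3072 CUDA cores, 384 Tensor cores, 48 RT cores, 192 texture units, and 24 PolyMorph engines given in the text.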

A correspondingly narrower back end feeds the compute resources through eight 32-bit GDDR6 memory controllers (256-bit aggregate) attached to 64 ROPs and 4MB of L2 cache.

TU104 also loses one of TU102’s two eight-lane NVLink connections, limiting it to one x8 link and 50 GB/s of bi-directional throughput.

GeForce RTX 2080: TU104 Gets A (Tiny) Haircut

After seeing the GeForce RTX 2080 Ti serve up respectable performance in Battlefield V at 1920x1080 with ray tracing enabled, we can’t help but wonder if GeForce RTX 2080 is fast enough to maintain playable frame rates. Even a complete TU104 GPU is limited to 48 RT cores, compared to the 68 enabled on GeForce RTX 2080 Ti’s TU102. But because Nvidia goes in and turns off one of TU104’s TPCs to create GeForce RTX 2080, another pair of RT cores is lost (along with 128 CUDA cores, eight texture units, 16 Tensor cores, and so on).

In the end, GeForce RTX 2080 struts onto the scene with 46 SMs hosting 2944 CUDA cores, 368 Tensor cores, 46 RT cores, 184 texture units, 64 ROPs, and 4MB of L2 cache. Eight gigabytes of 14 Gb/s GDDR6 on a 256-bit bus move up to 448 GB/s of data, adding more than 100 GB/s of memory bandwidth beyond what GeForce GTX 1080 could do.
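The bandwidth figure can be checked with simple arithmetic: per-pin data rate times bus width, divided by eight bits per byte. The sketch below assumes a 10 Gb/s data rate for GeForce GTX 1080's GDDR5X, which the article does not state directly but which matches the 320 GB/s it lists for that card; the function name is hypothetical.

```python
def memory_bandwidth_gbs(data_rate_gbps, bus_width_bits):
    """Peak memory bandwidth in GB/s: per-pin rate (Gb/s) x bus width / 8."""
    return data_rate_gbps * bus_width_bits / 8

# GeForce RTX 2080: 14 Gb/s GDDR6 on a 256-bit bus (from the text).
rtx_2080 = memory_bandwidth_gbs(14, 256)

# GeForce GTX 1080: 256-bit bus; the 10 Gb/s GDDR5X rate is an assumption
# consistent with the 320 GB/s figure in the spec table.
gtx_1080 = memory_bandwidth_gbs(10, 256)

print(f"RTX 2080: {rtx_2080:.0f} GB/s")
print(f"GTX 1080: {gtx_1080:.0f} GB/s")
print(f"Difference: {rtx_2080 - gtx_1080:.0f} GB/s")
```

Under those assumptions the gap works out to 128 GB/s, in line with the "more than 100 GB/s" claim.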

|  | GeForce RTX 2080 FE | GeForce GTX 1080 FE |
| --- | --- | --- |
| Architecture (GPU) | Turing (TU104) | Pascal (GP104) |
| CUDA Cores | 2944 | 2560 |
| Peak FP32 Compute | 10.6 TFLOPS | 8.9 TFLOPS |
| Tensor Cores | 368 | N/A |
| RT Cores | 46 | N/A |
| Texture Units | 184 | 160 |
| Base Clock Rate | 1515 MHz | 1607 MHz |
| GPU Boost Rate | 1800 MHz | 1733 MHz |
| Memory Capacity | 8GB GDDR6 | 8GB GDDR5X |
| Memory Bus | 256-bit | 256-bit |
| Memory Bandwidth | 448 GB/s | 320 GB/s |
| ROPs | 64 | 64 |
| L2 Cache | 4MB | 2MB |
| TDP | 225W | 180W |
| Transistor Count | 13.6 billion | 7.2 billion |
| Die Size | 545 mm² | 314 mm² |
| SLI Support | Yes (x8 NVLink) | Yes (MIO) |

Reference and Founders Edition RTX 2080s have a 1515 MHz base frequency. Nvidia’s own overclocked models ship with a GPU Boost rating of 1800 MHz, while the reference spec is 1710 MHz. Peak FP32 compute performance of 10.6 TFLOPS puts GeForce RTX 2080 Founders Edition behind GeForce GTX 1080 Ti (11.3 TFLOPS), but well ahead of GeForce GTX 1080 (8.9 TFLOPS). Of course, the faster Founders Edition model also uses more power. Its 225W TDP is 10W higher than the reference GeForce RTX 2080, and a full 45W above last generation’s GeForce GTX 1080.
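The peak FP32 numbers follow from core count and GPU Boost clock. The sketch below assumes the usual convention of two floating-point operations per CUDA core per clock (one fused multiply-add); that factor is not stated in the article, and the function name is hypothetical.

```python
def peak_fp32_tflops(cuda_cores, boost_mhz, flops_per_core_per_clock=2):
    """Peak FP32 rate in TFLOPS, assuming one FMA (2 FLOPs) per core per clock."""
    return cuda_cores * flops_per_core_per_clock * boost_mhz * 1e6 / 1e12

# Core counts and GPU Boost clocks taken from the text and spec table.
rtx_2080_fe = peak_fp32_tflops(2944, 1800)
rtx_2080_ref = peak_fp32_tflops(2944, 1710)
gtx_1080_fe = peak_fp32_tflops(2560, 1733)

print(f"RTX 2080 FE:        {rtx_2080_fe:.1f} TFLOPS")
print(f"RTX 2080 reference: {rtx_2080_ref:.1f} TFLOPS")
print(f"GTX 1080 FE:        {gtx_1080_fe:.1f} TFLOPS")
```

The Founders Edition and GeForce GTX 1080 results round to the 10.6 and 8.9 TFLOPS quoted above. By the same formula, the 1710 MHz reference clock lands at roughly 10.1 TFLOPS.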


siege19 "And although veterans in the hardware field have their own opinions of what real-time ray tracing means to an immersive gaming experience, I’ve been around long enough to know that you cannot recommend hardware based only on promises of what’s to come."

So wait, do I preorder or not? (kidding)

jimmysmitty Well done article Chris. This is why I love you. Details and logical thinking based on the facts we have.

Next up benchmarks. Can't wait to see if the improvements nVidia made come to fruition in performance worthy of the price.

Lutfij Holding out with bated breath about performance metrics.

Pricing seems to be off but the followup review should guide users as to its worth!

Krazie_Ivan i didn't expect the 2070 to be on TU106. as noted in the article, **106 has been a mid-range ($240-ish msrp) chip for a few generations... asking $500-600 for a mid-range GPU is insanity. esp since there's no way it'll have playable fps with RT "on" if the 2080ti struggles to maintain 60. DLSS is promisingly cool, but that's still not worth the MASSIVE cost increases.

jimmysmitty (replying to Krazie_Ivan) It is possible that they are changing their lineup scheme. 106 might have become the low high end card and they might have something lower to replace it. This happens all the time.

Lucky_SLS turing does seem to have the ability to pump up the fps if used right with all its features. I just hope that nvidia really made a card to power up its upcoming 4k 200hz hdr g sync monitors. wow, thats a mouthful!

anthonyinsd ooh man the jedi mind trick Nvidia played on hyperbolic gamers to get rid of their overstock is gonna be EPIC!!! and just based on facts: 12nm gddr6 awesome new voltage regulation and to GAME only processes thats a win in my book. I mean if all you care is about is your rast score, then you should be on the hunt for a titan V, if it doesn't rast its trash lol. been 10 years since econ 101, but if you want to get rid of overstock you dont tell much about the new product till its out; then the people who thought they were smart getting the older product, now want to buy the new one too....

none12345 I see a lot of features that are seemingly designed to save compute resources and output lower image quality. With the promise that those savings will then be applied to increase image quality on the whole.

I'm quite dubious about this. My worry is that some of the areas of computer graphics that need the most love, are going to get even worse. We can only hope that overall image quality goes up at the same frame rate. Rather than frame rate going up, and parts of the image getting worse.

I do not long to return to the day where different graphics cards output different image quality at the same up front graphics settings. This was very annoying in the past. You had some cards that looked faster if you just looked at their fps numbers. But then you looked at the image quality and noticed that one was noticeably worse.

I worry that in the end we might end up in the age of blur. Where we have localized areas of shiny highly detailed objects/effects layered on top of an increasingly blurry background.

CaptainTom I have to admit that since I have a high-refresh (non-Adaptive Sync) monitor, I am eyeing the 2080 Ti. DLSS would be nice if it was free in 1080p (and worked well), and I still don't need to worry about Gstink. But then again I have a sneaking suspicion that AMD is going to respond with 7nm Cards sooner than everyone expects, so we'll see.

P.S. Guys the 650 Ti was a 106 card lol. Now a xx70 is a 106 card. Can't believe the tech press is actually ignoring the fact that Nvidia is relabeling their low-end offering as a xx70, and selling it for $600 (Halo product pricing). I swear Nvidia could get away with murder...

mlee 2500 4nm is no longer considered a "Slight Density Improvement".

Hasn't been for over a decade. It's only lumped in with 16 from a marketing standpoint because it's no longer the flagship lithography (7nm).