Nvidia unveils details of new 88-core Vera CPUs positioned to compete with AMD and Intel – new Vera CPU rack features 256 liquid-cooled chips that deliver up to a 6X gain in CPU throughput

Broadening the data center assault

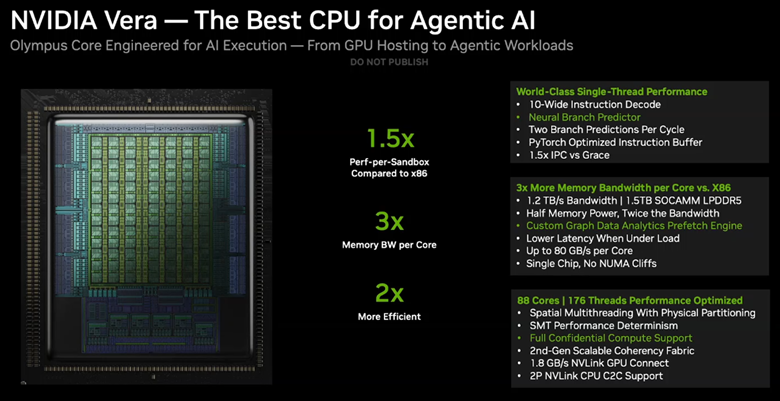

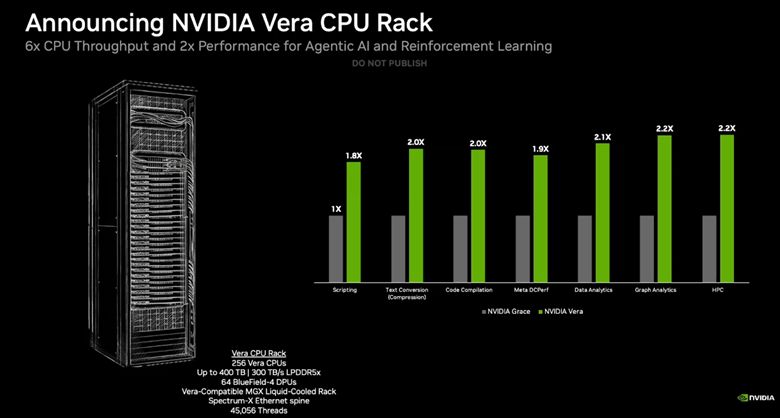

Nvidia announced more details about its new 88-core Vera data center CPUs at GTC 2026 here in San Jose, California, claiming impressive 50% performance gains over standard CPUs, fueled by a 1.5X increase in IPC from its Olympus cores and an innovative high-bandwidth design that Nvidia says delivers the fastest single-threaded performance on the market. The company also unveiled its new Vera CPU Rack architecture, which brings 256 liquid-cooled CPUs into one rack for CPU-centric workloads, claiming a 6X gain in CPU throughput and twice the performance in agentic AI workloads.

The evolution of the Vera CPU and its integration into deployable rack-scale systems marks Nvidia’s entry into direct CPU sales, positioning the company as a competitor to Intel and AMD in the traditional CPU market. That’s not to mention competing against the many flavors of custom Arm processors used by the world’s largest hyperscalers. The move isn’t a complete surprise: it follows the company’s announcement that Meta will deploy multiple generations of Nvidia CPU-only systems across its infrastructure. Nvidia will also continue to use the CPUs for its own GPU-focused systems, such as the Vera Rubin platform we covered more in depth here.

Nvidia originally introduced its first-gen Grace CPUs at GTC in 2022, foreshadowing that its continued evolution of the series would eventually position it to compete with the broader CPU market. The new processors target both AI-centric and more general-purpose use-cases, with a heavy emphasis on the former, and Nvidia’s broadening of both the capabilities and its target markets will provide stiff competition for AMD and Intel as they battle for sockets in AI data centers. The chips are now in full production and will be available to Nvidia’s partners in the second half of this year. Let’s take a closer look at the new chips, and then the rack-scale architecture.

Nvidia Vera CPU specifications and performance

Nvidia designed the Vera CPU to provide the best of many worlds, with the intention of melding the high core counts of hyperscale cloud CPUs with the high single-thread performance of gaming CPUs and the power efficiency of mobile chips, all with the goal of speeding common GPU-driven tasks in agentic AI, training, and inference workloads, such as Python execution, SQL queries, and code compilation.

All told, Nvidia claims 1.5x the performance-per-sandbox over x86 competitors, 3x the memory bandwidth per core, and twice the efficiency. To meet those goals, the company designed an 88-core CPU with 176 threads, an increase over the first-gen Grace’s 72 cores. Nvidia also claims the cores offer a 1.5X improvement in instructions per cycle (IPC) throughput, a massive generational jump relative to other competing architectures, which tend to gain a single-digit or a low-teens percentage increase with each generation. With the previous-gen Grace, Nvidia used off-the-shelf Arm Neoverse cores, but the firm does stipulate that the new Olympus cores found on Vera are ‘Nvidia designed,’ signaling that the company has made custom modifications to the reference design.

The Arm v9.2-A Olympus cores feature spatial multi-threading, which physically isolates the various components of the pipeline by not time-slicing the key elements, like the execution units, caches and register files, with the other thread running on the same core. This contrasts with the standard time-slicing found in other simultaneous multi-threading (SMT) implementations, a process that has the threads take turns utilizing the resources. Spatial Multi-Threading increases Instruction Level Parallelism (ILP), throughput, and performance predictability by pulling instructions from other threads when execution elements are idle, thus ensuring full utilization.

In effect, this allows both threads to truly run simultaneously on a single core, whereas in a standard SMT implementation the threads essentially take turns running on a single core. Naturally, this will be a boon for multi-tenancy environments.

Nvidia arranges all 88 cores in a single domain, so there are no latency-inducing NUMA eccentricities to be found, in stark contrast to current high core-count x86 competitors. This has dramatic implications for latency, predictability, bandwidth, and ease-of-programmability. The firm has not shared the full details of how it accomplished this feat while maintaining adequate latency to each core, but the chip features a new generation of the Nvidia Scalable Coherency Fabric (SCF), a mesh topology built from Arm’s CMN-700 Coherent Mesh Network used in Grace’s Arm Neoverse cores. Arm has moved forward to the newer Neoverse CMN S3 mesh with its latest designs, and Vera likely employs that design, or a variant thereof.

The mesh network can deliver impressive memory throughput to the cores in aggregate, and even more when certain cores are more bandwidth-hungry than others. Grace supported 546 GB/s of memory throughput to the mesh, working out to an average of 7.6 GB/s per core. Vera more than doubles that to 1.2 TB/s of bandwidth fed by 1.5 TB of SOCAMM LPDDR5 modules (a 3x increase in capacity), which works out to an average of 13.6 GB/s per core in full-load conditions. Importantly, the architecture now supports up to 80 GB/s of throughput to any single core when load conditions aren’t consistent across the mesh, an impressive uplift for bandwidth-hungry threads.
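The per-core averages quoted above can be reproduced with simple division. The short Python sketch below is only a back-of-envelope check: it assumes decimal units (1 TB/s = 1000 GB/s) and an even split of bandwidth across all cores, and the variable and function names are illustrative rather than anything Nvidia publishes.

```python
# Back-of-envelope check of the per-core bandwidth figures quoted in the article.
# Assumptions: decimal units (1 TB/s = 1000 GB/s) and an even split across cores.

GRACE_BW_GBPS = 546.0              # Grace memory throughput to the mesh
GRACE_CORES = 72
VERA_BW_GBPS = 1200.0              # Vera: 1.2 TB/s
VERA_CORES = 88
VERA_SINGLE_CORE_PEAK_GBPS = 80.0  # quoted ceiling for a single busy core


def per_core_gbps(total_gbps: float, cores: int) -> float:
    """Average bandwidth per core when every core is equally loaded."""
    return total_gbps / cores


grace_avg = per_core_gbps(GRACE_BW_GBPS, GRACE_CORES)
vera_avg = per_core_gbps(VERA_BW_GBPS, VERA_CORES)
total_uplift = VERA_BW_GBPS / GRACE_BW_GBPS
burst_headroom = VERA_SINGLE_CORE_PEAK_GBPS / vera_avg

print(f"Grace average per core: {grace_avg:.1f} GB/s")
print(f"Vera average per core:  {vera_avg:.1f} GB/s")
print(f"Total bandwidth uplift: {total_uplift:.2f}x")
print(f"Single-core peak vs. Vera average: {burst_headroom:.1f}x")
```

Under these assumptions, the 80 GB/s single-core figure is several times the full-load average, which is the headroom the article refers to for bandwidth-hungry threads.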

The execution pathway includes a 10-wide Instruction Decode unit, a neural branch predictor that supports two branch predictions per cycle, a custom graph database analytics prefetch engine, and a PyTorch-optimized Instruction Buffer.

The chip fully supports Confidential Computing, a notable advance over Grace that allows for fully protected CPU+GPU domains. The CPU also features an NVLink-C2C die-to-die interface with up to 1.8 TB/s of throughput, a doubling of Grace’s 900 GB/s interconnect and seven times faster than PCIe 6.0. It also supports two-processor (2P) configurations.
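The interconnect ratios above can be sanity-checked in a few lines of Python. This is a rough sketch, not Nvidia's methodology: it assumes the PCIe 6.0 comparison point is a x16 link at 64 GT/s per lane, counted in both directions and ignoring encoding overhead, which the article does not spell out.

```python
# Rough check of the NVLink-C2C comparisons quoted in the article.
# Assumption (not stated in the article): the PCIe 6.0 baseline is a x16 link,
# 64 GT/s per lane, summed over both directions, with no encoding overhead.

VERA_C2C_GBPS = 1800.0    # 1.8 TB/s, as quoted
GRACE_C2C_GBPS = 900.0    # as quoted

PCIE6_GT_PER_LANE = 64    # GT/s per lane, per direction
PCIE6_LANES = 16          # assumed link width

# One transfer carries one bit per lane, so divide by 8 to get bytes.
pcie6_per_direction_gbps = PCIE6_GT_PER_LANE * PCIE6_LANES / 8
pcie6_bidirectional_gbps = 2 * pcie6_per_direction_gbps

vs_grace = VERA_C2C_GBPS / GRACE_C2C_GBPS
vs_pcie6 = VERA_C2C_GBPS / pcie6_bidirectional_gbps

print(f"PCIe 6.0 x16, both directions: {pcie6_bidirectional_gbps:.0f} GB/s")
print(f"Vera C2C vs. Grace C2C: {vs_grace:.1f}x")
print(f"Vera C2C vs. PCIe 6.0 x16: {vs_pcie6:.2f}x")
```

With that baseline the ratio lands very close to seven, which suggests the quoted figures are bidirectional on both sides of the comparison.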

Overall, Vera supports the full suite of technologies expected from a modern data center processor, including PCIe 6.0 and CXL 3.1 support, but with a bandwidth- and latency-focused compute design that positions it uniquely well for use in AI workflows.

The Vera CPU Rack and Benchmark Performance

Grace has already served as a fundamental building block in many Nvidia GPU+CPU systems, including some of the fastest AI supercomputers on the planet, but Nvidia’s expanded goal is to leverage Vera in pure-play CPU racks that can be more widely deployed.

The Vera CPU rack meets that goal with 256 liquid-cooled Vera CPUs paired with 74 BlueField-4 DPUs and ConnectX SuperNIC networking. The rack weighs in with up to 400 TB of LPDDR5 and 300 TB/s of aggregate memory throughput. That feeds the 45,056 threads, which Nvidia says support 22,500 concurrent CPU environments running independently.
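The rack-level totals follow from the per-CPU figures given earlier in the article. The Python sketch below multiplies them out; it assumes every CPU is fully populated with 1.5 TB of memory and delivers the full 1.2 TB/s, so the "up to 400 TB" and "300 TB/s" rack figures appear to be rounded versions of these products. The names are illustrative.

```python
# Multiply the per-CPU figures from the article up to a 256-CPU rack.
# Assumption: every CPU is fully populated (1.5 TB, 1.2 TB/s, 176 threads).

CPUS_PER_RACK = 256
THREADS_PER_CPU = 176
MEMORY_TB_PER_CPU = 1.5
BANDWIDTH_TBPS_PER_CPU = 1.2
CONCURRENT_ENVIRONMENTS = 22_500   # Nvidia's quoted figure

rack_threads = CPUS_PER_RACK * THREADS_PER_CPU
rack_memory_tb = CPUS_PER_RACK * MEMORY_TB_PER_CPU
rack_bandwidth_tbps = CPUS_PER_RACK * BANDWIDTH_TBPS_PER_CPU
threads_per_environment = rack_threads / CONCURRENT_ENVIRONMENTS

print(f"Threads per rack:        {rack_threads:,}")
print(f"Memory per rack:         {rack_memory_tb:.0f} TB")
print(f"Bandwidth per rack:      {rack_bandwidth_tbps:.1f} TB/s")
print(f"Threads per environment: {threads_per_environment:.2f}")
```

The thread count matches the quoted 45,056 exactly, and the environment count works out to roughly two threads, or one physical core, per environment.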

Nvidia shared benchmarks in a wide range of workloads, touting a 1.8x to 2.2x performance improvement over Grace in scripting, compilation, data analytics, graph analytics, and HPC workloads, among others.
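As a rough plausibility check, the claimed range can be compared against a naive estimate built from the core count and IPC figures quoted earlier. The Python sketch below assumes equal clock speeds and perfect scaling with core count, neither of which the article states, so treat it as an illustration rather than an explanation of Nvidia's benchmark results.

```python
# Naive throughput estimate for Vera vs. Grace from figures in the article.
# Assumptions (not from the article): equal clocks and perfect multi-core scaling.

GRACE_CORES = 72
VERA_CORES = 88
IPC_GAIN = 1.5                            # Nvidia's claimed IPC improvement

CLAIMED_LOW, CLAIMED_HIGH = 1.8, 2.2      # Nvidia's quoted range over Grace

core_gain = VERA_CORES / GRACE_CORES
naive_speedup = IPC_GAIN * core_gain

print(f"Core count gain: {core_gain:.2f}x")
print(f"Naive speedup:   {naive_speedup:.2f}x")
print(f"Within claimed range: {CLAIMED_LOW <= naive_speedup <= CLAIMED_HIGH}")
```

The estimate falls at the low end of the claimed range, so any results above it would have to come from factors this simple model ignores, such as memory bandwidth or clock speed.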

Naturally, one would expect this system to be deployed at Meta, which recently announced its partnership with Nvidia for CPU-only systems, but Nvidia says it will also offer the Vera CPU rack system to hyperscalers, including Oracle, CoreWeave, Nebius, Alibaba, and others.

A broad range of OEMs and ODMs will also provide single- and dual-socket servers for the broader market for a wide range of use cases, including industry heavyweights like Dell, HPE, Lenovo, Supermicro, Foxconn, and many others. The Vera CPUs will also be used for Nvidia HGX NVL8 systems.

Perhaps most importantly, these racks will also serve as an integral part of Nvidia’s broader Vera Rubin platform, which features seven chips in total, including the Rubin GPU, NVLink6 Switch for rack-scale interconnect, ConnectX-9 SuperNIC for networking, BlueField-4 DPU, Spectrum-X 102.4T Co-packaged Optics switch, and Nvidia’s Groq 3 LPUs.

The Vera CPUs are in full production now and are slated for deliveries beginning in the second half of this year.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

-

thestryker

It will be interesting to see what sort of impact these make on the market as they seem quite good on paper unless you need super high core counts. I didn't see anything regarding the amount of PCIe lanes available and it'll be interesting to see what the core latencies end up looking like.

Just to put the memory bandwidth per core into some context:

The highest x86 available today in that general core count is Intel's 72 and 96 core Xeon 6 using 12 channel MRDIMMs. This equates to ~11.7 GB/s and 8.8 GB/s per core respectively. -

Notton

"To meet those goals, the company designed an 88-core CPU with 144 threads, an increase over the first-gen Grace’s 72 cores."

The slide says 88 cores and 176 threads.

Also the die shot on the slide, if it's the actual product, looks like it's configured as 13x7 = 91. I'm guessing they disable the cores that don't work? -

bit_user

The article said:

> the firm does stipulate that the new Olympus cores found on Vera are ‘Nvidia designed,’ signaling that the company has made custom modifications to the reference design.

What??? No, it does not mean that! There's been every indication these are fully custom cores, and not Nvidia's first!

https://en.wikipedia.org/wiki/Project_Denver https://www.uio.no/studier/emner/matnat/ifi/IN5050/v25/slides/denver_carmel_uarch.pdf

The article said:

> The Arm v9.2-A Olympus cores feature spatial multi-threading, which physically isolates the various components of the pipeline by not time-slicing the key elements, like the execution units, caches and register files, with the other thread running on the same core.

This almost sounds like they're trying to spin a weakness into a strength. The reason other CPUs have things like low watermarks to constrain how much one thread can use of a competitively-shared resource is so that the other thread doesn't get starved out. On the whole, that's better for system throughput, even if it means shaving off a little bit of one thread's performance, every now and then.

The article said:

> Spatial Multi-Threading increases Instruction Level Parallelism (ILP), throughput, and performance predictability by pulling instructions from other threads when execution elements are idle, thus ensuring full utilization.

Zen 5 (not sure about earlier ones) does have a specific operational mode for providing exclusive access to a single thread. This mode gets entered and exited dynamically, at runtime, based on how many threads are scheduled on a given core, at a given point in time. This gives the OS thread scheduler the ability to boost select threads by prioritizing them for exclusive use of a core.

The article said:

> in a standard SMT implementation the threads essentially take turns running on a single core.

They do not. The best info on how Zen 5 partitions resources between SMT threads is probably here:

https://chipsandcheese.com/p/a-video-interview-with-mike-clark-chief-architect-of-zen-at-amd

Only very simple SMT implementations, like some GPUs, will do a true round-robin between unblocked threads.

The article said:

> Nvidia arranges all 88 cores in a single domain, so there are no latency-inducing NUMA eccentricities to be found, in stark contrast to current high core-count x86 competitors. This has dramatic implications for latency, predictability, bandwidth, and ease-of-programmability.

That's an exaggeration. Modern x86 server CPUs show very few NUMA effects, until you get to multi-socket. At that point, Nvidia's Rubin will be suffering as well.

https://chipsandcheese.com/p/evaluating-uniform-memory-access

The article said:

> NVLink-C2C die-to-die interface with up to 1.8 TB/s of throughput, a doubling of Grace’s 900 GB/s interconnect and seven times faster than PCIe 6.0. It also supports two-processor (2P) configurations.

Worth noting that those figures include both directions. At least the PCIe 6.0 figure was computed the same way.

The NVLink-C2C interface supports 2P configurations, but you can scale up to way more CPUs over regular NVLink.

The article said:

> That feeds the 45,056 threads, which Nvidia says supports 22,500 concurrent CPU environments running independently.

Oh, that's interesting. Makes me wonder if both SMT threads per CPU must be in the same Confidential Computing domain. -

bit_user

thestryker said:

> Just to put the memory bandwidth per core into some context:
>
> The highest x86 available today in that general core count is Intel's 72 and 96 core Xeon 6 using 12 channel MRDIMMs. This equates to ~11.7 GB/s and 8.8 GB/s per core respectively.

Well, Intel's current server platform seems more focused on scaling up to 128 P-cores and feeding x96 PCIe 5.0 lanes.

https://www.intel.com/content/www/us/en/products/sku/240777/intel-xeon-6980p-processor-504m-cache-2-00-ghz/specifications.html

When Nvidia compared them, they probably used the 128-core 6980P to get their figure of "3x memory bandwidth per core". Nvidia will always find the most flattering point of comparison.

If the per-CPU cost of deploying Rubin is much lower, then you don't need as much performance per-CPU, because you can just scale up to more of them. NVLink supports that, via better scalability. -

thestryker

bit_user said:

> When Nvidia compared them, they probably used the 128-core 6980P to get their figure of "3x memory bandwidth per core". Nvidia will always find the most flattering point of comparison.

That still doesn't math out because even comparing RDIMMs instead of MRDIMMs it's 4.8 GB/s, but I wouldn't put it past them to round up for marketing materials. -

abufrejoval

bit_user said:

> This almost sounds like they're trying to spin a weakness into a strength. The reason other CPUs have things like low watermarks to constrain how much one thread can use of a competitively-shared resource is so that the other thread doesn't get starved out. On the whole, that's better for system throughput, even if it means shaving off a little bit of one thread's performance, every now and then.

These are CUDA CPUs! CUDA is all about marshalling large number of cores to work in concert without stepping on each other or getting out of line: my mental image is that of a crowd of people in a rice paddy, seeding or weeding or harvesting, where if someone needs to grab a bite or take a leak and that requires stepping out of line, that brings down the efficiency of the entire crowd, so they need to ensure that doesn't happen.

"classic" round-robin SMT takes advantage of the fact that threads aren't unison, but do very different things on very different parts of memory, mix FP and logic, because they are there to take advantage of pipeline and memory stalls to keep otherwise unused ALUs busy, especially on bigger designs like IBM's Power. But that's not the type of workload that's running on Vera: Vera is running the meta layers of CUDA, (was it called Dynamo?), which is still about marshalling, except that it's marshalling GPUs (or racks of them) not GPU cores.

Heterogeneosity (apart from mixing experts) is wholly undesired within scale-out workloads, optimizing the scale-up part on the other hand is what they wanted to support better.

IMHO Vera isn't hubris or thinking they could do the better generic CPU core. It's really mostly about general purpose CPUs not really fitting their needs, mostly in terms of I/O, but down to how CPU cores themselves make optimal use of their transistor budgets to support the workers on the rice paddy. -

bit_user

abufrejoval said:

> These are CUDA CPUs!

No. CUDA has a very specific meaning, in that it refers to an API and hardware which is capable of being utilized via that API.

abufrejoval said:

> CUDA is all about marshalling large number of cores to work in concert without stepping on each other or getting out of line:

Not everything with a lot of cores supports CUDA. Compared to a GPU, they would not be very efficiently utilized via CUDA, if it did have a CPU backend which ran atop them.

abufrejoval said:

> my mental image is that of a crowd of people in a rice paddy, seeding or weeding or harvesting, where if someone needs to grab a bite or take a leak and that requires stepping out of line, that brings down the efficiency of the entire crowd, so they need to ensure that doesn't happen.

For several generations, Nvidia has been working to make SIMD more efficient. You might be interested in reading about some of those efforts.

https://chipsandcheese.com/p/shader-execution-reordering-nvidia-tackles-divergence

abufrejoval said:

> that's not the type of workload that's running on Vera: Vera is running the meta layers of CUDA, (was it called Dynamo?), which is still about marshalling, except that it's marshalling GPUs (or racks of them) not GPU cores.

What do you mean by "the metal layers of CUDA"?

In GPU contexts, these CPUs exist for implementing processing that's inefficient to run on GPUs (i.e. don't map well to CUDA). They're complementary to GPUs! They also do control & management plane operations + I/O and host memory pools that can be distributed throughout the NVLink fabric.

In CPU contexts, like what Meta is using them for, they're for running generic cloud workloads.

abufrejoval said:

> Heterogeneosity (apart from mixing experts) is wholly undesired within scale-out workloads, optimizing the scale-up part on the other hand is what they wanted to support better.

If all they wanted was more GPUs, they'd have stuck to making GPUs and would have homogeneous compute fabrics.

abufrejoval said:

> IMHO Vera isn't hubris or thinking they could do the better generic CPU core.

It is, because it's ARM ISA. ARM does not let customers implement their own nonstandard extensions. The only sort-of exceptions to that were where Apple made extensions which ARM later added to the ISA (or maybe it was a concurrent thing).

The only reasons to implement it themselves are because they think they can better tailor it to their workloads and/or save money on die & licensing costs. -

JarredWaltonGPU

I know it's not going to happen any time soon, but I can't help but wonder what the potential performance of a 32-core/64-thread variant tailored to the consumer market might be -- or even a 16-core/32-thread version. Obviously, getting enough memory and bandwidth is a consideration, but get something like that up and running Windows and Linux, and maybe we could have a serious x86 competitor for Steam Decks and the like.

On a related note, I can't imagine a world where the MediaTek partnership with Nvidia results in CPUs that are anywhere near as competitive as Vera is supposed to be in the data center space. Hopefully, MediaTek and Nvidia prove me wrong and deliver something interesting and fast. LOL -

abufrejoval

bit_user said:

> No. CUDA has a very specific meaning, in that it refers to an API and hardware which is capable of being utilized via that API.
>
> Not everything with a lot of cores supports CUDA. Compared to a GPU, they would not be very efficiently utilized via CUDA, if it did have a CPU backend which ran atop them.
>
> What do you mean by "the metal layers of CUDA"?

Meta not metal: the nested levels of scale-up and scale-out orchestration around CUDA kernels.

Nvidia is scaling ML workloads to the data center level, not just a few GPUs but dozens of rows with dozens of racks with dozens of rows with servers that run Vera servers with Rubin GPUs, now also including Groq variants with a mix of Nvlink and Bluefield fabrics: these aren't general purpose workloads, but CUDA workloads running on Rubins with Veras for orchestration.

bit_user said:

> In GPU contexts, these CPUs exist for implementing processing that's inefficient to run on GPUs (i.e. don't map well to CUDA). They're complementary to GPUs! They also do control & management plane operations + I/O and host memory pools that can be distributed throughout the NVLink fabric.

Exactly.

And the point is that these cloud orchestration workloads aren't really general purpose, they are very I/O heavy and latency sensitive so using a general purpose AMD or Intel CPU would a) have them pay for features they don't need, b) slow them down asking features they do want. That's why they did their own.

bit_user said:

> It is, because it's ARM ISA. ARM does not let customers implement their own nonstandard extensions. The only sort-of exceptions to that were where Apple made extensions which ARM later added to the ISA (or maybe it was a concurrent thing).

Most likely Nvidia expected to own ARM by the time they decided to go in this direction. And if you have enough money, restrictions go away.

bit_user said:

> The only reasons to implement it themselves are because they think they can better tailor it to their workloads and/or save money on die & licensing costs.

That's what I'm saying, too.

At a certain scale, every workload benefits from a bespoke design, especially under energy pressure. So far Nvidia had been extraordinarily generic with their GPUs, most other AI startups had done several generations of ASICs, only to fail because they couldn't gain critical mass and offer a long-term roadmap.

But now Nvidia is bending and going ASIC, pretty much validating Cerebras' approach for inference, but most importantly fighting latencies to enable scale out. -

abufrejoval

JarredWaltonGPU said:

> I know it's not going to happen any time soon, but I can't help but wonder what the potential performance of a 32-core/64-thread variant tailored to the consumer market might be -- or even a 16-core/32-thread version. Obviously, getting enough memory and bandwidth is a consideration, but get something like that up and running Windows and Linux, and maybe we could have a serious x86 competitor for Steam Decks and the like.

They really aren't interested in the consumer market these days, because that's not where the money is.

Vera is all about moving huge amounts of data quickly where it's needed, there may be very little customization in terms of the cores, much more in the RAM and I/O paths.

JarredWaltonGPU said:

> On a related note, I can't imagine a world where the MediaTek partnership with Nvidia results in CPUs that are anywhere near as competitive as Vera is supposed to be in the data center space. Hopefully, MediaTek and Nvidia prove me wrong and deliver something interesting and fast. LOL

I can't see Nvidia wanting to sell a generic data center CPU, that's not a money maker any more.

For them Vera is just a tool to run AI workloads better and more efficiently. CPUs are obviously needed for the orchestration, but going to AMD or Intel to get that critical part done right, only allows those two to slow them down and tax them on their AI earnings. They are onboarding the CPUs pretty much like Intel onboarded chipsets and NICs to keep as much of the value stack within their realm and control.

As for MediaTek, I concur: Nvidia wouldn't want to replace a dependency on AMD/Intel with another for the money makers in the data center.

MediaTek was and might still be ok for consumer focused devices, once that market becomes relevant enough again, because it gives them time-to-market speed in that arena.