The Next Big Feature For Intel CPUs is Cognitive Recognition

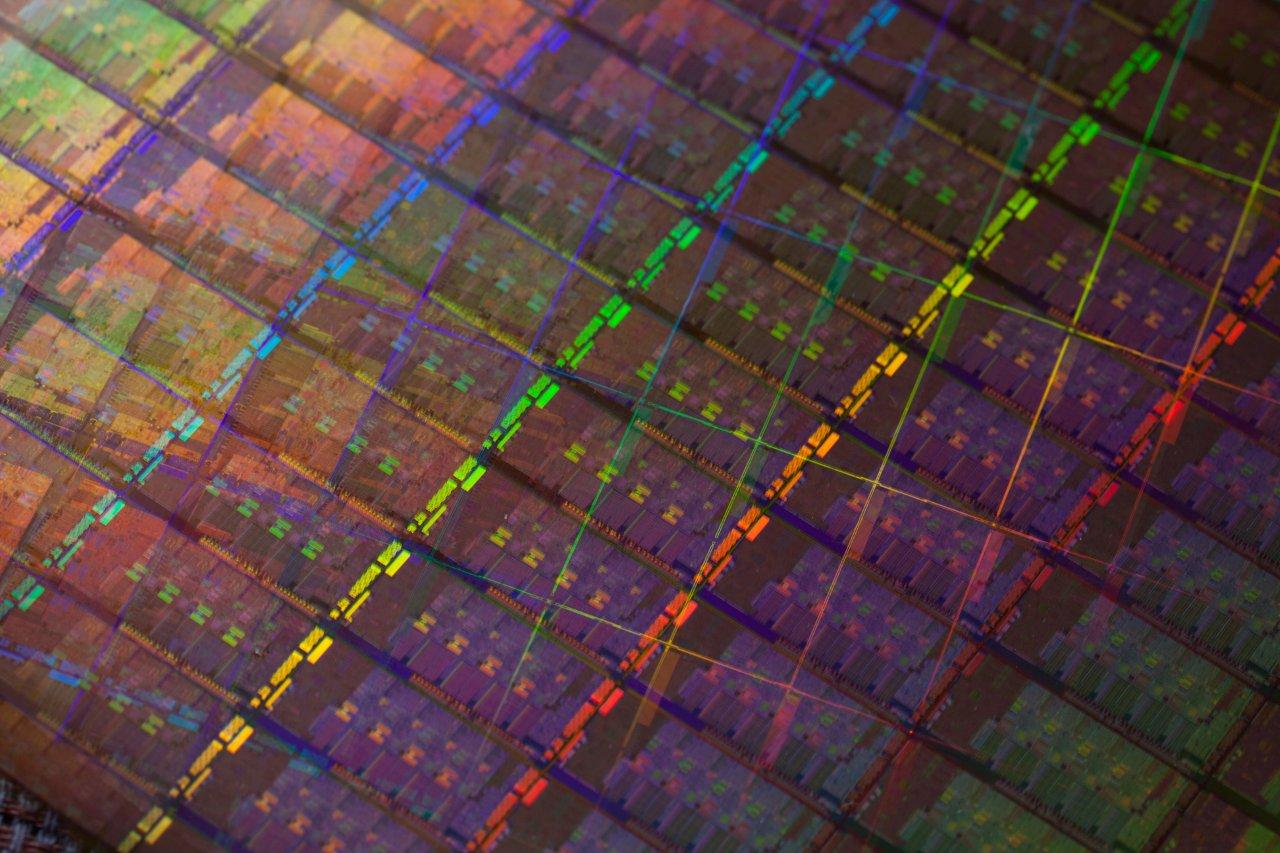

As transistor counts grow, the CPU will do more and understand what you want and need.

In an interesting interview with Intel's Paul Otellini over at PC World, which does not unveil any big secrets, he outlines Intel's view of ARM and its idea of the future of computing, as well as his thoughts on what could be done with the rapidly increasing transistor count of processors.

Of course, as CPU dies shrink, we have come to expect lower power consumption and greater overall performance, which together result in better efficiency. That has been the case since the departure from NetBurst in 2006, but we have also seen GPU features integrated on the die, and there is even more transistor budget in the future to add new capabilities.

The feature Otellini stressed was cognitive recognition, a capability that would let the CPU work out, in the context of all available data and your history of using the computer, what your current needs and wants may be, and figure them out before you do. It is not a new idea: we first heard about it at IDF Fall 2005, where Intel explained the concept of user-aware computing in its future technology keynote. Back then, the technology was forecast to become available within five to ten years, and it is worth noting that the topic is coming up again, even if under a different name.

The executive also mentioned that he does not believe that the smart phone or tablet can be the centerpiece of computing and that PCs will remain alive and continue to evolve.

Wolfgang Gruener is an experienced professional in digital strategy and content, specializing in web strategy, content architecture, user experience, and applying AI in content operations within the insurtech industry. His previous roles include Director, Digital Strategy and Content Experience at American Eagle, Managing Editor at TG Daily, and contributing to publications like Tom's Guide and Tom's Hardware.

-

Prescott_666: Why would you build this function into silicon? This is the kind of feature that should be implemented in software.

I don't see the point.

-

master_chen (quoting Prescott_666): "Why would you build this function into silicon? This is the kind of feature that should be implemented in software. I don't see the point."

Processors and chips have instruction sets and firmware, which are basically a kind of software too (just built in).

-

beayn (quoting the article): "The executive also mentioned that he does not believe that the smart phone or tablet can be the centerpiece of computing and that PCs will remain alive and continue to evolve."

While I hate to admit it, I think he's wrong here. Far too many people have allowed the smartphone to be their centerpiece of computing and their lives. It won't ever be that way for me, and people will still have PCs and such, but they are living off their phones today. That will only get worse.

-

pat (quoting Prescott_666): "Why would you build this function into silicon? This is the kind of feature that should be implemented in software. I don't see the point."

Correction..

Why would you build this function into silicon? This is the kind of feature that should be implemented in HUMAN. I don't see the point.

I hope I'll never need a machine to tell me what to do next..

-

nightbird321: I see this more as a power saver: the CPU can tell what processing capabilities the current user tasks need and power down everything else. No more music streaming keeping all the cores and caches powered up.

-

doron (quoting beayn): "'The executive also mentioned that he does not believe that the smart phone or tablet can be the centerpiece of computing and that PCs will remain alive and continue to evolve.' While I hate to admit it, I think he's wrong here. Far too many people have allowed the smartphone to be their centerpiece of computing and their lives. It won't ever be that way for me, and people will still have PCs and such, but they are living off their phones today. That will only get worse."

What I think he meant is that most of the more advanced computing will not be processed on smartphones. Even if smartphones become the "centerpiece of computing" for most people and PCs eventually die, most of the number-crunching will be done on servers and transferred to your device of choice. This will become more apparent as internet bandwidth and speed grow, but you can already see services offering advanced capabilities this way, such as Dropbox and OnLive.

-

freggo (quoting master_chen): "OPEN THE BAY DOORS, HAAAL!!!!"

I'm sorry, master_chen. I'm afraid I can't do that.

-

freggo (quoting pat): "Correction.. Why would you build this function into silicon? This is the kind of feature that should be implemented in HUMAN. I don't see the point. I hope I'll never need a machine to tell me what to do next.."

...now hit "submit" to continue or F1 if you need help :-)

-

A Bad Day (quoting freggo): "...now hit 'submit' to continue or F1 if you need help :-)"

But your keyboard is not recognized by the computer. :-)