Nvidia GeForce GTX 465 1 GB Review: Zotac Puts Fermi On A Diet

We've already crowned Nvidia's GeForce GTX 480 the fastest (and most power-hungry) single-GPU card we've ever seen. Now the company is launching its GeForce GTX 465, based on the same massive GF100 GPU. Can such a complex part compete with AMD's value?

Power Consumption And Temperature

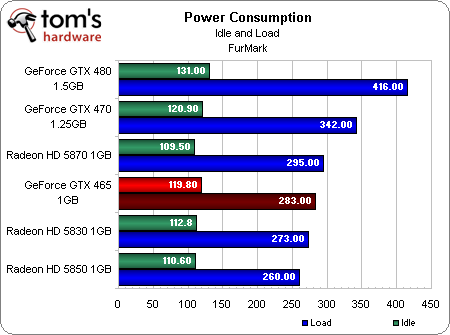

Power consumption is most definitely an issue to consider when it comes to the GeForce GTX 480: 416W of total system draw under load is significant compared to the Radeon HD 5870’s 295W. The GeForce GTX 470 isn’t in as bad shape, though it is worth pointing out that it is both slower and more power-hungry than the 5870.

Once you get down to the GeForce GTX 465, though, total power use is more reasonable. 283W is a big number, but the Radeon HD 5830 it competes against draws only 10W less. As far as we're concerned, power consumption is a non-issue on this card. We're satisfied with its draw, the temperatures it generates under load, and the minimal noise it creates in the process.

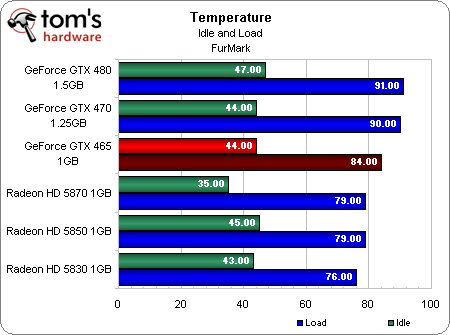

What about heat? The GeForce GTX 465 idles at about the same temperature as all of the other cards. The exception is the Radeon HD 5870, which idled at a noticeably lower temperature on our sample.

Under load, there’s no doubt it gets warmer than even AMD’s fastest single-GPU card. But it’s a fair bit cooler than the flagship GeForce GTX 480. Even more notably, the GTX 465 needs far less fan speed to maintain its temperature, while the GTX 480 must blow much harder just to stay around 91 degrees Celsius.

Of course, we addressed the resulting noise of these cards’ fans in our GeForce GTX 480 Update: 3-Way SLI, 3D Vision, And Noise.



