Nvidia Is Building Its Own AI Supercomputer

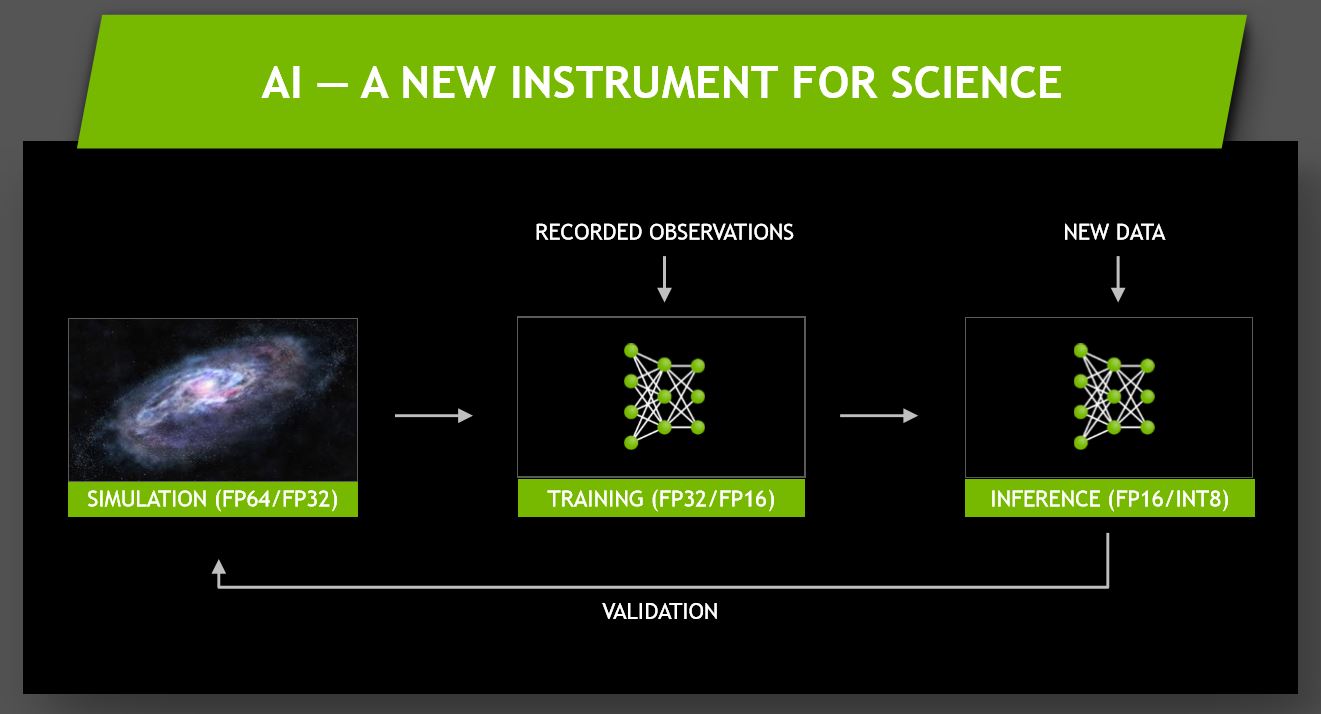

AI has begun to take over data center and HPC applications, as a trip through the recent Hot Chips and ongoing Supercomputing 2017 trade shows quickly affirms.

Nvidia has been one of the companies at the forefront of AI development, and its heavy investments in hardware, and more importantly the decade-long development of CUDA, have paid off tremendously. In fact, the company announced that 34 new Nvidia GPU-accelerated supercomputers were added to the TOP500 list of supercomputers, bringing its total to 87. The company also powers 14 of the top 20 most energy-efficient supercomputers on the Green500 list.

Jensen Huang, Nvidia CEO, took to the stage at Supercomputing 2017 to announce that the company is also developing its own AI supercomputer for its autonomous driving program. Nvidia projects its new supercomputer will land in the top 10 list of worldwide AI supercomputers, which we'll cover shortly.

New threats loom in the form of Intel's recent announcement that it's forming a new Core and Visual Computing business group, headed by Raja Koduri of AMD fame, to develop not only a discrete GPU but also a differentiated graphics stack that scales from the edge to the data center. AMD is also enjoying significant uptake of its EPYC platform paired with its competitive Radeon Instinct GPUs, a big theme here at the show, while a host of FPGA and ASIC vendors are also vying for a slice of the exploding AI segment.

The show floor is packed with Volta V100 demonstrations from multiple vendors, as we'll get to in the coming days, and Nvidia also outlined its progress on multiple AI fronts.

Nvidia's SaturnV AI Supercomputer

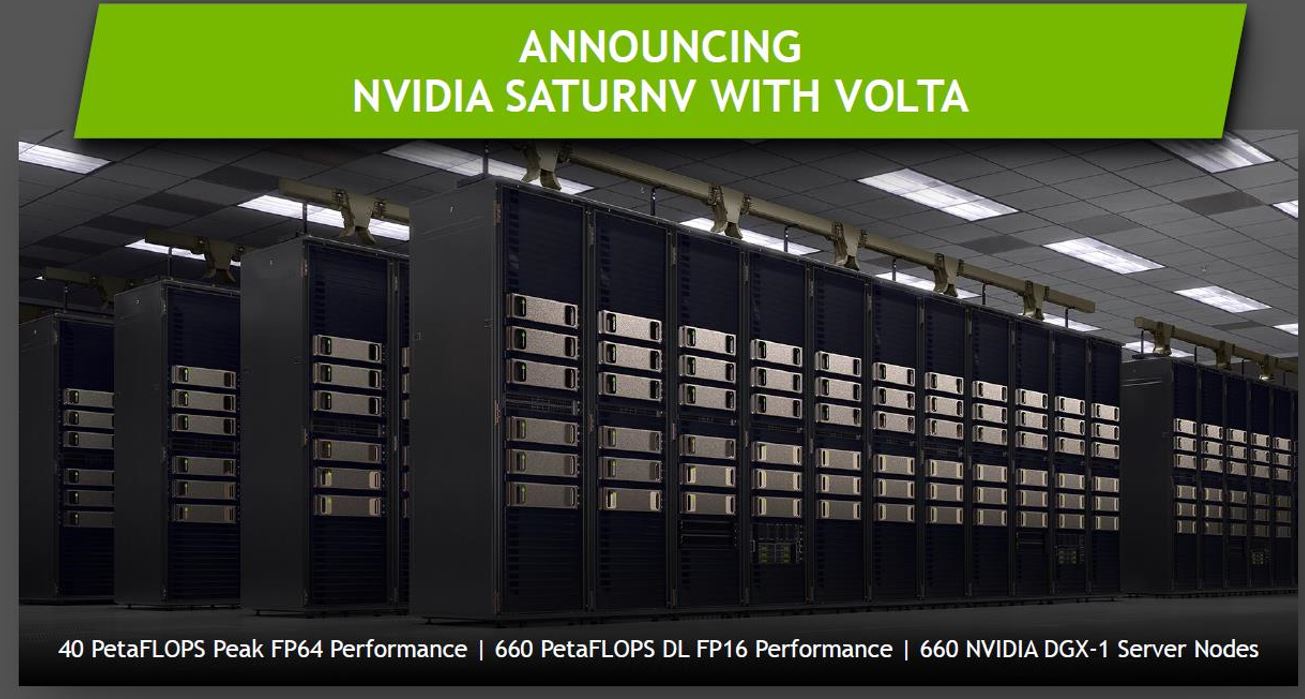

Nvidia also announced that it's upgrading its own supercomputer, the SaturnV, with 660 Nvidia DGX-1 nodes spread over five rows. Each node houses eight Tesla V100s for a total of 5,280 GPUs. That delivers up to 660 PetaFLOPS of FP16 performance (nearly an ExaFLOPS) and a peak of 40 PetaFLOPS of FP64. The company plans to use SaturnV for its own autonomous vehicle development programs.
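As a rough sanity check, the SaturnV totals can be reproduced with simple arithmetic. The node and GPU counts below come from the announcement; the per-GPU peak figures (125 TFLOPS for mixed-precision Tensor Core math and 7.8 TFLOPS for FP64) are assumptions taken from Nvidia's published Tesla V100 SXM2 specifications rather than from this article, so treat the sketch as an estimate of theoretical peaks, not measured performance.

```python
# Back-of-the-envelope estimate of SaturnV's theoretical peak throughput.

NODES = 660          # DGX-1 nodes (from the announcement)
GPUS_PER_NODE = 8    # Tesla V100s per DGX-1 node (from the announcement)

# Assumed per-GPU peaks (Nvidia's published V100 SXM2 specs, not this article).
TENSOR_TFLOPS_PER_GPU = 125.0  # mixed-precision FP16 Tensor Core peak
FP64_TFLOPS_PER_GPU = 7.8      # double-precision peak


def saturnv_estimate():
    """Return GPU count and aggregate peak figures in PetaFLOPS."""
    gpus = NODES * GPUS_PER_NODE
    fp16_pflops = gpus * TENSOR_TFLOPS_PER_GPU / 1000  # TFLOPS -> PFLOPS
    fp64_pflops = gpus * FP64_TFLOPS_PER_GPU / 1000
    return {
        "gpus": gpus,
        "fp16_pflops": fp16_pflops,
        "fp64_pflops": fp64_pflops,
        # 1 ExaFLOPS = 1,000 PetaFLOPS
        "fp16_fraction_of_exaflops": fp16_pflops / 1000,
    }


if __name__ == "__main__":
    for name, value in saturnv_estimate().items():
        print(f"{name}: {value:,.3f}")
```

Under these assumptions the totals come to 5,280 GPUs, 660 PetaFLOPS of FP16 Tensor Core throughput (about two-thirds of an ExaFLOPS), and roughly 41 PetaFLOPS of FP64, which lines up with the figures Nvidia quoted.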

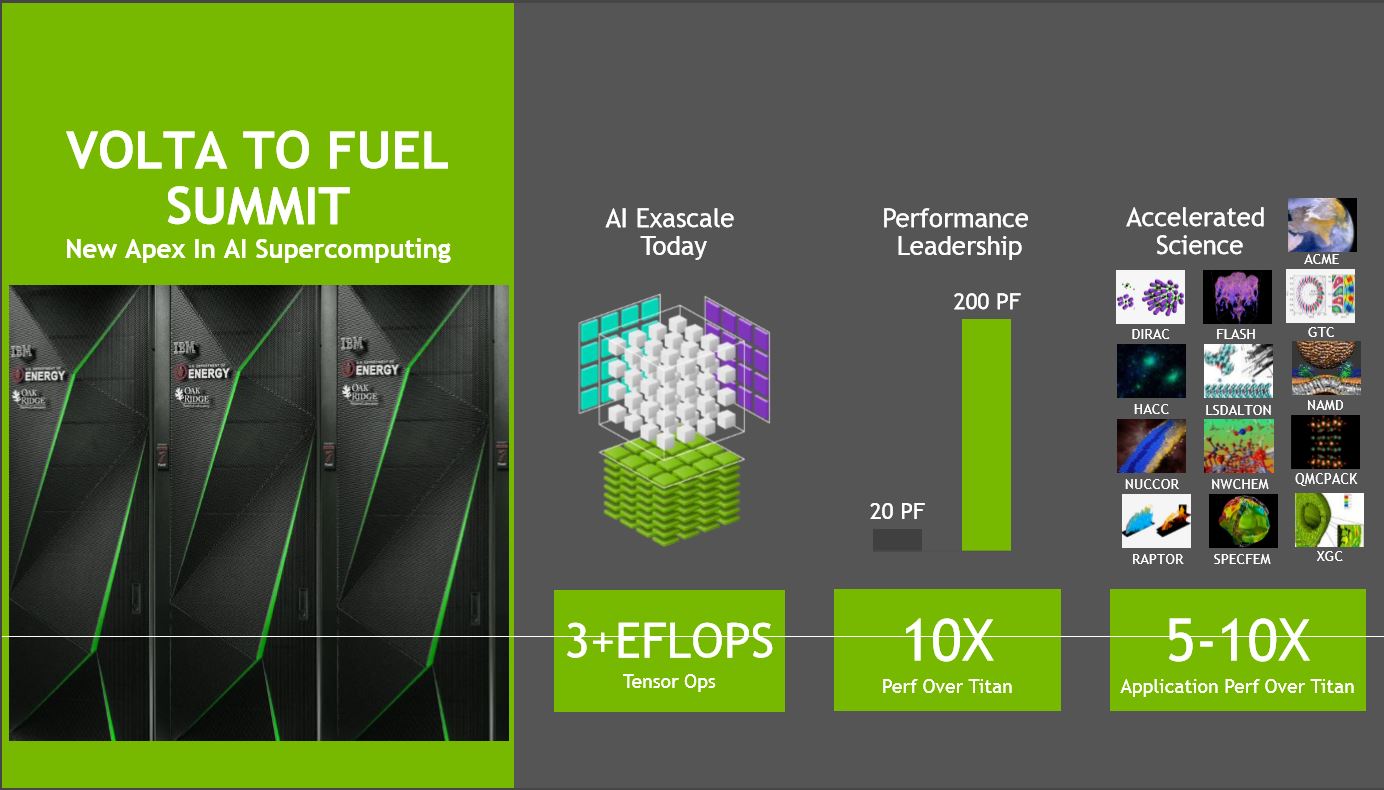

Amazingly, Nvidia claimed that the SaturnV will easily land in the top 10 AI supercomputers worldwide, and might even enter the top five once completed. That's impressive considering that state-run institutions drive many of the world's leading supercomputers. Nvidia and IBM are also developing the Summit supercomputer at Oak Ridge National Laboratory. Summit should unseat the current top supercomputer, which resides in China, with up to three ExaFLOPS of AI performance.

Expanding The Ecosystem

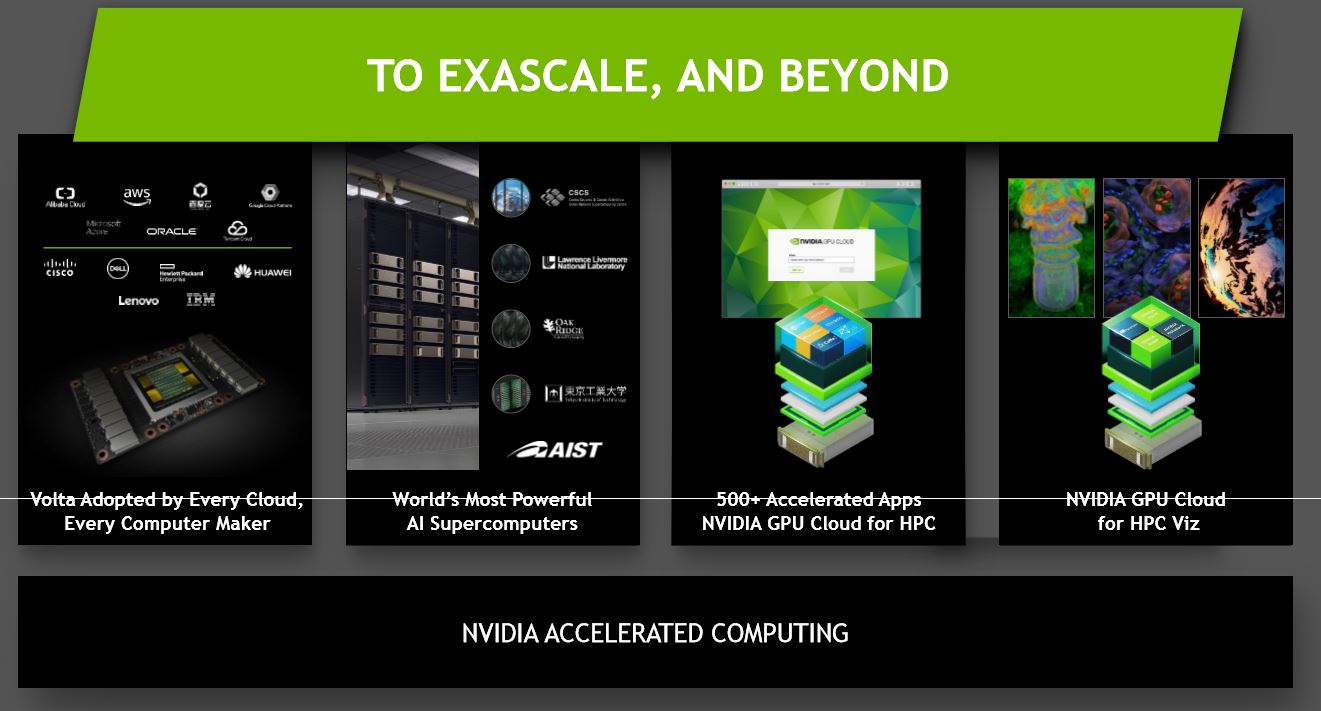

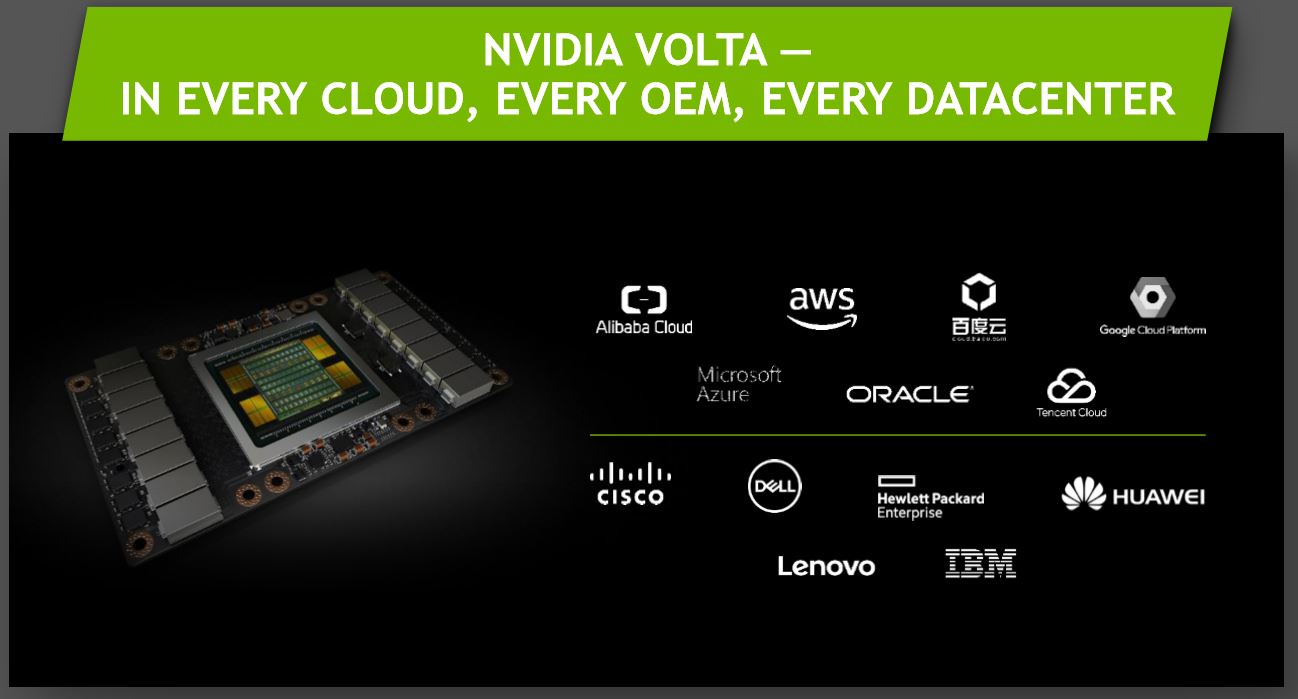

Nvidia announced that its massive V100 GPU, which boasts an 815 mm² die packed with 21 billion transistors and paired with 16GB of HBM2, has been adopted by every major system and cloud vendor. The blue-chip systems roster includes Dell EMC, HPE, Huawei, IBM, Lenovo, and a host of whitebox server vendors. AI development is expanding quickly, and startups, businesses, and academic institutions are rapidly developing new products and capabilities, but purchasing and managing the requisite infrastructure can hinder adoption.

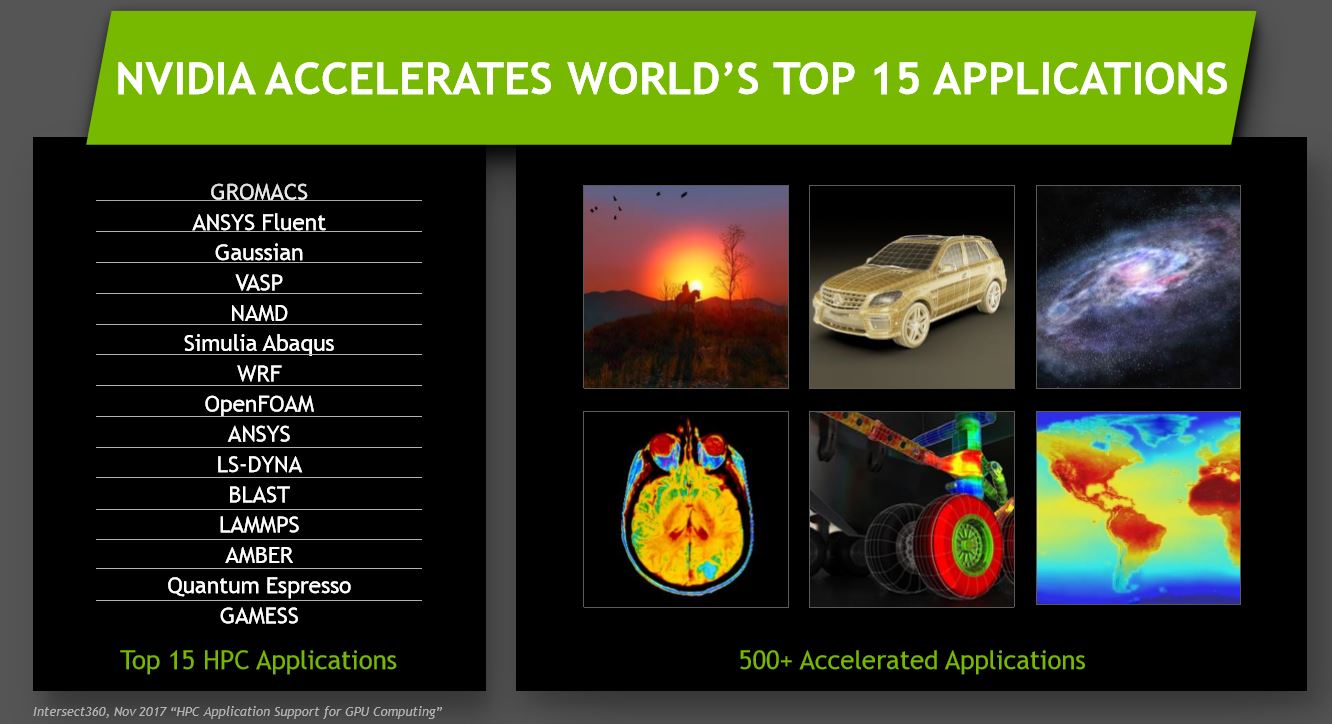

Every major cloud now offers Volta-powered instances, including AWS, Azure, Google Cloud, Alibaba, Tencent, and others. Microsoft recently announced its new cloud-based Volta services, which will be available in November. Nvidia also now supports up to 500 applications with the CUDA framework.

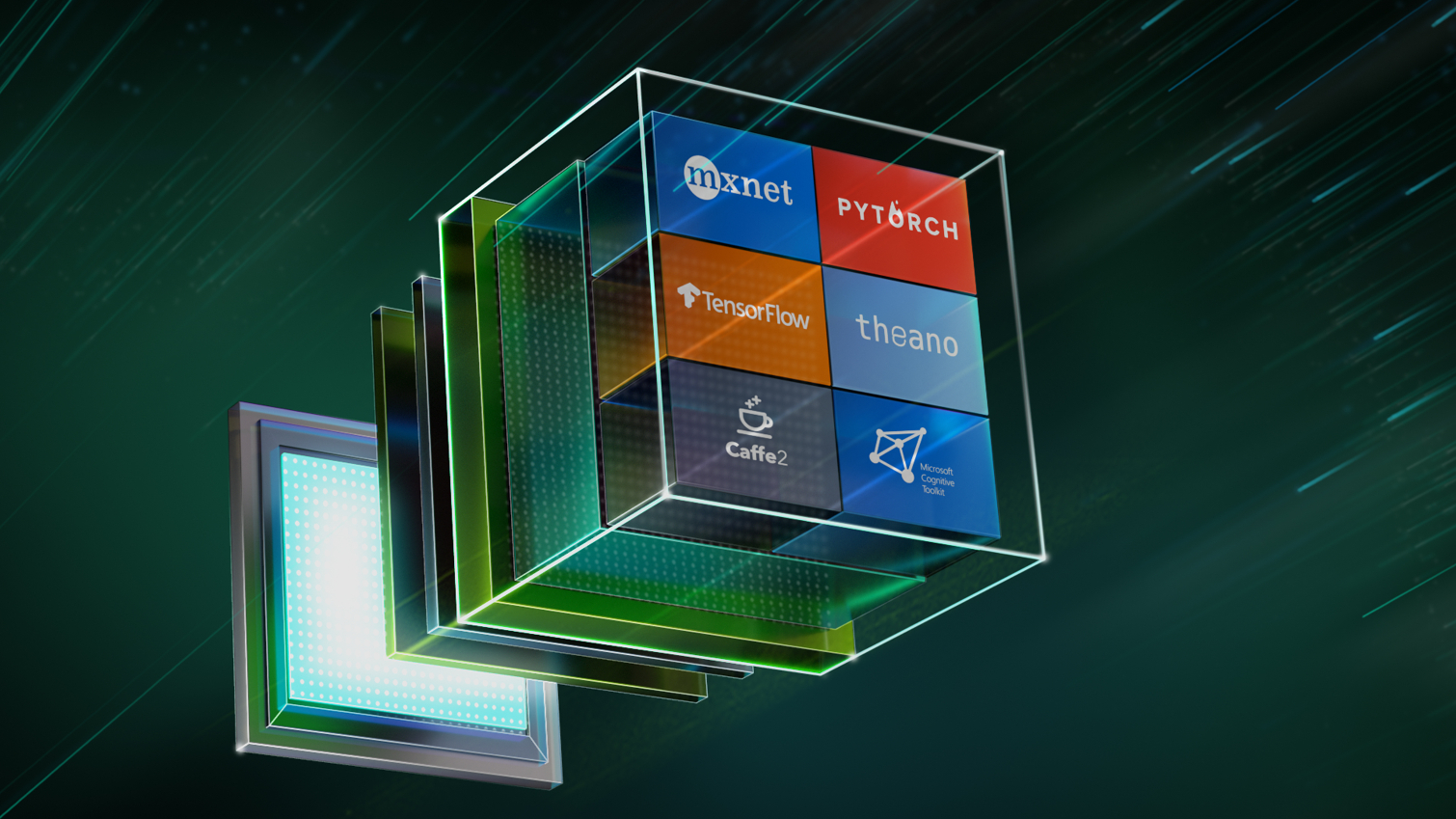

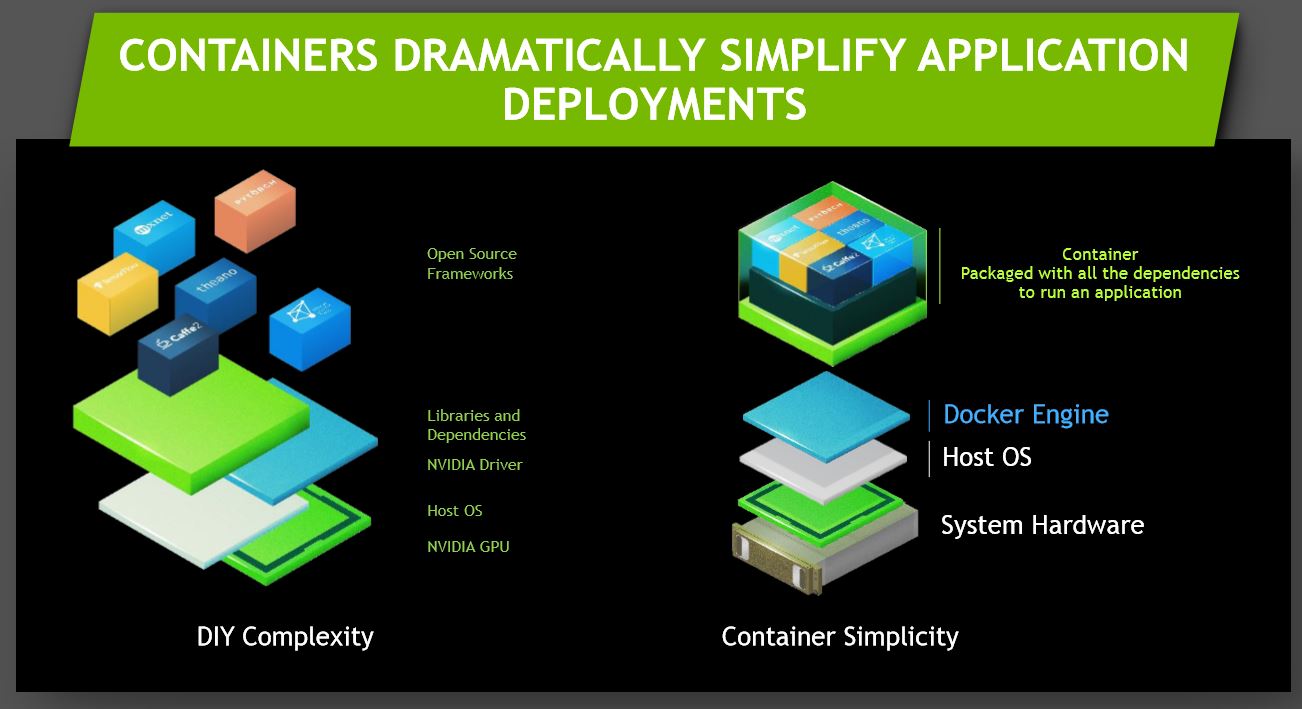

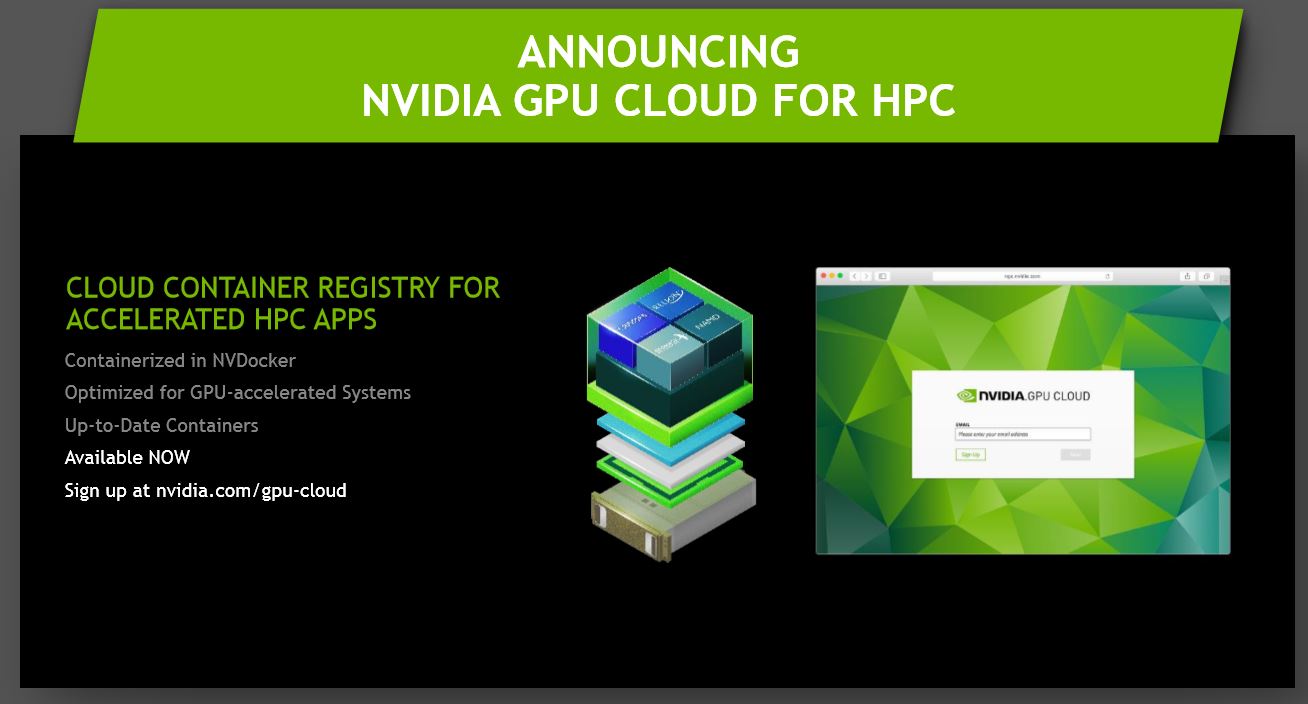

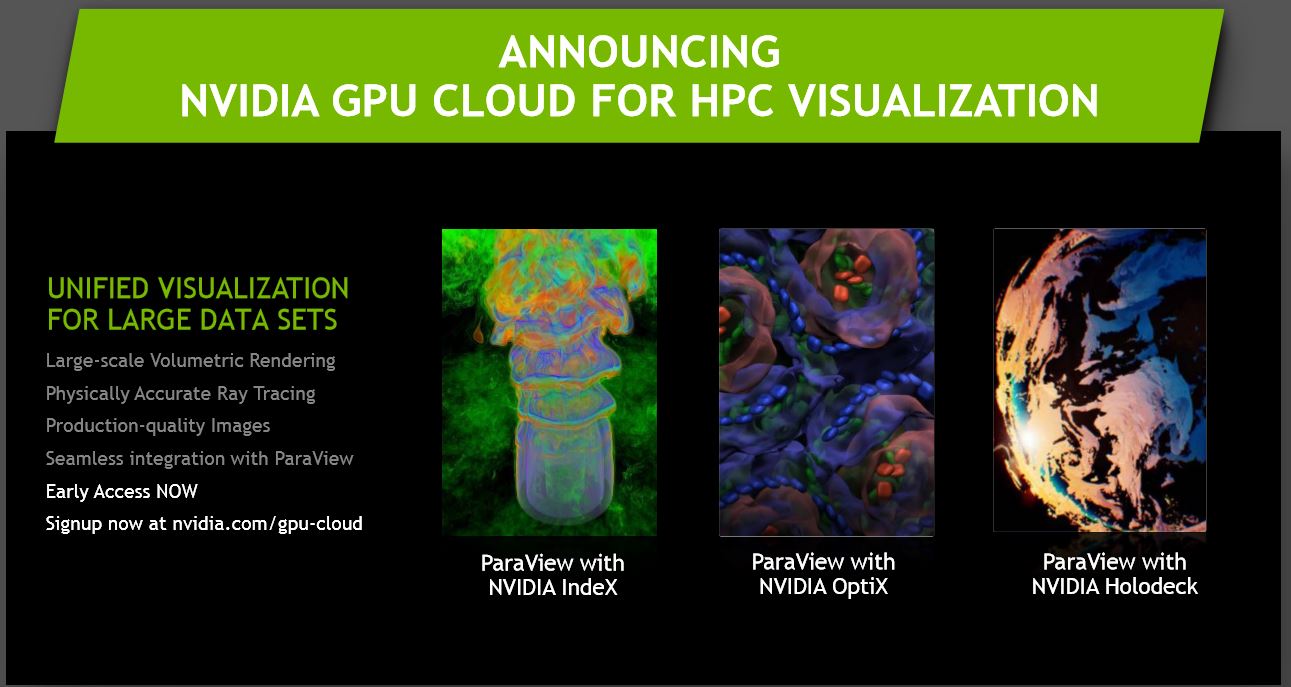

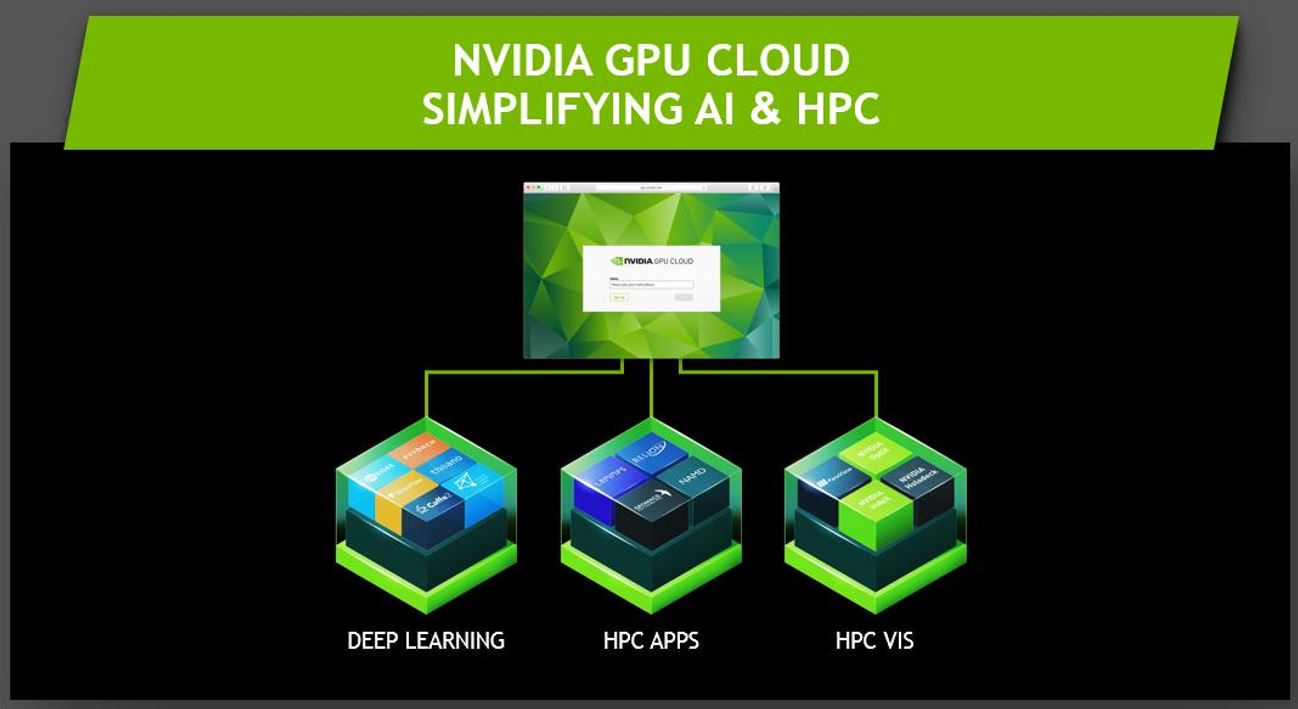

Nvidia is also offering new software and tools on the Nvidia GPU Cloud (NGC), such as new HPC containers that simplify deployment. The containers work with Docker, with support for other container applications coming in the future. The applications run on any Pascal or newer GPU, along with HPC supercomputing clusters and Nvidia's DGX systems. These containers support all the main frameworks, such as Caffe, Torch, PyTorch, and many others. Nvidia has focused on simplifying the process down to a few minutes.

Japan's AIST also incorporated 4,352 Tesla V100 GPUs into its ABCI supercomputer, which provides over 37 PetaFLOPS of FP64 performance, making it the fastest AI supercomputer in Japan. It's the first ExaFLOPS-class AI supercomputer in the world.

We'll be scouring the show floor for some of the latest Tesla V100-powered systems; stay tuned.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

-

bit_user: "660 PetaFLOPS of FP16 performance (nearly an ExaFLOP)"

That's quite generous of you.

:0

I'd leave it at "more than half-way" or maybe "2/3rds of an ExaFLOPS".

And the "S" can't be omitted. A FLOP is not a unit. A FLOPS (Floating Point Operation Per Second) is.